#Reinforcement Learning

50 articles with this tag

Cursor's AI Agents Get Worktree Boost

David Gomes of Cursor detailed the integration of Git worktrees into AI agents, enabling isolated task execution and reducing code complexity.

AI Engineer: Small Models, Big Impact

Maxime Labonne of Liquid AI discusses the unique challenges and advantages of small AI models, detailing their architecture, training, and techniques to overcome issues like doom looping.

Together AI Slashes RL Training Time

Together AI's new distribution-aware speculative decoding slashes RL training time by up to 50%, tackling a major bottleneck in LLM post-training.

Verifiable Reasoning in MLLMs

The V-tableR1 framework enables verifiable, multi-step reasoning in MLLMs by grounding logic in visual data, achieving SOTA on tabular benchmarks.

UniDoc-RL: Finer-Grained Visual RAG

UniDoc-RL enhances LVLMs with fine-grained visual RAG via hierarchical RL, active perception, and multi-reward training, achieving state-of-the-art results.

Pre-training Space RL for Enhanced LLM Reasoning

New PreRL framework optimizes LLM reasoning by directly refining the pre-training distribution P(y), enhanced by Negative Sample Reinforcement and Dual Space RL.

Agentic RLHF Needs New Benchmarks

New benchmark Plan-RewardBench reveals current RMs struggle with agentic tool use and long-horizon tasks, highlighting the need for specialized trajectory-level reward modeling.

Agentic Models Bypass Tool Reliance

HDPO framework enables agentic multimodal models to drastically reduce tool use by decoupling accuracy and efficiency optimization, fostering self-reliance without performance loss.

Unlocking AI Agents with Gym-Anything

Gym-Anything enables scalable creation of complex AI agent environments, leading to the vast CUA-World benchmark and more efficient VLM agents.

LLMs Learn to Play Tic-Tac-Toe with Reinforcement Learning

Stefano Fiorucci discusses the power of reinforcement learning for training LLMs, showcasing Tic-Tac-Toe as a case study for building interactive environments and improving model capabilities.

Together AI's Aurora Learns on the Fly

Together AI's Aurora framework uses RL to continuously adapt speculative decoding for faster LLM inference, outperforming static models.

Personalized Driving with Vega

The Vega vision-language-action model enhances autonomous driving by enabling personalized, instruction-based navigation through a novel dataset and hybrid AI architecture.

Agent-Designing Agents Emerge

Memento-Skills introduces an agent-designing agent that autonomously creates and refines specialized LLM agents through skill evolution, bypassing core LLM retraining.

OS-Themis: Scalable Rewards for Robust RL

OS-Themis, a new multi-agent critic framework, revolutionizes GUI agent training by providing scalable, accurate rewards through milestone decomposition and evidence auditing.

Enhancing LLM Trust via Instruction Hierarchy

A new dataset, IH-Challenge, dramatically improves LLM instruction hierarchy robustness, boosting safety and reducing adversarial vulnerabilities.

Databricks Buys Quotient AI

Databricks acquires Quotient AI to enhance AI agent reliability and performance in production environments, integrating its evaluation technology into key products.

Databricks' KARL Cuts Agent Costs

Databricks' new KARL AI agent drastically cuts costs and latency for enterprise knowledge tasks using custom reinforcement learning.

RLAIF Explained: Latent Values in LLMs

Human values are latent directions in LLM representations, activated by constitutional prompts, with the alignment ceiling tied to model capacity and data quality.

AI Governance: Optimization's Normative Limits

A new arXiv paper argues that optimization-based AI systems, including RLHF-trained LLMs, are formally incapable of normative governance due to inherent structural limitations.

AI Agents Learn to Cooperate Without Rules

Google researchers propose a simpler way for AI agents to cooperate: train them against diverse opponents, leveraging in-context learning to drive mutual cooperation through 'extortion' dynamics.

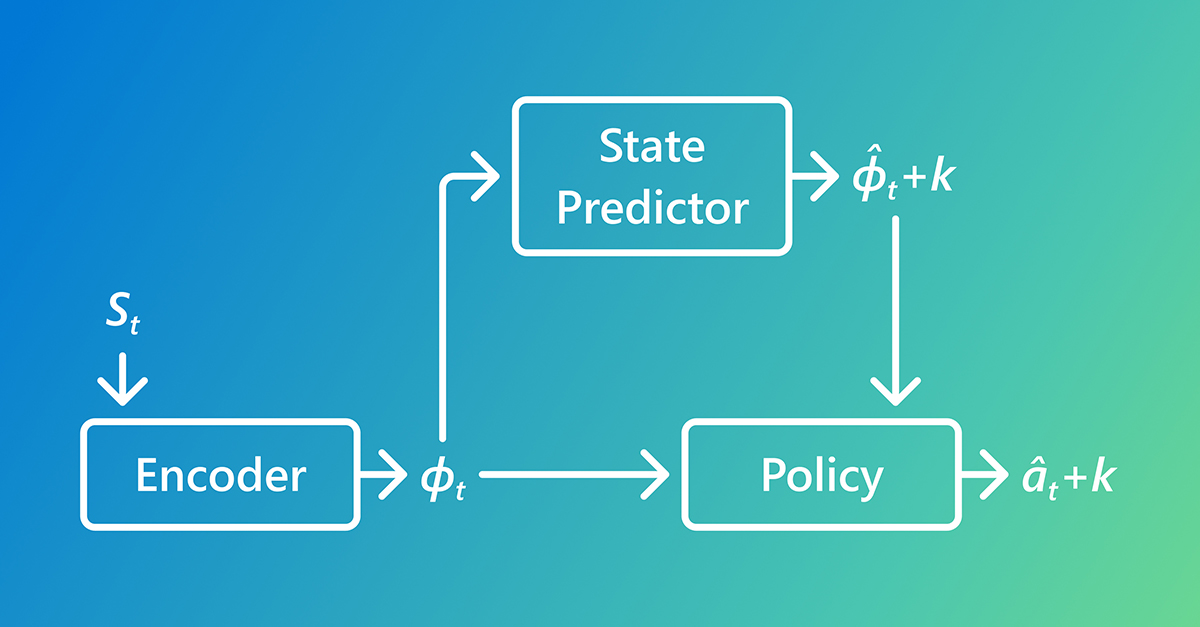

AI Learns Faster by Predicting the Future

AI learns faster with Predictive Inverse Dynamics Models (PIDMs) by forecasting future states, making imitation learning more data-efficient than traditional methods.

RL Fixes Overfitting in AI Radiology Reports

Microsoft Research’s UniRG framework uses reinforcement learning guided by clinical error signals to achieve state-of-the-art performance in AI radiology reports.

Argos Framework Delivers Grounded AI Reasoning

Argos is an agentic verification framework that changes reinforcement learning by rewarding models only for grounded reasoning based on verifiable evidence.

DeepMind, OpenAI Vets Launch humans& for Human-Centered AI

A new frontier AI lab, humans&, launched by veterans of DeepMind and OpenAI, aims to pivot the industry toward truly human-centered AI focused on collaboration and trust.

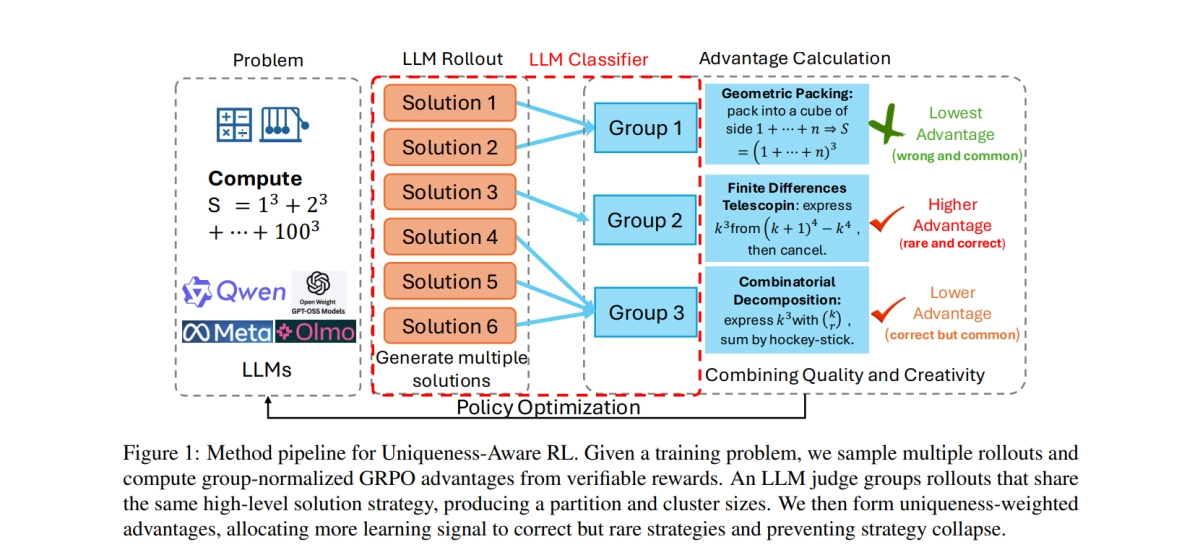

Uniqueness-Aware RL stops LLMs from getting lazy

Uniqueness-Aware RL prevents LLMs from converging on a single solution path by explicitly rewarding correct answers that employ rare problem-solving strategies.

Poolside’s Full-Stack Bet: Building AGI Agents from Data Centers to Code Completion

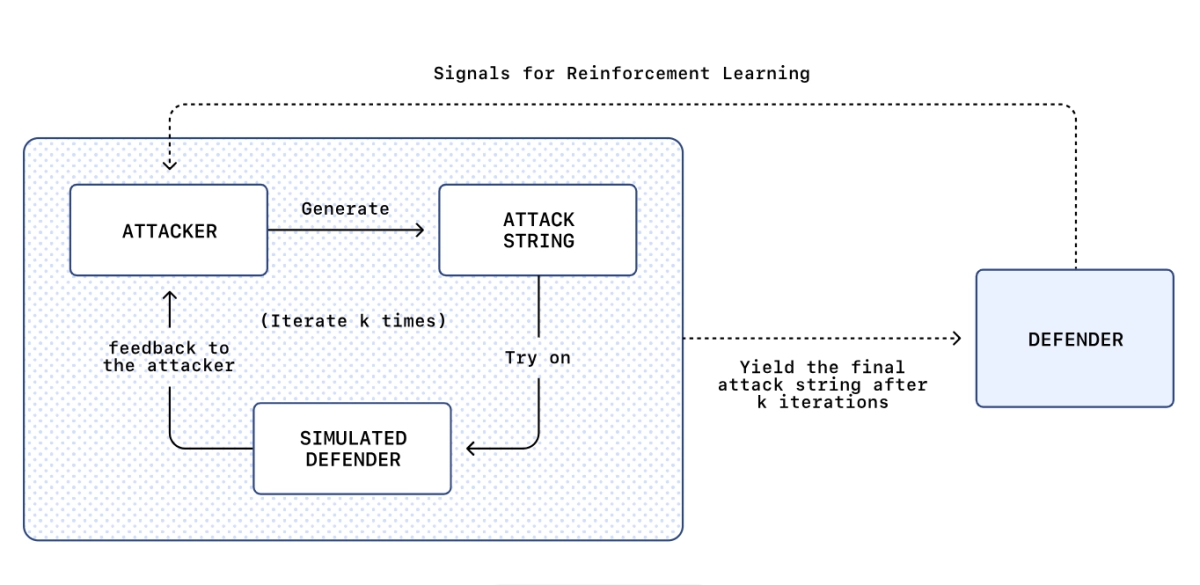

ChatGPT prompt injection is so bad they built an AI attacker

LLM Agent Reinforcement Learning Gets Practical

OpenAI Unveils Agent RFT: Revolutionizing AI with Self-Improving Tool-Using Models

AI Model Confessions: A New Honesty Layer

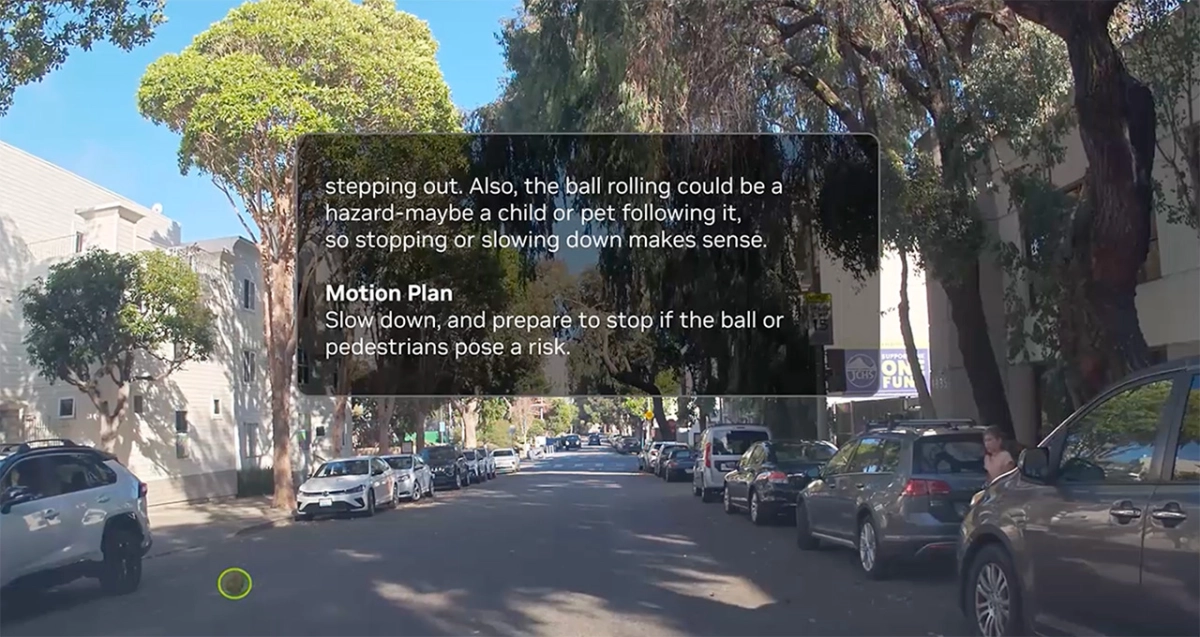

NVIDIA Autonomous Driving AI Gains Human-Like Reasoning

DR Tulu deep research: Open AI closes proprietary gap

AlphaProof system proves its worth at the Math Olympiad

OpenAI’s Agent RFT: Boosting Autonomous AI Performance Through Tailored Reinforcement Learning

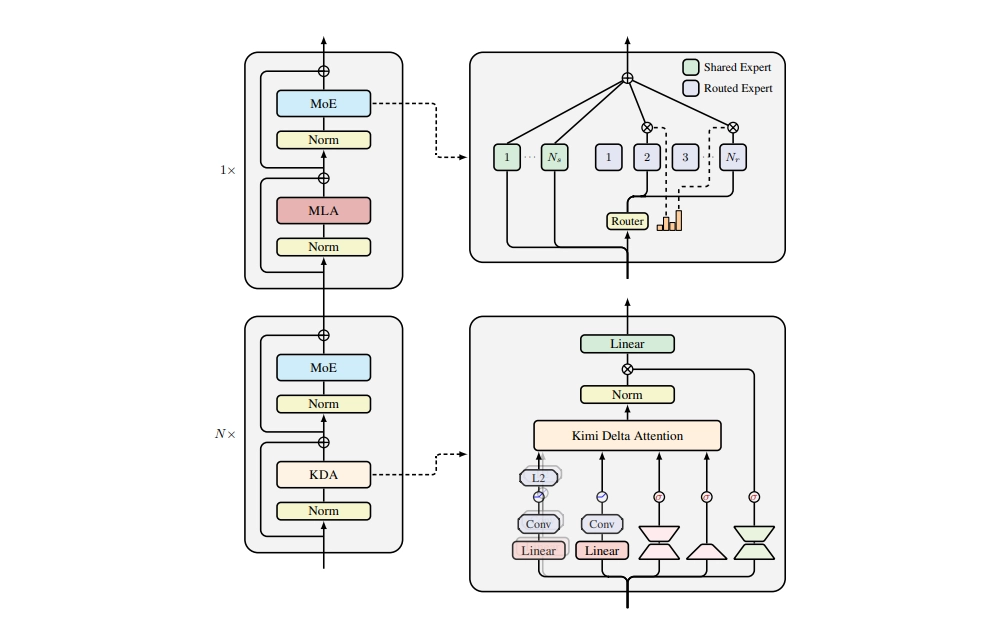

Kimi Linear promises to beat full attention with less memory

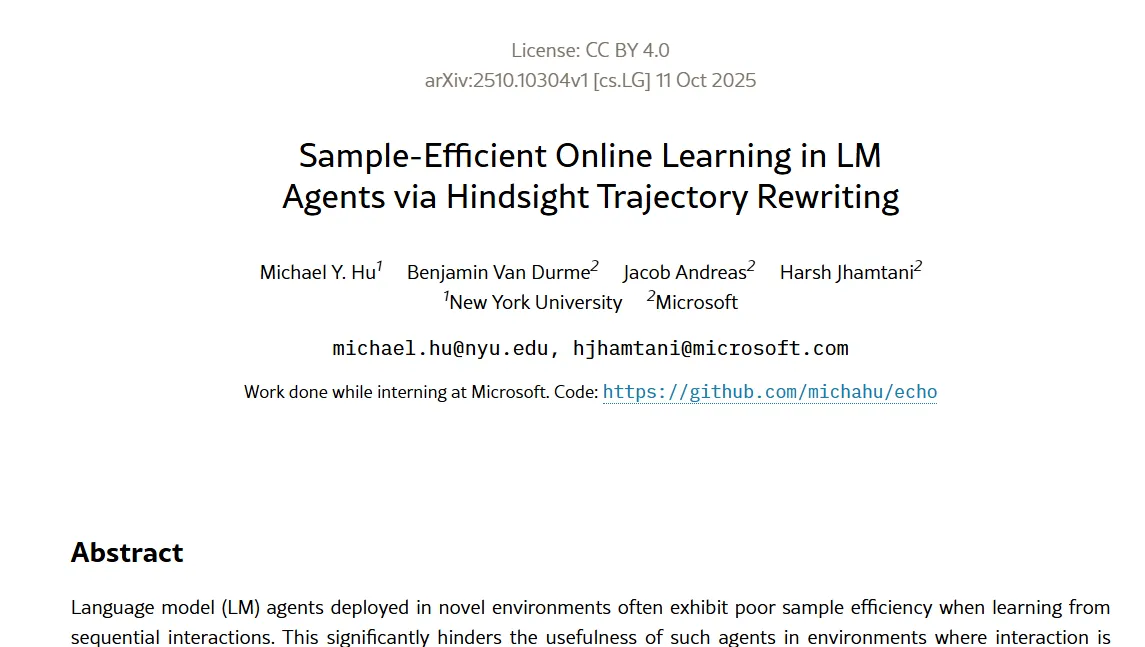

Microsoft's ECHO Language Model learns from failure

Cognition's New Bet on Fast Context Retrieval

Reinforcement Fine-Tuning: Osmosis AI fine-tunes agents past FMs

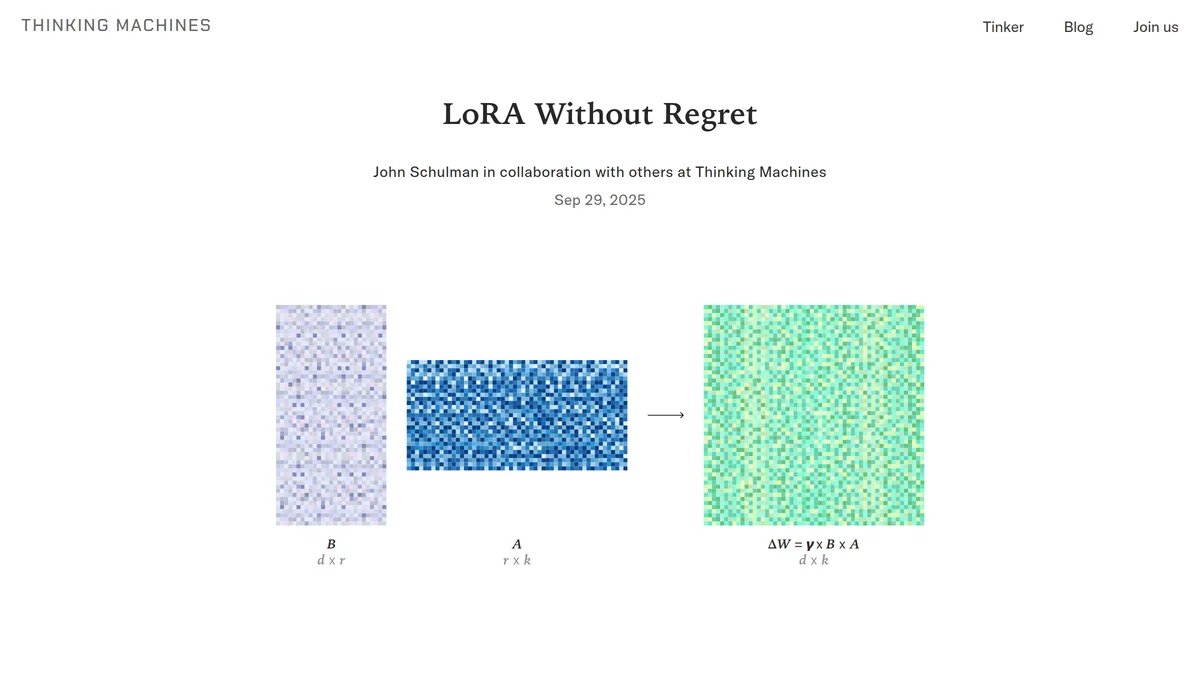

LoRA vs full fine-tuning: The debate is over

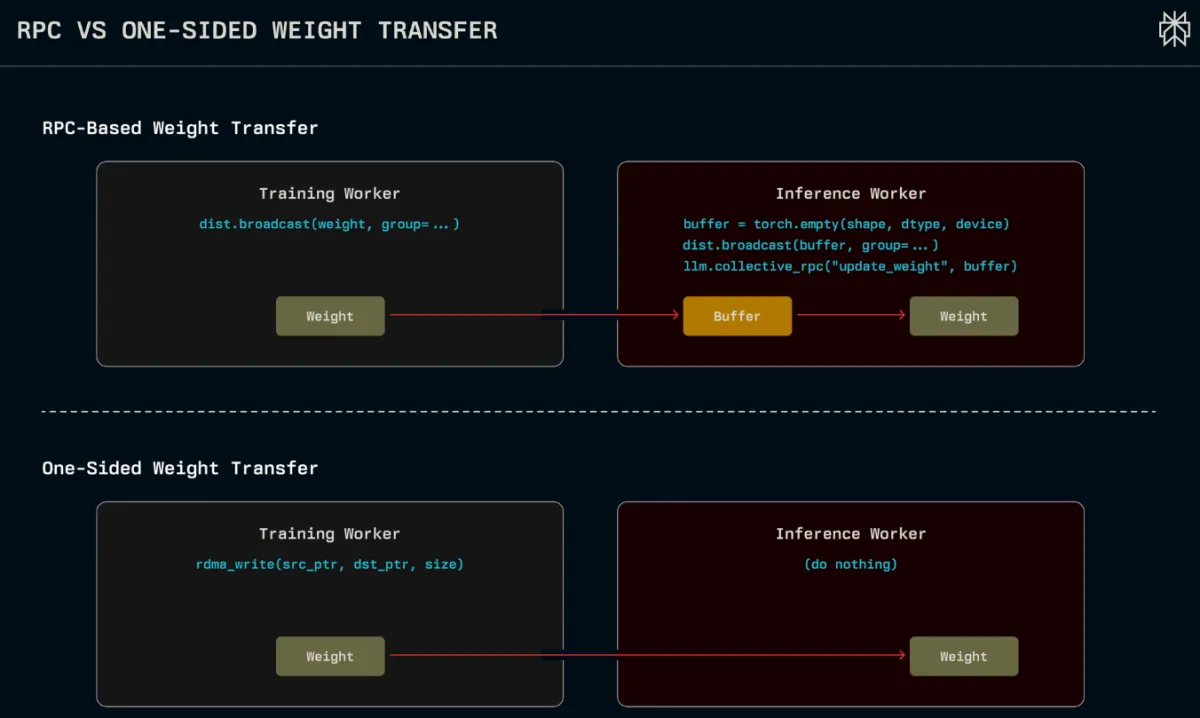

New Fast GPU Weight Transfer Syncs Trillion-Parameter AI in 1.3s

Updating the brains of a massive AI model used to be a sluggish affair, often taking minutes to sync new knowledge from a training cluster to a live inference c...

OpenAI's Leap Towards Reasoning and Automated Discovery with GPT-5

AI's Dual Realities: Hallucinations, Augmentation, and the Micro-Model Frontier

Reinforcement Fine-Tuning: Elevating AI Reasoning with Grader-Driven Optimization

OpenAI's Hallucination Breakthrough: A Feature, Not a Bug, and How to Fix It

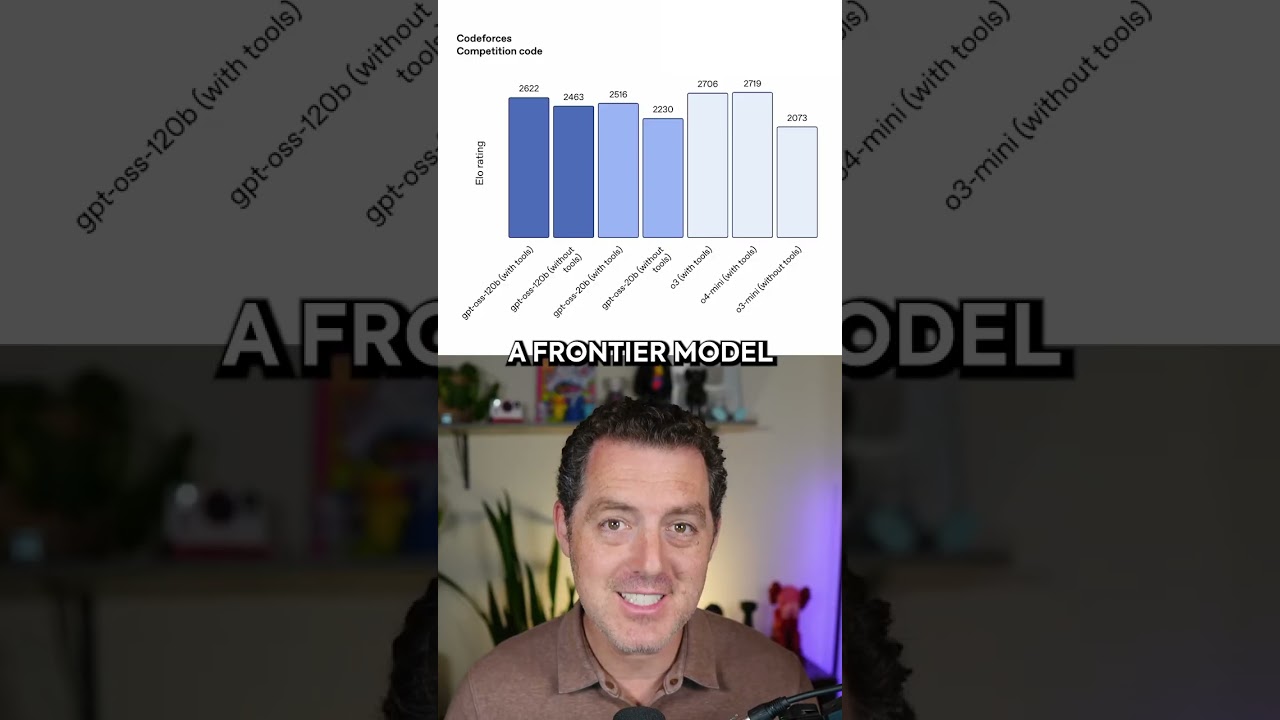

OpenAI’s Open-Weight GPT-OSS Challenges AI Landscape

OpenAI’s AI Achieves Gold at International Math Olympiad, Unveiling Path to General Reasoning

AI's Predictable Ascent: Scaling Laws Reshape the Path to Human-Level Intelligence

DeepSeek's Reasoning Leap Reshapes AI Scaling Paradigms