The Achilles’ heel of modern multimodal AI is hallucination: the tendency to generate plausible, confident outputs that are disconnected from the actual sensory input. This lack of grounding poses a serious safety and reliability risk, particularly as AI agents move into robotics and autonomous systems. Microsoft Research has introduced Argos, an agentic verification framework that restructures reinforcement learning (RL) by rewarding models only for grounded reasoning, that is, reasoning rooted in verifiable evidence. The approach shifts the focus from merely reaching a correct answer to ensuring the agent reaches that answer for the right, observable reasons.

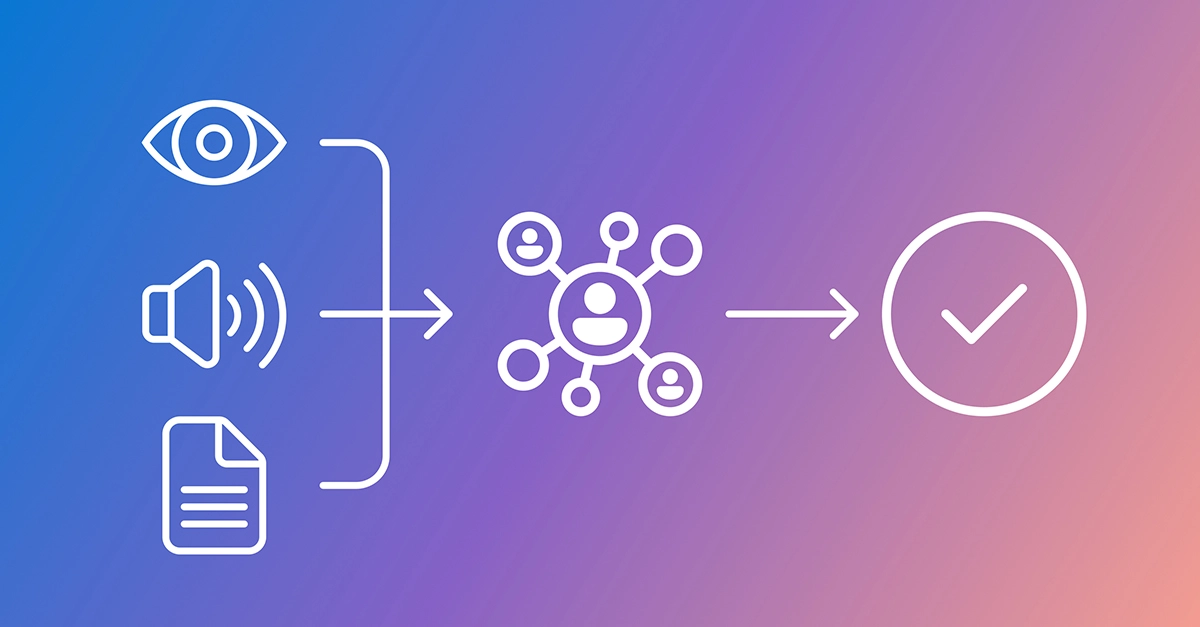

Argos changes the standard RL setup by adding a verification layer that scrutinizes the agent’s internal logic, not just its final output. Conventional RL rewards only the final correct behavior, which can inadvertently encourage models to find ungrounded shortcuts that exploit dataset biases or ignore visual evidence. Argos uses specialized tools and larger, more capable teacher models to verify two conditions: first, that the objects and events referenced by the model actually exist in the input data, and second, that the model’s reasoning steps align with those observations. Because the reward depends on both checks, the model is optimized for reliability rather than superficial performance metrics.
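To make the two-condition check concrete, here is a minimal Python sketch of a reward that is granted only when the answer is correct and the reasoning is grounded. It is an illustration under stated assumptions, not Argos's actual implementation: the `ReasoningStep` structure, the `grounded_reward` function, the all-or-nothing 0/1 reward, and the toy verifiers are all hypothetical. In a real system, the two callables would stand in for the specialized tools and teacher models.

```python
from dataclasses import dataclass
from typing import Callable, Sequence, Tuple


@dataclass(frozen=True)
class ReasoningStep:
    """One step of the model's stated reasoning (hypothetical structure)."""
    claim: str
    referenced_objects: Tuple[str, ...]


def grounded_reward(
    answer_correct: bool,
    steps: Sequence[ReasoningStep],
    object_exists: Callable[[str], bool],
    step_supported: Callable[[ReasoningStep], bool],
) -> float:
    """Return 1.0 only if the answer is correct AND every step is grounded.

    Assumption: an all-or-nothing reward, chosen for clarity. Empty
    reasoning is treated as ungrounded.
    """
    if not answer_correct or not steps:
        return 0.0
    for step in steps:
        # Condition 1: every referenced object must exist in the input.
        if not all(object_exists(name) for name in step.referenced_objects):
            return 0.0
        # Condition 2: the step itself must align with the observations.
        if not step_supported(step):
            return 0.0
    return 1.0


# Toy stand-ins for the verifiers (hypothetical data, not real tool output).
detected_objects = {"cup", "table"}
verified_claims = {"the cup is on the table"}


def object_exists(name: str) -> bool:
    return name in detected_objects


def step_supported(step: ReasoningStep) -> bool:
    return step.claim in verified_claims


grounded = [ReasoningStep("the cup is on the table", ("cup", "table"))]
shortcut = [ReasoningStep("the dog knocked the cup over", ("dog", "cup"))]

print(grounded_reward(True, grounded, object_exists, step_supported))  # 1.0
print(grounded_reward(True, shortcut, object_exists, step_supported))  # 0.0
```

The second call shows the intended effect: a correct final answer earns no reward when the reasoning cites an object that is absent from the input, so an ungrounded shortcut is not reinforced.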