NVIDIA used NeurIPS to unveil its latest open-source work on autonomous driving AI. The company introduced NVIDIA DRIVE Alpamayo-R1 (AR1), which it bills as the world's first industry-scale open reasoning vision language action (VLA) model built specifically for autonomous vehicles. The release points to a shift toward more human-like decision-making in self-driving systems: rather than relying only on reactive pattern recognition, the vehicle is meant to interpret the context of a scene before acting, a long-sought goal in the AV sector.

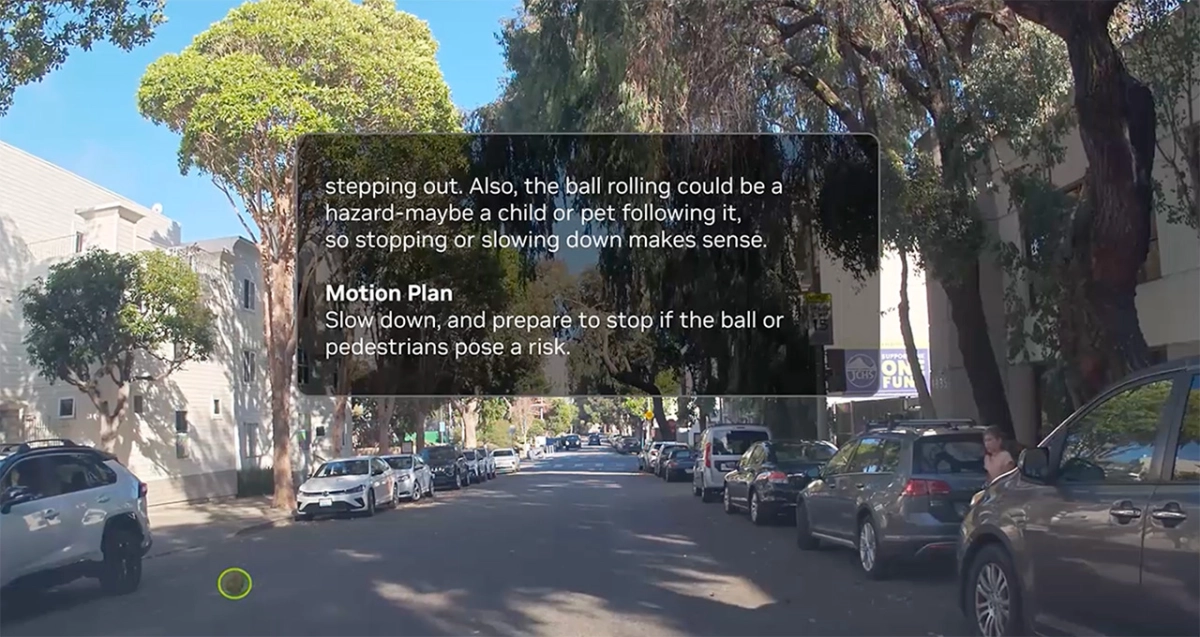

AR1's main architectural change is that it integrates chain-of-thought reasoning, a technique best known from large language models, directly into the vehicle's path planning. This lets an autonomous vehicle break a complex or ambiguous scenario into steps, such as navigating a pedestrian-heavy intersection, responding to an unexpected lane closure, or maneuvering around a vehicle double-parked in a bike lane. According to the announcement, this kind of "common sense" lets AVs drive more like humans and make nuanced decisions with greater safety and precision, which is fundamental to achieving Level 4 autonomy. By considering candidate trajectories and using contextual data to choose the best route, AR1 is intended to bring AVs closer to reliable operation in unpredictable real-world environments, a persistent bottleneck in AV development.
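The idea of reasoning step by step over candidate trajectories can be made concrete with a toy example. The sketch below is not AR1's implementation or API: the `Candidate` fields, the `reason_and_choose` function, the context keys, and every threshold and penalty are hypothetical values chosen only for illustration. It shows a planner that records each reasoning step in a trace while it filters and scores a handful of hand-written trajectories.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """A hypothetical candidate trajectory, summarized by a few features."""
    name: str
    min_gap_m: float         # closest approach to any other road user, in metres
    delay_s: float           # extra travel time versus the nominal route, in seconds
    crosses_bike_lane: bool  # whether the path enters a bike lane


def reason_and_choose(candidates, context):
    """Return (best_candidate, reasoning_trace) for a toy scene.

    All thresholds and penalties are illustrative assumptions.
    """
    trace = []

    # Step 1: the scene context decides how much clearance is required.
    required_gap = 2.0 if context.get("pedestrians_nearby") else 1.0
    trace.append(f"required clearance set to {required_gap} m")

    # Step 2: discard candidates that come too close to other road users.
    feasible = [c for c in candidates if c.min_gap_m >= required_gap]
    trace.append(f"{len(feasible)} of {len(candidates)} candidates keep enough clearance")
    if not feasible:
        trace.append("no feasible candidate; fall back to stopping")
        return None, trace

    # Step 3: score what remains; entering a bike lane costs extra
    # only when a cyclist is actually present.
    def cost(c):
        penalty = 5.0 if (c.crosses_bike_lane and context.get("cyclist_present")) else 0.0
        return c.delay_s + penalty

    best = min(feasible, key=cost)
    trace.append(f"chose '{best.name}' with cost {cost(best):.1f}")
    return best, trace


if __name__ == "__main__":
    # Double-parked vehicle scenario with three hand-written options.
    options = [
        Candidate("swerve_into_bike_lane", min_gap_m=2.5, delay_s=1.0, crosses_bike_lane=True),
        Candidate("wait_then_pass", min_gap_m=3.0, delay_s=4.0, crosses_bike_lane=False),
        Candidate("squeeze_past", min_gap_m=0.8, delay_s=0.5, crosses_bike_lane=False),
    ]
    scene = {"pedestrians_nearby": True, "cyclist_present": True}
    choice, steps = reason_and_choose(options, scene)
    for step in steps:
        print(step)
```

The trace is the point of the exercise: the same candidates lead to a different choice when the context changes, and the recorded steps explain why. A real VLA model would produce both the reasoning and the trajectory from sensor input rather than from hand-coded rules like these.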