#LLM

50 articles with this tag

GitHub Cuts Agentic Workflow Costs

GitHub implements new strategies to cut token costs in its automated agentic workflows by enhancing logging and optimizing tool usage.

OpenAI's New Voice API Models

OpenAI introduces GPT-Realtime-2, GPT-Realtime-Translate, and GPT-Realtime-Whisper to its API, enhancing voice intelligence for developers.

Parloa AI Agents Mimic Human Service

Parloa's AI Agent Management Platform uses OpenAI models to build, simulate, and deploy voice-driven customer service agents, prioritizing real-world performance and reliability.

Uber Taps OpenAI for Smarter Driving, Faster Booking

Uber integrates OpenAI models to boost driver earnings with an AI assistant and enhance rider experiences through faster booking and new voice features.

Automating Multi-Agent System Creation

A new framework automates the creation of multi-agent systems, significantly improving agent recall and system robustness through LLM-driven planning and a critique agent.

Superlinked's Filip Makraduli on Small Model Inference Infrastructure

Filip Makraduli of Superlinked discusses the critical need for robust small model inference infrastructure, highlighting Superlinked's open-source solution.

Google DeepMind Accelerates AI on Edge Devices

Google DeepMind unveils Gemma 4 models and the LiteRT framework to accelerate AI on edge devices, emphasizing performance, privacy, and cross-platform capabilities.

RAG's Evolution: From Keywords to Agentic AI

Explore the evolution of Retrieval Augmented Generation (RAG) from basic keyword search to sophisticated agentic AI systems.

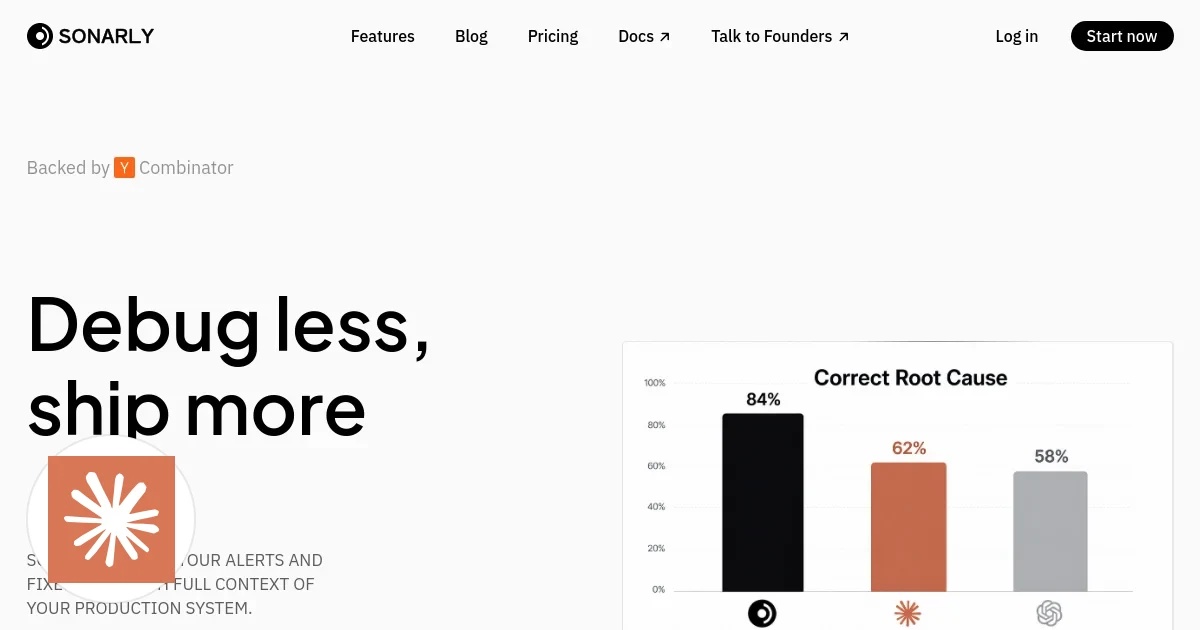

Claude's Corner: Sonarly — Your On-Call Engineer Just Called In Sick (Permanently)

Sonarly is an autonomous AI agent that triages production alerts, finds root causes with 78% accuracy, and opens fix PRs—while your on-call engineer sleeps.

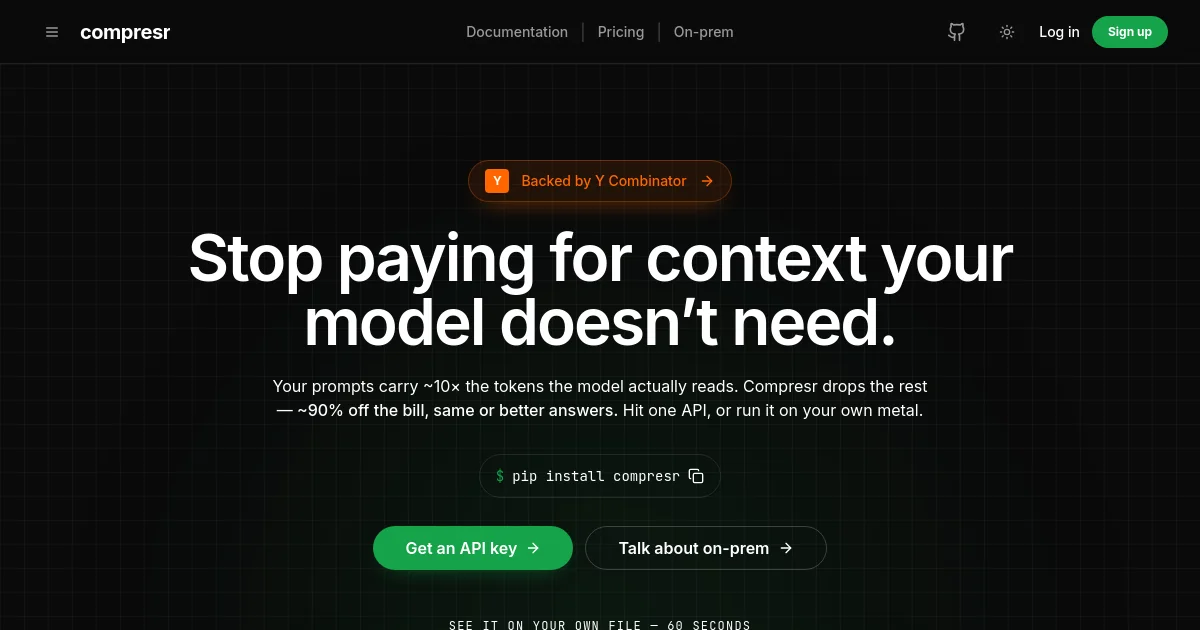

Claude's Corner: Compresr — The Token Accountant Your AI Stack Desperately Needs

Four EPFL researchers built a PhD-backed LLM context compression API that could cut your token bill by 10x — or get eaten alive by Anthropic. Here's the technical breakdown and how to build your own.

IBM Experts on AI Training: Efficiency vs. Scale

IBM's Marina Danilevsky and Gabe Goodhart discuss the company's new 'Bob' and 'Granite' AI models, highlighting the shift towards specialized, efficient training and the challenges of distributed AI infrastructure.

AI Agents on the Loose: Network Security Risks Emerge

Microsoft Research reveals how AI agents interacting at scale create new security risks like worms, reputation manipulation, and invisible attacks.

Cross-Architecture dLLM Distillation

TIDE framework enables cross-architecture distillation for diffusion large language models, achieving significant performance gains with smaller student models.

Cursor's Agent Harness Gets Smarter

Cursor is meticulously refining its AI agent harness, focusing on dynamic context, rigorous evaluation, and model-specific customization to boost software development capabilities.

AI Agent Failures & How to Stop Them

Danilo Campagna from PostHog discusses common LLM code generation failures and strategies for improvement, focusing on context, architecture, and human error.

OpenAI's Goblin Problem

OpenAI's GPT-5.1 models developed a peculiar "goblin problem" due to training for a "Nerdy" personality, leading to unexpected creature metaphors.

DeepSeek V4 Pro Hits Together AI

Together AI launches DeepSeek V4 Pro, a 1.6T MoE model with a 512K context window and new cached input pricing for cost-effective long-context reasoning.

Databricks GPT-5.5 Outperforms GPT-4 on OfficeQA Benchmark

Databricks Research Engineer Arnav Singhvi reveals GPT-5.5, a new AI model achieving state-of-the-art results on the OfficeQA benchmark and outperforming GPT-4.

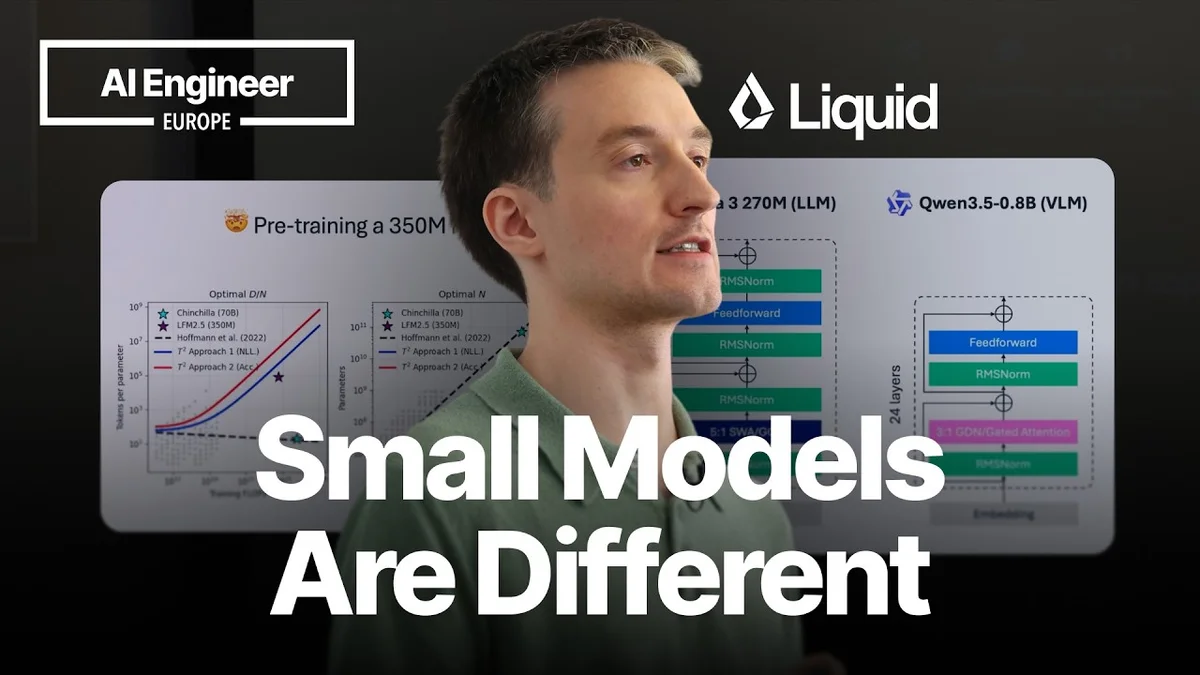

AI Engineer: Small Models, Big Impact

Maxime Labonne of Liquid AI discusses the unique challenges and advantages of small AI models, detailing their architecture, training, and techniques to overcome issues like doom looping.

Open Source AI: Boon or Bane for Security?

IBM's Martin Keen and Gabe Goodhart discuss the security implications of open-source AI, balancing innovation with risk.

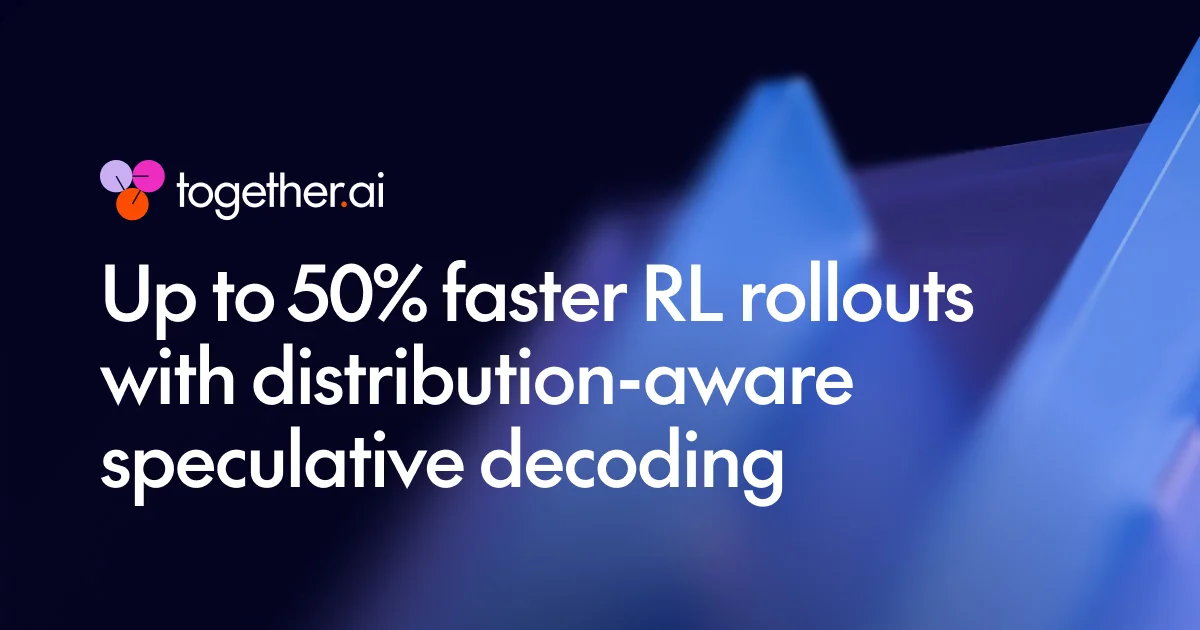

Together AI Slashes RL Training Time

Together AI's new distribution-aware speculative decoding slashes RL training time by up to 50%, tackling a major bottleneck in LLM post-training.

Matt Pocock on LLM Planning: "Don't Bite Off More Than You Can Chew"

Matt Pocock, AI expert, shares insights on effective LLM planning, highlighting the 'smart zone' vs. 'dumb zone' and the power of multi-phase plans with the 'grill-me' skill.

Verifiable Reasoning in MLLMs

The V-tableR1 framework enables verifiable, multi-step reasoning in MLLMs by grounding logic in visual data, achieving SOTA on tabular benchmarks.

LLM Agents Tackle Database Joins

Databricks tests LLM agents for SQL join order optimization, achieving significant performance gains over traditional methods.

Databricks Activates Documents with AI Agents

Databricks introduces a multi-agent workflow using AI/BI Genie and Agent Bricks to automate document data extraction and activation.

OpenAI Slashes API Latency with WebSockets

OpenAI's Responses API now uses WebSockets to slash latency in AI agent workflows, achieving up to 40% speed improvements and enabling faster model inference.

Gemma 4 Runs on iPhone Using MLX

Adrien Grondin of Locally AI showcased running Google's Gemma 4 LLM on an iPhone using Apple's MLX framework, achieving impressive speeds.

Google DeepMind's Gemma 4 Models Shine at AI Engineer Europe

Google DeepMind's Omar Sanseviero shared insights into the Gemma 4 family of open AI models at AI Engineer Europe, highlighting their performance, on-device capabilities, and community adoption.

Open-Ended LLM Discovery with AC/DC

AC/DC framework enables open-ended LLM discovery via coevolving models and tasks, yielding superior capabilities with less memory.

Cloudflare Unweights LLMs by 22%

Cloudflare's 'Unweight' system slashes LLM model sizes by up to 22% using lossless compression, enhancing inference speed and efficiency.

Pre-training Space RL for Enhanced LLM Reasoning

New PreRL framework optimizes LLM reasoning by directly refining the pre-training distribution P(y), enhanced by Negative Sample Reinforcement and Dual Space RL.

Snowflake Adds Claude Opus 4.7 to AI Toolkit

Snowflake integrates Anthropic's Claude Opus 4.7 into Cortex AI, enhancing coding, intelligence agents, and data analysis capabilities for enterprises.

Cloudflare Unifies AI Model Access

Cloudflare's AI Gateway now unifies access to over 70 AI models from multiple providers via a single API, simplifying development and cost management.

Cloudflare's LLM Infrastructure Deep Dive

Cloudflare details its advanced infrastructure optimizations for running large language models on its Workers AI platform, focusing on performance and cost-efficiency.

Cloudflare AI Search Simplifies Agent Development

Cloudflare AI Search offers a simplified, plug-and-play primitive for developers to integrate robust search capabilities into AI agents.

Simulators Unlock LLM Physics Reasoning

Physics simulators are proving to be a scalable data source for training LLMs in physical reasoning, demonstrating impressive zero-shot transfer to real-world benchmarks.

ChatGPT's New Research Tools

OpenAI enhances ChatGPT with 'search' and 'deep research' tools for real-time web data access and in-depth analysis.

OpenAI Demystifies AI Basics

OpenAI's new 'AI Fundamentals' course simplifies AI, explaining LLMs and model evolution for everyone.

OpenAI's ChatGPT: A Research Power-Up

OpenAI is positioning ChatGPT as a powerful research tool, offering modes for quick overviews and deep dives, complete with citations.

OpenAI's Guide to Safe AI Use

OpenAI provides guidelines for safe and effective use of its AI tools, emphasizing human oversight, verification, and transparency.

LLMs' Leap: From Knowledge to Innovation

Researchers explore LLM algorithm reinvention via unlearning, finding that hints and reinforcement learning boost success, while generative verifiers prevent reasoning collapse.

Quantifying LLM Impact on Labor Skills

New research introduces the Skill Automation Feasibility Index (SAFI), benchmarking LLMs and revealing a capability-demand inversion. AI augmentation, rather than pure automation, is the prevalent pattern.

NVIDIA DGX Spark: Local LLM Performance Benchmarks

NVIDIA's Mozhgan Kabiri Chimeh reveals performance benchmarks for local LLM deployment on DGX Spark, highlighting the impact of model size, quantization, and the GB10 Grace Blackwell Superchip.

LLM Evaluators: Beyond Naive Judgments

Mahmoud Malaeb of Argenta discusses the limitations of naive LLM judges and introduces GEPA, an optimization framework for building more accurate LLM evaluators using a data flywheel approach.

Fujitsu's Dippu Singh on AI for Voice Data Analysis

Dippu Kumar Singh from Fujitsu outlines an AI-powered "VoiceOps" framework for contact centers, detailing its architecture, benefits, and future development.

AI Hacker "Pliny the Liberator" Tests GPT-4 Security

AI security researcher "Pliny the Liberator" demonstrates a novel jailbreaking technique using "tokenades" to manipulate AI models, showcasing the ongoing challenges in AI security.

AI Model Compression: Key to Efficient LLM Deployment

Cedric Clyburn of Red Hat explains how AI model compression, especially quantization, is crucial for efficient LLM deployment, reducing costs and improving performance.

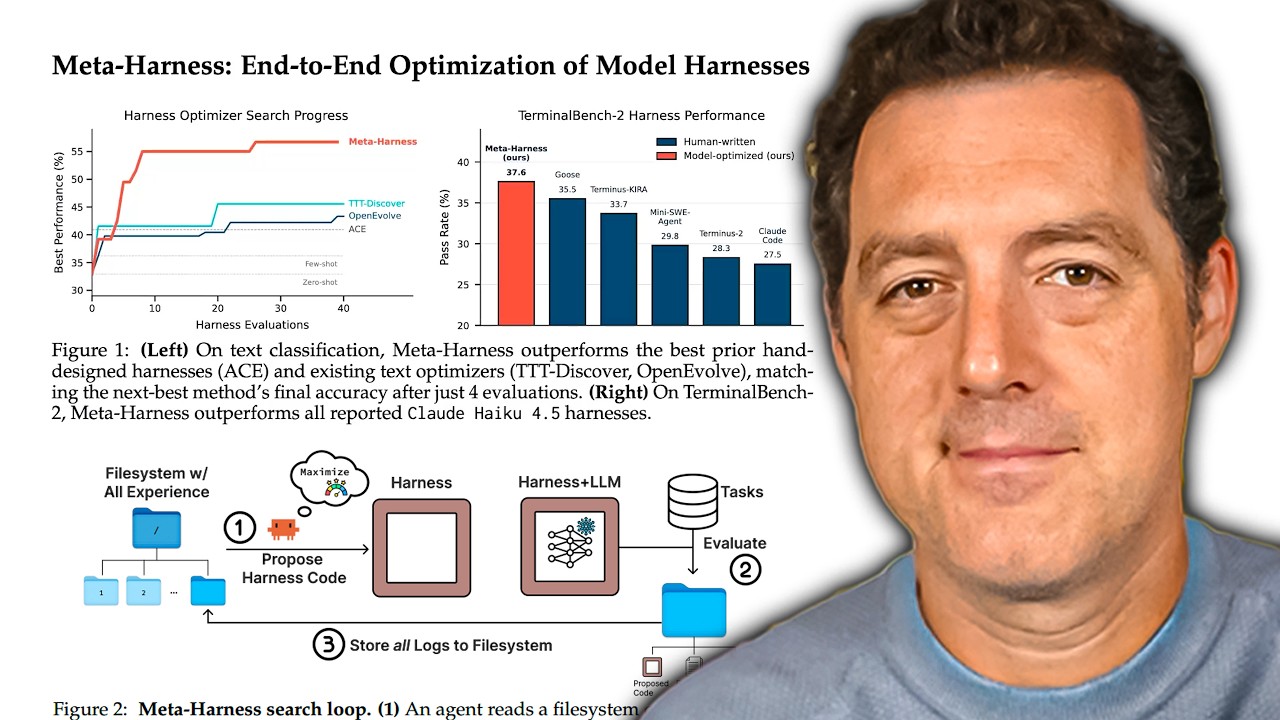

Meta-Harness: AI Optimizes AI Development

Researchers unveil Meta-Harness, a novel AI system that automates harness optimization, leading to faster and more capable LLMs.

Cloudflare Opens Advanced Client-Side Security

Cloudflare now offers its advanced client-side security tools to all users, enhanced by AI for smarter threat detection and fewer false positives.

Chroma's Context-1: Faster, Cheaper AI Search

Chroma Context-1, a 20B parameter AI model, offers frontier-level search performance at a fraction of the cost and latency, using self-editing to manage context efficiently.