Researchers from Stanford, MIT, and KRAFTON have introduced Meta-Harness, a system designed to automate the optimization of Large Language Model (LLM) harnesses. The approach targets the operational code that surrounds a model: an LLM-driven system proposes and improves that code itself, with the aim of making LLM systems more effective and efficient.

The core idea behind Meta-Harness is to have LLMs iteratively propose, evaluate, and log new harnesses, creating a self-improving loop. This matters because, as the paper highlights, the performance of LLM systems depends not only on their model weights but also on their harnesses: the code that determines what information to store, retrieve, and present to the model. Traditionally, these harnesses are designed by hand, a process that is often inefficient and yields suboptimal results.
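To make the term concrete, here is a minimal sketch of what a harness might look like, assuming a toy keyword-overlap design. The class name `KeywordHarness` and its methods are hypothetical illustrations, not code from the paper; they simply mirror the three decisions named above (what to store, retrieve, and present).

```python
from dataclasses import dataclass, field


@dataclass
class KeywordHarness:
    """Toy harness (hypothetical): decides what to store, retrieve, and present."""

    max_items: int = 2  # how many stored notes are shown to the model
    memory: list[str] = field(default_factory=list)

    def store(self, note: str) -> None:
        # Storage policy: keep every non-empty note verbatim.
        if note.strip():
            self.memory.append(note.strip())

    def retrieve(self, query: str) -> list[str]:
        # Retrieval policy: rank notes by the number of words shared with the query.
        words = set(query.lower().split())
        scored = [(len(words & set(n.lower().split())), n) for n in self.memory]
        ranked = sorted((s for s in scored if s[0] > 0), key=lambda s: -s[0])
        return [n for _, n in ranked[: self.max_items]]

    def present(self, query: str) -> str:
        # Presentation policy: format the retrieved notes and the question as a prompt.
        context = "\n".join(f"- {n}" for n in self.retrieve(query))
        return f"Context:\n{context}\n\nQuestion: {query}"
```

Each method encodes a design choice (keep everything, rank by word overlap, show at most `max_items` notes). Choices like these are what a harness search would vary, while the model weights stay the same.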

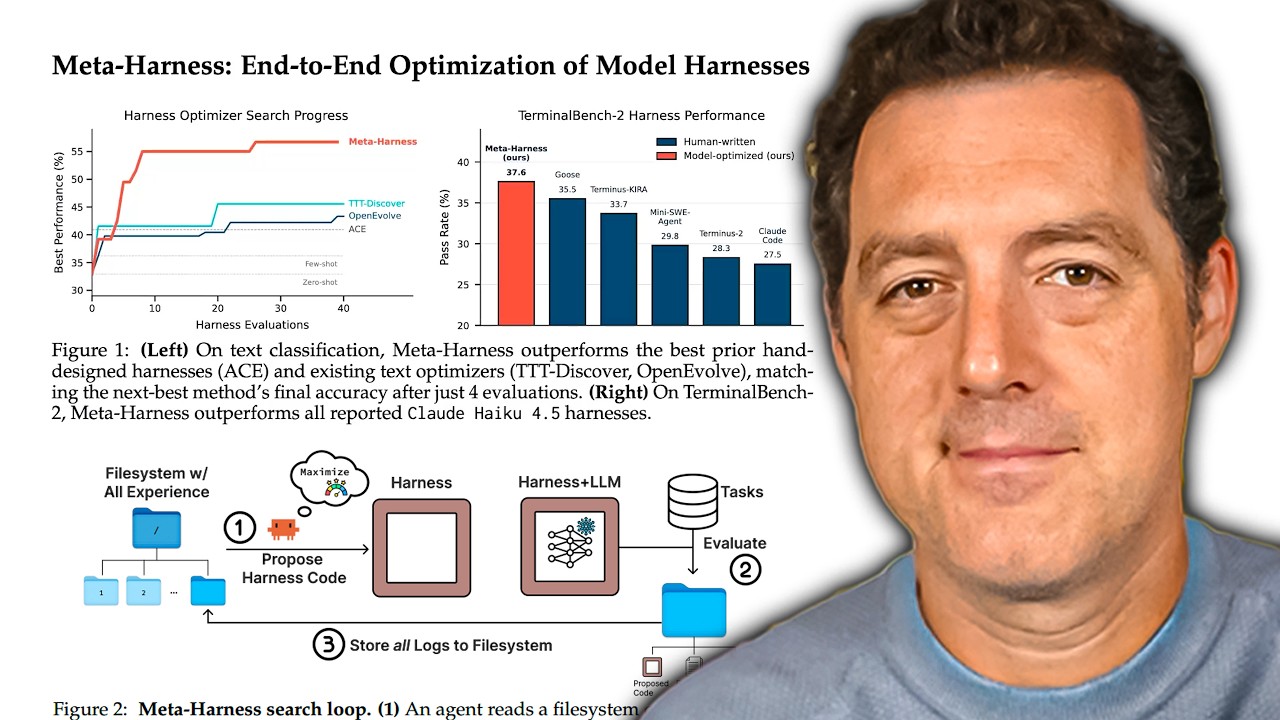

Meta-Harness relies on a coding agent, that is, a language-model-based system that invokes developer tools and modifies code. The agent searches for better harnesses by exploring the large space of possible code changes. For each candidate it tries, the system stores the source code, evaluation scores, and execution traces, and it draws on this record of past attempts to progressively refine later proposals.
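The propose, evaluate, and log cycle can be sketched as a short outer loop. This is an illustrative sketch under stated assumptions, not the paper's implementation: `Candidate`, `search`, `toy_propose`, and `toy_evaluate` are hypothetical names, and the proposer here is a deterministic stand-in for the coding agent so that the example runs without a model.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class Candidate:
    source: str   # harness source code (here just a number written as text)
    score: float  # evaluation score
    trace: str    # execution trace from the evaluation run


def search(
    propose: Callable[[list[Candidate]], str],
    evaluate: Callable[[str], tuple[float, str]],
    iterations: int,
) -> list[Candidate]:
    """Run the propose -> evaluate -> log loop and return the full history."""
    history: list[Candidate] = []
    for _ in range(iterations):
        source = propose(history)        # proposer can inspect all prior candidates
        score, trace = evaluate(source)  # run the candidate and collect its trace
        history.append(Candidate(source, score, trace))  # log code, score, trace
    return history


# Stand-ins (assumptions): the "harness" is a single integer setting k,
# and the toy score is highest when k equals 3.
def toy_propose(history: list[Candidate]) -> str:
    if not history:
        return "1"
    best = max(history, key=lambda c: c.score)
    return str(int(best.source) + 1)  # nudge the best setting seen so far


def toy_evaluate(source: str) -> tuple[float, str]:
    k = int(source)
    score = float(-abs(k - 3))
    return score, f"ran with k={k}, score={score}"


history = search(toy_propose, toy_evaluate, iterations=4)
best = max(history, key=lambda c: c.score)
print(best.source, best.score)
```

In a real system, `propose` would be the coding agent reading prior source code, scores, and traces, and `evaluate` would run the candidate harness on a task. The loop itself has the same shape either way: nothing is discarded, so every later proposal can consult the full history.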