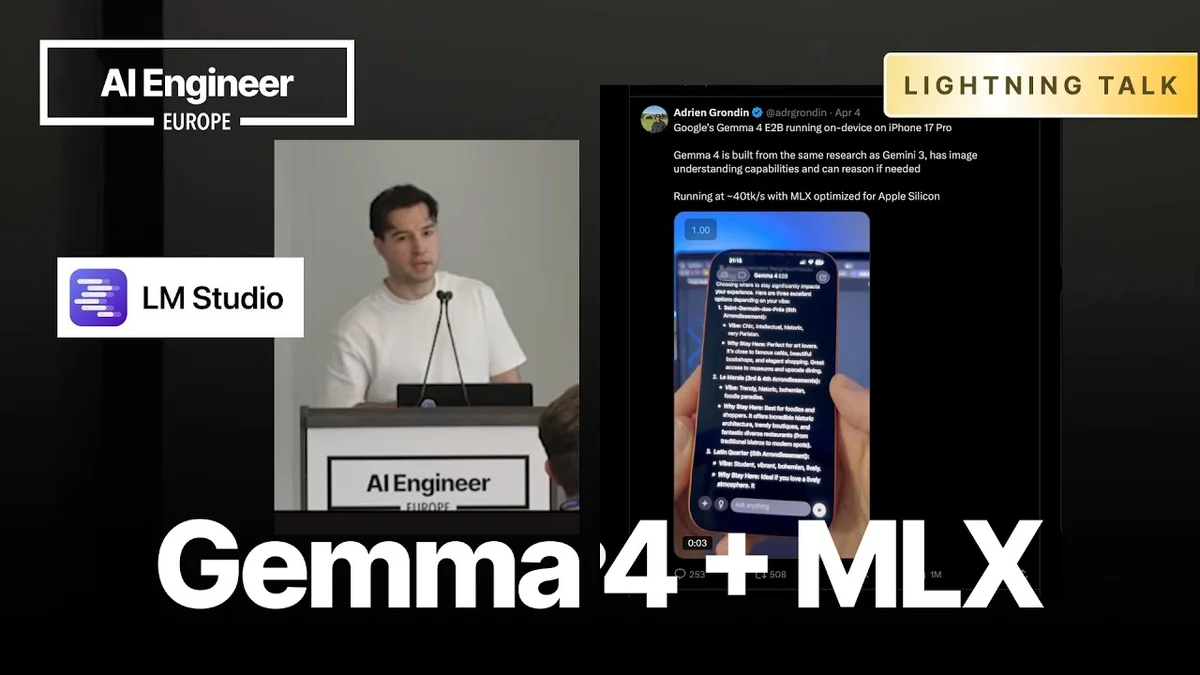

Adrien Grondin, founder of Locally AI, demonstrated how to run Google's Gemma 4 large language model on an iPhone using Apple's MLX framework. The demonstration marks a notable step toward running capable AI models directly on mobile devices, with no need for cloud connectivity for certain tasks.

Adrien Grondin and Locally AI

Locally AI, the startup Grondin founded, focuses on bringing AI models to on-device applications, with the aim of making AI more accessible and efficient for end users. Grondin's work reflects a growing trend of democratizing AI by deploying it on consumer hardware.

Running Gemma 4 on iPhone with MLX

The core of the demonstration revolved around running Gemma 4, a family of open models developed by Google DeepMind, on an iPhone. Grondin showcased how to use the MLX framework, developed by Apple, to optimize these models for Apple Silicon chips found in iPhones and Macs. MLX is designed to facilitate efficient on-device machine learning tasks, including natural language processing and image generation.

Grondin explained that while the full-sized Gemma 4 model can be resource-intensive, quantized versions are available and perform exceptionally well on mobile hardware. He specifically mentioned the utility of 4-bit, 6-bit, and 8-bit quantized models, which offer a balance between performance and accuracy, making them suitable for on-device applications.
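To make the accuracy side of that trade-off concrete, here is a minimal sketch of affine quantization applied to one small group of weights. It is a generic illustration, not MLX's actual implementation; the function names and the sample weights are made up for the example.

```python
from typing import List, Tuple


def quantize_group(values: List[float], bits: int) -> Tuple[List[int], float, float]:
    """Map floats to integer codes in [0, 2**bits - 1] using one scale and offset."""
    levels = 2**bits - 1
    lo, hi = min(values), max(values)
    # Guard against a zero scale when every value in the group is identical.
    scale = (hi - lo) / levels if hi > lo else 1.0
    codes = [round((v - lo) / scale) for v in values]
    return codes, scale, lo


def dequantize_group(codes: List[int], scale: float, offset: float) -> List[float]:
    """Recover approximate floats from the integer codes."""
    return [c * scale + offset for c in codes]


def max_abs_error(values: List[float], bits: int) -> float:
    """Largest round-trip error for this group at the given bit width."""
    codes, scale, offset = quantize_group(values, bits)
    restored = dequantize_group(codes, scale, offset)
    return max(abs(a - b) for a, b in zip(values, restored))


# Illustrative weights only; real model weights come in much larger groups.
sample_weights = [-0.8, -0.31, 0.02, 0.27, 0.55, 0.9]

for bits in (4, 6, 8):
    print(f"{bits}-bit max round-trip error: {max_abs_error(sample_weights, bits):.5f}")
```

Fewer bits mean fewer representable levels, so the round-trip error grows as the bit width shrinks. That is the accuracy cost paid for a smaller model.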

Performance and Quantization

The presentation highlighted the speed and efficiency achieved by running Gemma 4 on an iPhone. Grondin stated that the model can process approximately 40 tokens per second. He noted that this performance is achievable even on older iPhone models, indicating the optimization capabilities of MLX.
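Developers who want to check a throughput figure like this on their own hardware could time the token stream directly. The sketch below is one way to do that; `fake_token_stream` is a hypothetical stand-in for a real model's streaming output, which this example does not call.

```python
import time
from typing import Iterable, Iterator, Tuple


def measure_throughput(token_stream: Iterable[str]) -> Tuple[int, float]:
    """Consume a stream of tokens and return (token count, tokens per second)."""
    start = time.perf_counter()
    count = sum(1 for _ in token_stream)
    elapsed = time.perf_counter() - start
    rate = count / elapsed if elapsed > 0 else float("inf")
    return count, rate


def fake_token_stream(num_tokens: int, seconds_per_token: float) -> Iterator[str]:
    """Hypothetical stand-in for a model: emits tokens at a fixed pace."""
    for i in range(num_tokens):
        time.sleep(seconds_per_token)
        yield f"tok{i}"


count, rate = measure_throughput(fake_token_stream(20, 0.005))
print(f"{count} tokens at ~{rate:.0f} tokens/s")
```

As a rough guide, at 40 tokens per second a 200-token reply takes about 5 seconds to generate.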

The choice of quantization level affects both performance and resource usage. While 8-bit quantization preserves more accuracy, 4-bit quantization stores each weight in roughly half the space, which substantially reduces model size and memory footprint and makes it well suited to devices with limited resources. Grondin suggested that developers experiment with different quantization levels to find the right balance for their specific use cases.
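The size difference can be estimated with simple arithmetic. The sketch below assumes group-wise quantization in which every 64 weights share a scale and an offset stored in 16 bits each; both that layout and the parameter count are assumptions for illustration, not figures from the talk or official Gemma 4 sizes.

```python
def estimated_weight_bytes(
    num_params: int,
    bits: int,
    group_size: int = 64,
    overhead_bits_per_group: int = 32,
) -> float:
    """Rough size of the quantized weights in bytes.

    Assumes each group of `group_size` weights carries
    `overhead_bits_per_group` extra bits for its scale and offset.
    """
    effective_bits = bits + overhead_bits_per_group / group_size
    return num_params * effective_bits / 8


# Hypothetical parameter count, chosen only to make the numbers readable.
HYPOTHETICAL_PARAMS = 2_000_000_000

for bits in (4, 6, 8):
    gib = estimated_weight_bytes(HYPOTHETICAL_PARAMS, bits) / 2**30
    print(f"{bits}-bit weights: ~{gib:.2f} GiB")
```

The estimate covers weights only. A running model also needs memory for activations and the key-value cache, so the real footprint on a phone will be higher.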

MLX and Model Availability

MLX, being an Apple-developed framework, is optimized for Apple Silicon. This allows for efficient execution of models like Gemma 4 across various Apple devices, including iPhones, iPads, and Macs. Grondin pointed to Hugging Face as a primary source for finding and downloading these models, noting that the MLX community is actively contributing new models and updates.

The availability of pre-quantized models on platforms like Hugging Face simplifies the process for developers. Grondin demonstrated how users can easily download the desired model and integrate it into their applications using the MLX framework.
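For experimenting on a Mac before building an iPhone app, the `mlx-lm` Python package offers a similar download-and-run workflow. The sketch below is not the code shown in the demonstration, and an iPhone app would use MLX's Swift bindings instead. The repository id is left as a parameter because the exact name of a Gemma 4 MLX build is not given in the source.

```python
def generate_reply(repo_id: str, user_message: str, max_tokens: int = 200) -> str:
    """Download (or reuse a cached) MLX model from Hugging Face and answer one prompt.

    Requires Apple Silicon and `pip install mlx-lm`. The import is done lazily
    so that defining this function does not need the package installed.
    """
    from mlx_lm import load, generate

    # load() accepts a local path or a Hugging Face repo id.
    model, tokenizer = load(repo_id)

    # Format the message with the model's own chat template.
    messages = [{"role": "user", "content": user_message}]
    prompt = tokenizer.apply_chat_template(messages, add_generation_prompt=True)

    return generate(model, tokenizer, prompt=prompt, max_tokens=max_tokens)


# Example call, with the repo id copied from the model's Hugging Face page:
# print(generate_reply("<hugging-face-repo-id>", "Summarize MLX in one sentence."))
```

The first call downloads the weights, so it can take a while. Later calls reuse the local Hugging Face cache.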

Future Implications

The ability to run advanced LLMs like Gemma 4 directly on smartphones opens up a wide range of possibilities for new AI-powered applications. These include enhanced on-device chatbots, personalized AI assistants, and more sophisticated content generation tools that do not rely on constant cloud connectivity. The trend toward on-device AI also addresses privacy concerns, since data can be processed locally without being transmitted to external servers.

Grondin concluded by encouraging developers to explore the MLX framework and the growing ecosystem of on-device AI models. He provided a QR code for users to access the necessary resources and experiment with running Gemma 4 on their own devices.