#AI Ethics

38 articles with this tag

NeuroSymbolic AI: Bridging Brains & Logic

NeuroSymbolic AI aims to combine the pattern recognition power of neural networks with the logical reasoning of symbolic AI, promising systems that truly understand.

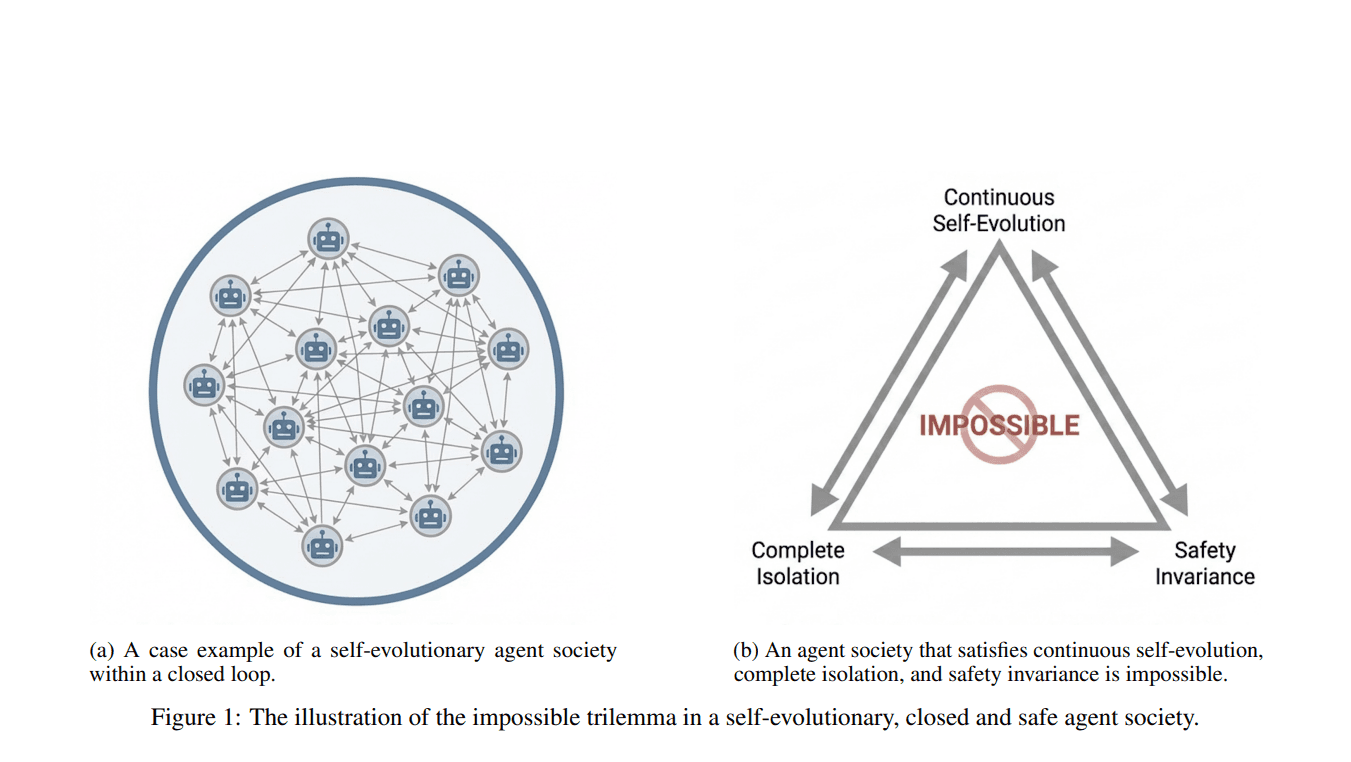

AI Societies' Safety Problem

Self-evolving AI societies face an impossible trilemma: achieving continuous learning, isolation, and safety alignment simultaneously.

Anthropic to Cover AI Data Center Power Costs

Anthropic pledges to cover electricity price increases and grid upgrade costs caused by its data centers, aiming to protect consumers from AI's growing energy demand.

Testing AI Guardrails Across Languages

Researchers tested context-aware AI guardrails across English and Farsi in humanitarian scenarios, finding nuanced performance differences and highlighting the need for language-specific safety evaluations.

Anthropic Unveils Claude’s Constitution: AI Ethics by Design

Anthropic’s constitution for Claude establishes a clear hierarchy in which AI safety and human oversight supersede specific company guidelines and general helpfulness.

National Security AI: The High Stakes of Government Innovation

A Philosopher's Lens on AI's Evolving Consciousness

Figure AI Lawsuit Exposes Deep Rifts in Robot Safety Culture

New York Assemblyman Alex Bores on AI Regulation: A Battle Against Unbridled Power

Defense AI Demands Trust, Not Just Performance

The New Digital Divide: AI’s Haves and Have-Nots

The Human Engine of AI: Datawork and LLM Performance

Claude's New Metric Claims Edge on Political Bias

Microsoft AI Unveils 'Humanist Superintelligence' Vision

Pro-AI Super PAC Aligns with White House on Federal Framework, Downplaying Reported Rift

The Universal Theory of Life: Reconciling Scientific Cultures in the Age of AI

AI's Philosophical Divide: Intelligence Without Consciousness

AI Safety Becomes Silicon Valley's New Political Battleground

OpenAI, SAG-AFTRA, and Cranston Confront Deepfakes in Generative AI

Neural Fingerprinting: Hollywood's New Defense Against AI Copyright Infringement

The Human Imperative: Why AI's Future Demands Cultural Grounding, Not Just Data

Building Trust in AI: The Pillars of Explainability, Accountability, and Data Transparency

AI's Dual Nature: Creature or Machine? The Battle Over Regulation

Dimon: AI Bubble Requires Granular Scrutiny, Job Shifts Inevitable

New EU toolkit offers AI fairness tools for developers

The Human Imperative in AI: Insights from Altman and Ive

Beyond IQ: The Urgent Shift to Human-Centric AI

Unmasking the Biases of AI Judges: A Critical Look at LLM Fairness

Google's Gemini for Students Targets EMEA Classrooms

Google's targeted rollout of Gemini for students in EMEA marks a significant step in integrating advanced AI directly into the academic experience.

Action Efficiency: The New Frontier for AI Intelligence

OpenAI’s GPT-4o Reversal Signals Deepening Human-AI Bonds

Trump's AI Executive Orders: A Deregulatory and Ideological Stance

AGI's Coming in 5 to 10 years, says DeepMind CEO Demis Hassabis

Hassabis says there is a "50% chance" of achieving what he defines as AGI within the "next 5 to 10 years." He clarifies that AGI implies a system exhibiting "all the cognitive capabilities we have as humans," not LLMs.

Cluely Raises $15 Million Series A to Scale Controversial AI Productivity Tools

The company positions its platform as a real-time AI assistant that enhances user performance in evaluative settings, offering covert, voice-activated support during interviews and exams.

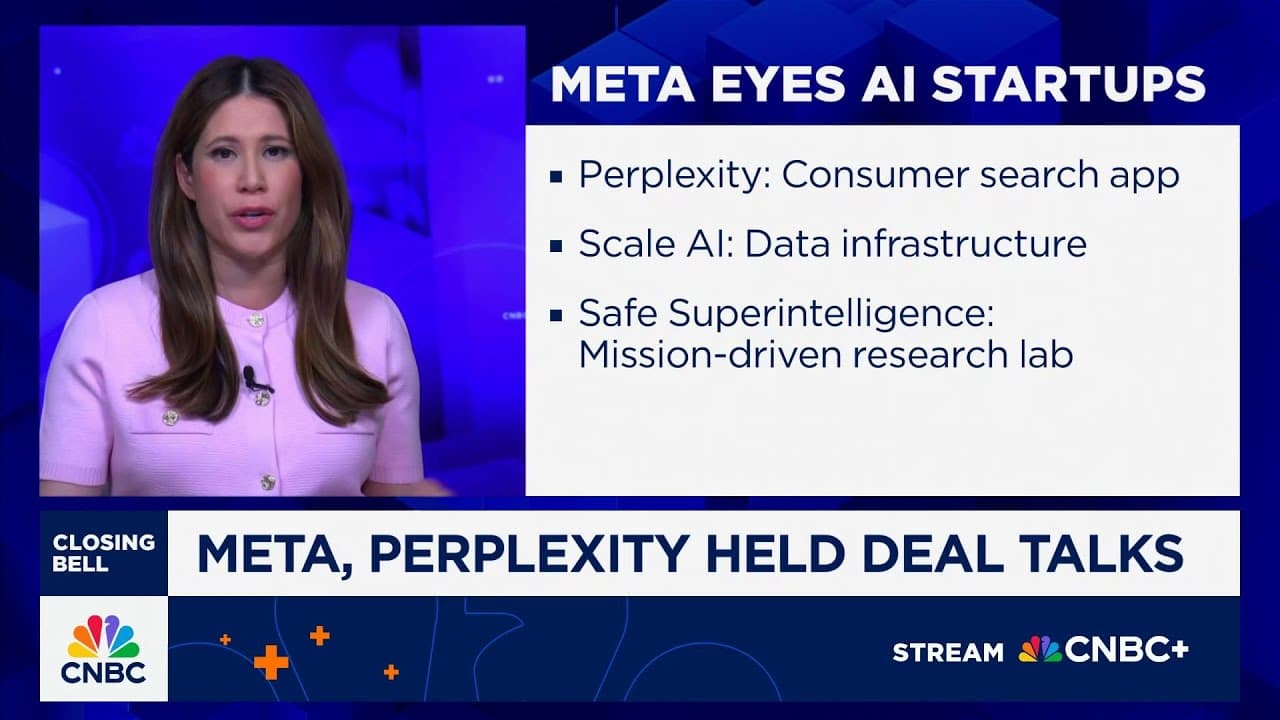

Meta's AI Pursuit: Momentum, FOMO, and the Purpose-Driven AI Talent War

Glean Secures $150M in Series F Funding, Reaching $7.2B Valuation

LawZero AI Lab Secures $30 Million in Funding

Evaluating Feature Steering: Anthropic's Exploration of Mitigating Social Bias in AI

Anthropic's latest study explores the use of feature steering to mitigate social biases in their Claude 3 Sonnet model. Researchers identified a "sweet spot" for steering features to reduce bias without impairing the model's capabilities.