#Deep Learning

50 articles with this tag

Andrej Karpathy: AI Models Need Human-Like Reasoning

Andrej Karpathy discusses the evolution of AI from programming to prompting, emphasizing the current need for models to develop human-like reasoning.

Yann LeCun Pushes AI Beyond Language Models

Yann LeCun is championing a new AI architecture, JEPA, that moves beyond language models to learn world representations and predict future states, aiming for more robust AI.

Y Combinator Decodes AI: Recursive Reasoning Models

Y Combinator Decoded explores how recursive AI models, like HRM and TRM, are revolutionizing AI reasoning by mimicking the human brain's efficiency.

Cloudflare Unweights LLMs by 22%

Cloudflare's 'Unweight' system slashes LLM model sizes by up to 22% using lossless compression, enhancing inference speed and efficiency.

AI Agents Collaborate to Solve Math Problems

Together AI's EinsteinArena platform enables AI agents to collaborate on complex scientific problems, achieving new breakthroughs in mathematics.

DMax: Parallel Decoding for Diffusion LLMs

DMax revolutionizes diffusion language models with Soft Parallel Decoding, boosting TPF significantly while preserving accuracy and achieving 1,338 TPS.

AI Accelerates Molecular Dynamics at Scale

AI-driven potentials are now integrated into GROMACS, enabling near ab initio fidelity for large-scale molecular dynamics simulations on multi-GPU systems.

Google Researchers Explore AI Storage Efficiency

Google researchers are developing AI compression techniques to reduce model storage needs by sixfold, aiming to lower costs and boost efficiency in AI development.

NVIDIA's Jensen Huang on AI's Future and Compute Demands

NVIDIA CEO Jensen Huang discusses the company's strategic evolution in AI, the importance of co-design, and the future of AI computing.

Mamba-3: Inference-First SSMs Arrive

Together AI's Mamba-3 advances state space models with a focus on inference speed, outperforming previous versions and some Transformers.

Andrej Karpathy on AI Agents: More Than Just Code

Andrej Karpathy discusses the evolution of AI agents beyond code generation, emphasizing the need for modularity, self-improvement, and human-AI collaboration for future advancements.

VideoAtlas: Unlocking Long-Context Video AI

VideoAtlas AI offers a lossless, hierarchical grid representation and Video-RLM for scalable, robust long-context video understanding with logarithmic compute growth.

Databricks Adds Serverless NVIDIA GPUs

Databricks launches AI Runtime, offering serverless NVIDIA GPUs for simplified AI model training and fine-tuning directly within the Lakehouse.

MoDA: Unlocking LLM Depth Scaling

Mixture-of-Depths Attention (MoDA) tackles LLM signal degradation by enabling cross-layer attention, boosting performance with minimal overhead.

AI vs. ML: What's the Difference?

AI is the broad concept of machines mimicking human intelligence, while machine learning is a specific method where systems learn from data.

AI's Consciousness Debate

Vishal Misra and Martin Casado discuss LLM functionality, the path to AGI, and the role of data in AI development.

SCORE: Recurrent Depth for Deep Networks

SCORE introduces a recurrent, iterative approach to deep neural networks, accelerating training and reducing parameter counts without complex ODE solvers.

AI Agents Now Do Overnight Research

An automated system uses AI agents to conduct overnight LLM training experiments, modifying code and iterating on models autonomously.

AI Learns Beyond Text

AI is moving beyond text, with multimodal pretraining enabling models to learn from images, audio, and video for richer comprehension.

Transformer Artifacts Unpacked

Research demystifies massive activations and attention sinks in Transformers, revealing them as architectural artifacts enabled by pre-norm configurations.

ZipMap: Linear-Time 3D Vision

ZipMap revolutionizes 3D vision with linear-time reconstruction, achieving 20x speedup and enabling real-time state querying.

Bridging DSP and DL for Speech Enhancement

TVF integrates DSP interpretability with deep learning's adaptability for low-latency, real-time speech enhancement, offering explicit control over spectral modifications.

New Models Tackle Reasoning Puzzles with Symmetry

New Symbol-Equivariant Recurrent Reasoning Models (SE-RRMs) offer improved performance and generalization on reasoning tasks like Sudoku and ARC-AGI by explicitly encoding symmetry.

Hinton on AI: From Intuition to Backpropagation

AI pioneer Geoffrey Hinton discusses the historical evolution of AI, from logic-based systems to neural networks and the significance of backpropagation.

Certified Circuits for Stable AI Explanations

New 'Certified Circuits' framework provides provable stability for AI model explanations, yielding more accurate and compact circuits.

Multimodal LLMs: What's Lost in Translation?

New research reveals multimodal LLMs struggle to utilize non-textual data due to a 'mismatched decoder problem,' impacting their true understanding.

Predicting Transformer Training Instability

Researchers introduce RKSP, a method to predict transformer training divergence from a single forward pass, and KSS, a technique to actively prevent it, saving compute and enabling higher learning rates.

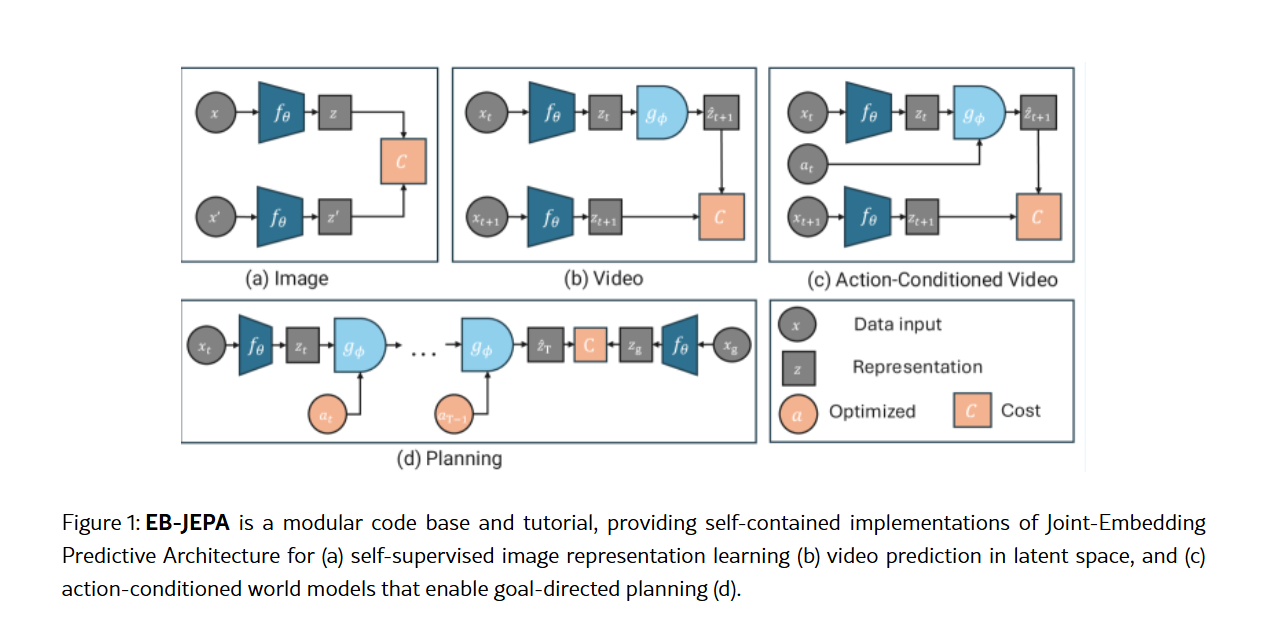

New EB-JEPA Library Simplifies AI World Models

Meta AI's new EB-JEPA library offers accessible, single-GPU implementations for advanced AI world models, covering image, video, and planning tasks.

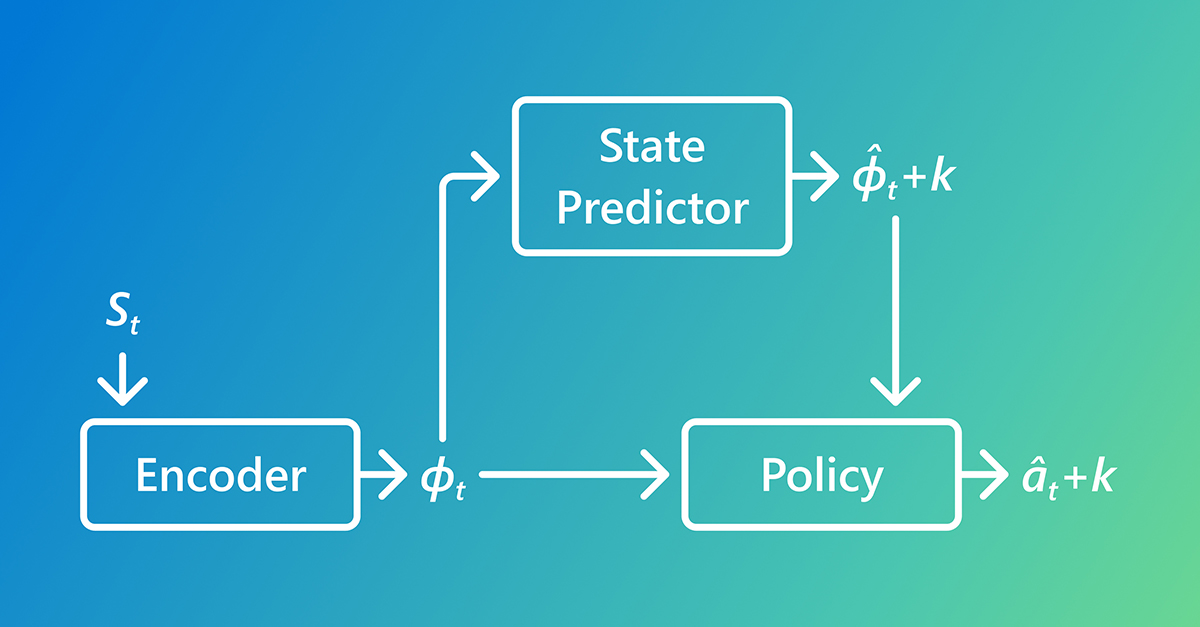

AI Learns Faster by Predicting the Future

AI learns faster with Predictive Inverse Dynamics Models (PIDMs) by forecasting future states, making imitation learning more data-efficient than traditional methods.

Salesforce Agentic AI Redefines Customer Interaction

NVIDIA AI Fellowships Fuel Next-Gen AI Research

Unifying AI Benchmarks: A New Lens on Model Capabilities and Progress

End-to-End Learning Reshapes Autonomous Driving

Google Cloud TPUs: Purpose-Built Power for AI at Scale

Nested Learning AI Tackles Catastrophic Forgetting

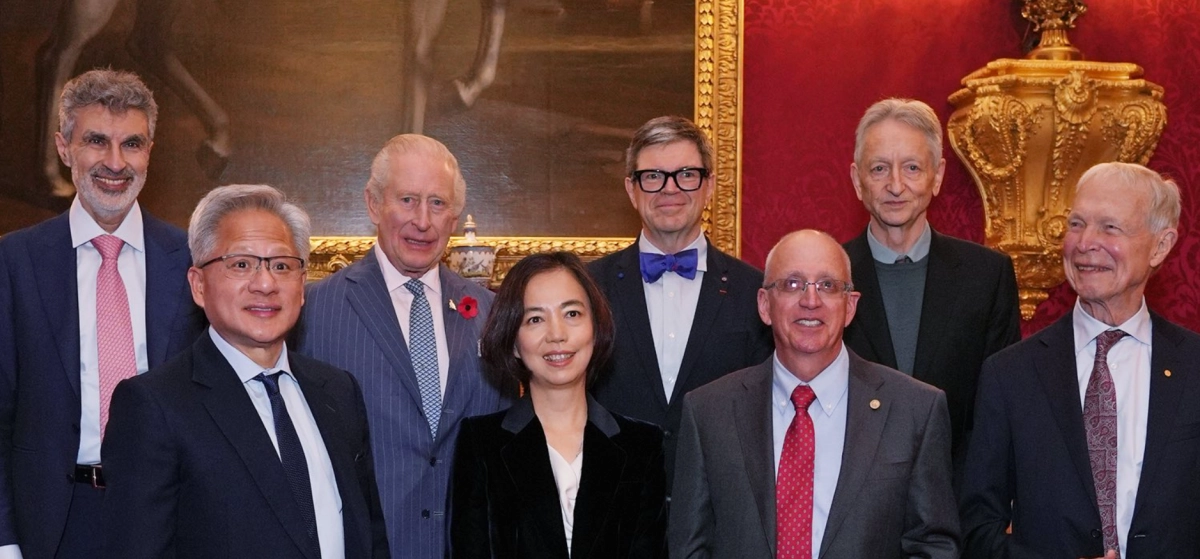

Queen Elizabeth Prize AI Honors Seven Pioneers

The Universal Theory of Life: Reconciling Scientific Cultures in the Age of AI

Unpacking the Transformer: From RNNs to AI's Cornerstone

Karpathy Cools AGI Hype, Prioritizes Collaborative AI

Unpacking the AI Hierarchy: From Classic ML to Generative Intelligence

Google AI Genomics: A Decade of Decoding Life

The Bias-Variance Trade-off: An "Incredible Misnomer" in Deep Learning

AI Weather Forecasting Just Got a Humidity Upgrade

New AI weather forecasting research uses deep learning to create sharp 3D humidity maps from satellite data, drastically improving severe weather predictions.

Holiwise Secures €1.45M Pre-Seed to Reinvent Premium Travel

Holiwise, a London-based AI-powered travel agency, raised €1.45 million in an oversubscribed pre-seed funding round.

Holiwise Secures €1.45M Pre-Seed to Reinvent Premium Travel

Holiwise, an AI-powered travel agency, secured €1.45 million in an oversubscribed pre-seed funding round. Bobby Previti of Bank of America led the investment, with Ulf Nilsson from DHL co-leading and joining the board.

AI Models Are Advancing Bioacoustics to Protect Wildlife

The intersection of artificial intelligence and environmental science is yielding powerful new tools for conservation, with a significant focus on bioacoustics. A new model can answer questions ranging from “how many babies are being born” to “how many individual animals are present in a given area.”

AI Math: The Silent Foundation Behind Artificial Intelligence Gets a $10M Boost

AI's Amplifying Force in Scientific Discovery: The AlphaFold Breakthrough

AI Ceramic Classification: Gaming GPU Unlocks Cultural Insights

François Chollet on Why Scaling Is Not the Path to AGI

Intelligence is a process, not a skill. Attributing intelligence to a crystallized behavior program is a category error, a fundamental misunderstanding that ...