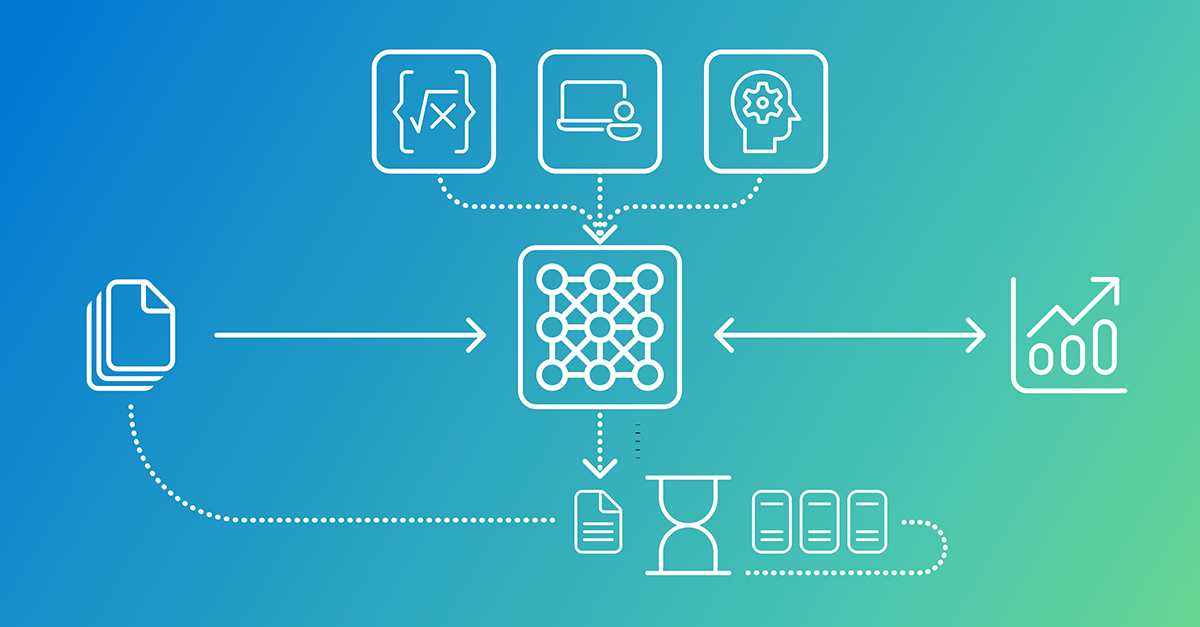

Microsoft Research has unveiled Phi-4-reasoning-vision-15B, a 15-billion-parameter open-weight multimodal model designed for efficient reasoning across vision and language tasks. The compact model, available on platforms such as Hugging Face and GitHub, aims to deliver strong performance without the substantial compute and data demands of larger models. It performs especially well on math and science reasoning and on understanding user interfaces, which positions it as a competitive option among current vision-language models. According to Microsoft Research, the model offers an appealing value proposition by pushing the Pareto frontier between accuracy and computational cost.
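To make the "Pareto frontier" claim concrete: a model sits on the accuracy/cost frontier when no other model is both at least as cheap and at least as accurate (and strictly better on one of the two). The sketch below computes that frontier for a handful of points. The model names, costs, and accuracies are purely hypothetical toy values, not benchmark results for Phi-4-reasoning-vision-15B or any real model, and the `ModelPoint` and `pareto_frontier` names are illustrative only.

```python
from typing import List, NamedTuple


class ModelPoint(NamedTuple):
    name: str
    cost: float      # hypothetical relative compute cost (lower is better)
    accuracy: float  # hypothetical benchmark score (higher is better)


def pareto_frontier(points: List[ModelPoint]) -> List[ModelPoint]:
    """Return the points not dominated by any other point, sorted by cost.

    A point p is dominated if some q has cost <= p.cost and
    accuracy >= p.accuracy, with at least one strict inequality.
    """
    frontier = []
    for p in points:
        dominated = any(
            q.cost <= p.cost
            and q.accuracy >= p.accuracy
            and (q.cost < p.cost or q.accuracy > p.accuracy)
            for q in points
        )
        if not dominated:
            frontier.append(p)
    return sorted(frontier, key=lambda p: p.cost)


# Hypothetical toy data, chosen only to illustrate the idea.
models = [
    ModelPoint("small-A", 1.0, 0.55),
    ModelPoint("small-B", 1.2, 0.50),
    ModelPoint("medium", 3.0, 0.70),
    ModelPoint("large", 10.0, 0.78),
    ModelPoint("large-inefficient", 12.0, 0.72),
]
print([p.name for p in pareto_frontier(models)])
# ['small-A', 'medium', 'large']

# A new compact model that is cheaper AND more accurate than "medium"
# pushes the frontier outward by dominating it.
models_with_new = models + [ModelPoint("new-compact", 2.0, 0.72)]
print([p.name for p in pareto_frontier(models_with_new)])
# ['small-A', 'new-compact', 'large']
```

In this toy setup, "pushing the frontier" simply means that the new point removes a previously optimal model from the frontier, offering a better accuracy-per-cost trade-off at that budget.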

Phi-4-reasoning-vision-15B arrives amid a broader industry trend toward ever larger, more resource-intensive models. Microsoft Research emphasizes a counter-movement focused on smaller, efficient models, building on the success of its earlier Phi family of language models. The new multimodal model demonstrates that strong performance on vision-language tasks, including image captioning, document reading, and interactive screen understanding, can be achieved without massive datasets or complex architectures. The goal is to enable structured reasoning on more accessible hardware.