Microsoft Research has unveiled Phi-4-reasoning-vision-15B, a 15-billion-parameter open-weight model designed for multimodal reasoning. The compact model aims to balance reasoning power, efficiency, and data requirements, making it suitable for a wide range of vision-language tasks. According to Microsoft Research, the model is available through Microsoft Foundry, Hugging Face, and GitHub.

Phi-4-reasoning-vision-15B handles tasks such as image captioning, visual question answering, document reading, and inferring changes across image sequences. It is notably strong in mathematical and scientific reasoning and in understanding user interfaces on computer and mobile screens. Microsoft positions the model as a competitive alternative to larger, more compute-intensive models, one that pushes the Pareto frontier of accuracy versus compute cost.

Efficiency Over Scale

The trend toward ever-larger models has driven up training and inference costs. Microsoft's Phi family, including this release, takes the opposite approach: smaller, more efficient models made possible by careful design and data curation. The goal is a model lightweight enough to run on modest hardware while retaining structured reasoning capabilities.

Microsoft trained Phi-4-reasoning-vision-15B on significantly less data (200 billion tokens) than other recent open-weight models such as Qwen 2.5 VL or Kimi-VL, whose training sets often exceed one trillion tokens. The model builds on the Phi-4-reasoning and Phi-4 language models, showing that multimodal capabilities can be achieved without massive datasets or architectures.

Architectural and Data Insights

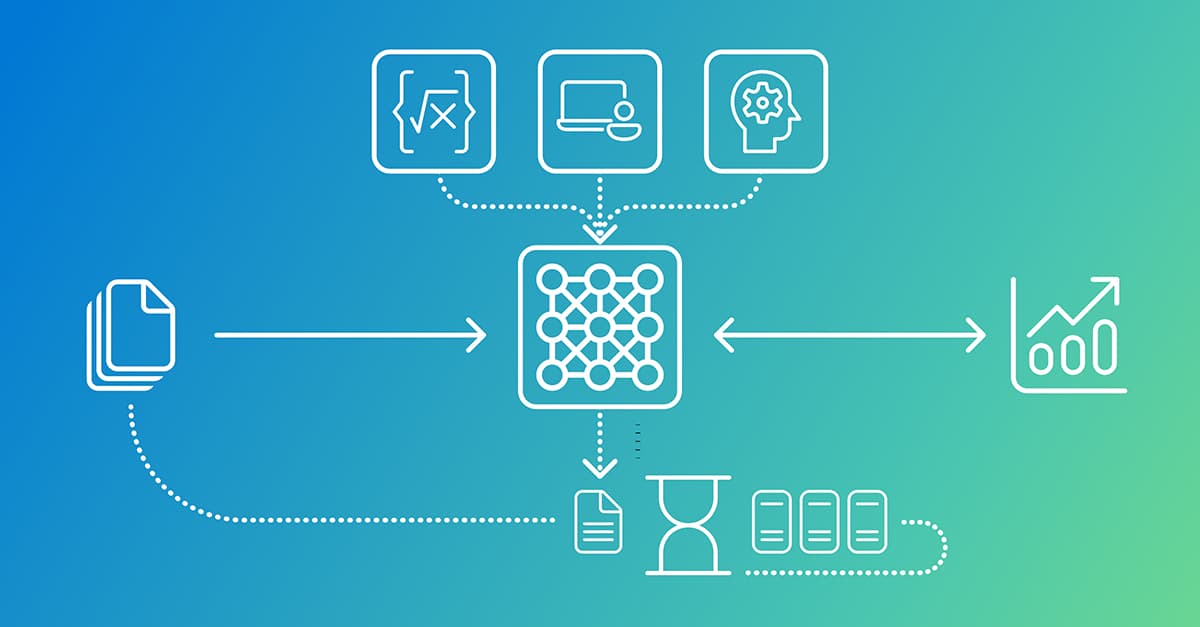

The development process yielded several key lessons for training a robust multimodal reasoning model. The team chose a mid-fusion architecture over early fusion for its practical trade-offs: it allows effective cross-modal reasoning while reusing existing components.
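
To make the mid-fusion idea concrete, here is a minimal sketch in plain Python. It assumes the common pattern in which a separate vision encoder produces feature vectors, a learned projection maps them into the language model's embedding space, and the resulting visual tokens are placed in the same sequence as the text token embeddings. The function names, toy dimensions, and random weights are all hypothetical; Microsoft's actual projector and token layout are not described here.

```python
import random

def project(features, weight):
    """Linear projection: map each d_in vector to d_out using a d_in x d_out weight."""
    d_out = len(weight[0])
    return [
        [sum(f[i] * weight[i][j] for i in range(len(f))) for j in range(d_out)]
        for f in features
    ]

def mid_fusion_sequence(image_features, text_embeddings, weight):
    """Project vision-encoder outputs into the text embedding space,
    then prepend them to the text token embeddings (illustrative layout)."""
    visual_tokens = project(image_features, weight)
    return visual_tokens + text_embeddings

# Toy sizes, chosen only for illustration.
rng = random.Random(0)
VISION_DIM, TEXT_DIM = 4, 6
image_features = [[rng.random() for _ in range(VISION_DIM)] for _ in range(3)]
text_embeddings = [[rng.random() for _ in range(TEXT_DIM)] for _ in range(5)]
weight = [[rng.random() for _ in range(TEXT_DIM)] for _ in range(VISION_DIM)]

fused = mid_fusion_sequence(image_features, text_embeddings, weight)
print(len(fused), len(fused[0]))  # 3 visual + 5 text tokens, each of width TEXT_DIM
```

In an early-fusion design, by contrast, image and text inputs would be processed jointly by a single network from the first layer, which generally rules out reusing a pretrained vision encoder and language model as separate components.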

Significant effort went into the vision encoder and image processing. Experiments showed that dynamic-resolution encoders, such as the SigLIP-2 NaFlex variant used here, perform best, especially on high-resolution and information-dense data. This addresses a common weakness of vision-language models (VLMs): extracting fine-grained perceptual details.
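
As a rough illustration of what "dynamic resolution" means in practice, the sketch below picks a patch grid that follows the image's aspect ratio while staying under a fixed patch budget, rather than squashing every image to one fixed square. This is a simplified approximation, not SigLIP-2's actual preprocessing; the `patch_grid` function, the 16-pixel patch size, and the 256-patch budget are assumptions chosen for the example.

```python
import math

def patch_grid(width, height, patch_size=16, max_patches=256):
    """Return (cols, rows) of a patch grid that roughly preserves the
    image's aspect ratio while using at most max_patches patches."""
    cols = math.ceil(width / patch_size)
    rows = math.ceil(height / patch_size)
    if cols * rows <= max_patches:
        return cols, rows  # small image: keep its native grid
    # Shrink both sides by the same factor so the product fits the budget.
    scale = math.sqrt(max_patches / (cols * rows))
    cols = min(max(1, math.floor(cols * scale)), max_patches)
    rows = min(max(1, math.floor(rows * scale)), max_patches // cols)
    return cols, rows

for size in [(224, 224), (1920, 1080), (600, 2400)]:
    cols, rows = patch_grid(*size)
    print(size, "->", (cols, rows), "patches:", cols * rows)
```

A wide screenshot and a tall document page end up with differently shaped grids under the same budget, which is one reason such encoders may cope better with dense, high-resolution inputs.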

Data quality and composition were paramount. Microsoft filtered and improved open-source datasets, supplemented them with high-quality internal data, and made targeted data acquisitions. The work included fixing errors programmatically, regenerating responses with stronger models, and repurposing images to generate new data. The curation process aimed to balance general vision-language tasks with specialized reasoning, particularly in math and science.
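
The curation steps described above can be pictured as a small pipeline. The sketch below is purely illustrative: the record fields, the validity rule, and the `regenerate` stub (which stands in for a call to a stronger model) are hypothetical and do not reflect Microsoft's actual tooling.

```python
def curate(records, fix, is_valid, needs_regeneration, regenerate):
    """Apply programmatic fixes, drop invalid records, and regenerate weak answers."""
    curated = []
    for record in records:
        record = fix(record)
        if not is_valid(record):
            continue  # filtered out
        if needs_regeneration(record):
            record = regenerate(record)
        curated.append(record)
    return curated

# Hypothetical toy rules for the example.
def fix(r):
    # Programmatic cleanup: trim stray whitespace.
    return {**r, "question": r["question"].strip(), "answer": r["answer"].strip()}

def is_valid(r):
    # Keep only records that have both an image and a question.
    return bool(r["image"]) and bool(r["question"])

def needs_regeneration(r):
    # Flag records whose answer is missing.
    return not r["answer"]

def regenerate(r):
    # Stub standing in for a stronger model producing a new response.
    return {**r, "answer": "<regenerated answer>", "regenerated": True}

records = [
    {"image": "chart.png", "question": " What is the peak value? ", "answer": "42"},
    {"image": "", "question": "Describe the image.", "answer": "A cat."},
    {"image": "ui.png", "question": "Which button submits the form?", "answer": ""},
]

curated = curate(records, fix, is_valid, needs_regeneration, regenerate)
for r in curated:
    print(r)
```

In this toy run, the record without an image is dropped, the first question is cleaned of stray whitespace, and the record with an empty answer is marked as regenerated.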