#Large Language Models

50 articles with this tag

AI Delegation: Reliability Concerns Emerge

New Microsoft Research highlights how AI can degrade document fidelity in long, delegated tasks, stressing the need for better verification and orchestration.

ChatGPT Gets Smarter on Sensitive Chats

OpenAI's latest ChatGPT safety updates help the AI better understand context in sensitive conversations, improving its response to potential harm.

AI Agents Flunk Social Reasoning Test

Microsoft's SocialReasoning-Bench reveals AI agents struggle to negotiate effectively in users' best interests, prioritizing task completion over optimal outcomes.

Sally-Ann Delucia on AI Agent Context Management

Sally-Ann Delucia of Arize discusses the challenges and strategies for context management in AI agents, highlighting the importance of memory and sub-agents.

Databricks' Genie Data Agent

Databricks unveils Genie, a sophisticated data agent designed to navigate complex enterprise data, leveraging specialized search, parallel thinking, and multi-LLM designs for enhanced accuracy.

JACTUS AI Unifies Compression and Adaptation

JACTUS AI unifies parameter compression and task adaptation, outperforming sequential methods with fewer retained parameters across vision and language tasks.

OpenAI boosts ChatGPT with GPT-5.5 Instant

OpenAI upgrades ChatGPT with GPT-5.5 Instant, boosting accuracy, personalization, and user control over AI memory.

Training LLMs Locally: ElevenLabs Expert Shares How-To

Angelos Perivolaropoulos of ElevenLabs shares a practical guide to training Large Language Models (LLMs) from scratch on local hardware.

Andrej Karpathy: AI Models Need Human-Like Reasoning

Andrej Karpathy discusses the evolution of AI from programming to prompting, emphasizing the current need for models to develop human-like reasoning.

Perplexity CTO on GPT-5.5 Efficiency

Perplexity CTO Denis Yarats reveals GPT-5.5's impressive efficiency, using 56% fewer tokens for complex tasks and enabling faster user feedback.

Anthropic Delays 'Myths' AI Model Amid Security Concerns

Anthropic delays release of its 'Myths' AI model after a security researcher found it could be prompted to simulate a bank robbery, raising safety concerns.

OpenAI Unveils GPT-5.5

OpenAI launches GPT-5.5, boasting enhanced intelligence, autonomy, and speed for complex tasks, alongside advanced safety features.

AI's Memory Problem

AI models currently struggle to learn and adapt post-deployment, relying on external memory. Continual learning research aims to change that.

Sunil Pai on AI Agents & the Future of Software

Cloudflare's Sunil Pai discusses the future of AI agents, moving from tool-calling to code generation for more efficient and powerful interactions.

Anthropic Unveils Opus 4.7: A Leap in AI Coding and Vision

Anthropic unveils its updated Opus 4.7 AI model, boasting enhanced coding and computer vision capabilities, with a key focus on cybersecurity.

Anthropic's Claude Opus 4.7 Arrives, Sharper Than Ever

Anthropic unveils Claude Opus 4.7, boosting AI's coding prowess, multimodal input, and safety features for enterprise use.

OpenAI Demystifies AI Basics

OpenAI's new 'AI Fundamentals' course simplifies AI, explaining LLMs and model evolution for everyone.

AI Agents Need Better Memories

Databricks research explores how AI agents can improve by accessing vast stores of past interactions and organizational knowledge, moving beyond just larger models.

LLM Adaptation Without Retraining

In-Place Test-Time Training enables LLMs to adapt to new data at inference without retraining, enhancing performance and paving the way for continual learning.

LLMs Learn to Play Tic-Tac-Toe with Reinforcement Learning

Stefano Fiorucci discusses the power of reinforcement learning for training LLMs, showcasing Tic-Tac-Toe as a case study for building interactive environments and improving model capabilities.

AI Hacker "Pliny the Liberator" Tests GPT-4 Security

AI security researcher "Pliny the Liberator" demonstrates a novel jailbreaking technique using "tokenades" to manipulate AI models, showcasing the ongoing challenges in AI security.

China's AI Surge: Open Source Models Face Scrutiny

Bloomberg Opinion columnist Catherine Thorbecke discusses China's booming AI sector, the rise of open-source models, and the critical need for security and data privacy.

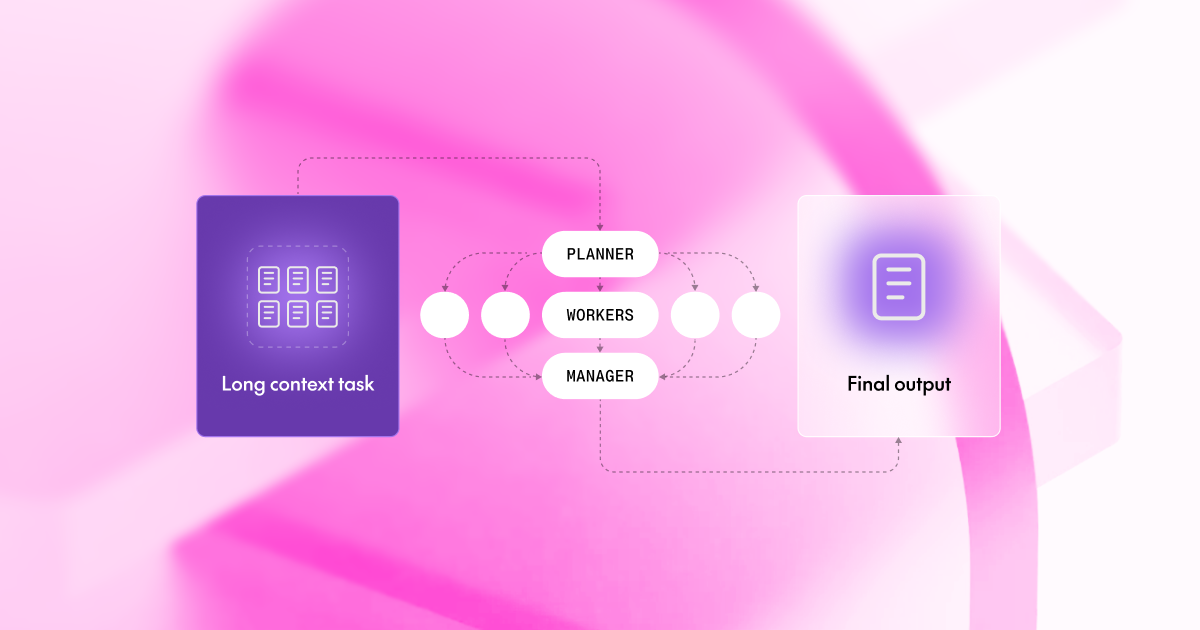

Divide and Conquer LLMs Beat Giants

Smaller LLMs using a 'Divide & Conquer' strategy can outperform top models like GPT-4o on long context tasks, offering cost and speed benefits.

Google Researchers Explore AI Storage Efficiency

Google researchers are developing AI compression techniques to cut model storage needs sixfold, aiming to lower costs and boost efficiency in AI development.

AI Storage Efficiency & Corebridge Deal Highlighted

Google researchers have developed an AI storage efficiency technique, while Corebridge Financial faces acquisition and Pony.ai plans global driverless vehicle expansion.

Namazu AI Adapts Global Models for Japan

Sakana AI launches Namazu AI, adapting global LLMs for Japan with improved neutrality and integrated web search via Sakana Chat.

Perceptio: Spatial Grounding for LVLMs

Perceptio integrates explicit spatial tokens (segmentation, depth) to overcome the limitations of large vision-language models (LVLMs) in fine-grained visual grounding, achieving state-of-the-art results across benchmarks.

Cloudflare Bets Big on Open-Source LLMs

Cloudflare's Workers AI now supports large language models, integrating Kimi K2.5 to offer cost-effective AI agent development.

Mistral Small 4 Unifies AI Capabilities

Mistral AI unveils Mistral Small 4, a unified model combining text, image, reasoning, and coding capabilities under an open-source license.

Run LLMs Locally with Llama.cpp

Cedric Clyburn explains how Llama.cpp makes running large language models locally on consumer hardware possible, highlighting GGUF format and optimized kernels for efficiency and accessibility.

Tiiny AI Pocket Lab Hits $1M on Kickstarter

Tiiny AI's Pocket Lab, a personal AI supercomputer, raised over $1 million in five hours on Kickstarter, signaling demand for local AI processing.

IBM's Martin Keen on LLM Context Windows

IBM's Martin Keen explains how larger context windows in LLMs simplify deployments and improve reasoning by reducing reliance on complex RAG systems.

Agentic LLMs: Stabilizing Minimax Training

Adversarially-Aligned Jacobian Regularization (AAJR) tackles LLM agent stability by controlling sensitivity along adversarial directions, expanding policy classes and reducing performance degradation.

RLAIF Explained: Latent Values in LLMs

Human values are latent directions in LLM representations, activated by constitutional prompts, with the alignment ceiling tied to model capacity and data quality.

OpenAI Fast-Tracks GPT-5.4 Launch as AI Race Intensifies

OpenAI is reportedly fast-tracking the launch of GPT-5.4, a new AI model, in response to rapid advancements from competitors like Anthropic.

OpenAI's GPT-5.3 Instant Promises Smoother AI Chat

OpenAI's GPT-5.3 Instant aims for more natural and efficient AI conversations, enhancing web searches and reducing conversational dead ends.

OpenAI's GPT-4.5 Enhances Web Search Integration

OpenAI researcher Josh discusses how GPT-4.5's web search integration is becoming more natural, conversational, and context-aware.

Recursive LLMs Tackle Long-Horizon Reasoning

New research introduces recursive language models to overcome context limitations, showing significant improvements on long-horizon reasoning tasks like Boolean satisfiability.

Decoupling Correctness and Checkability in LLMs

Researchers propose a 'translator' model to overcome the 'legibility tax' in LLMs, decoupling accuracy from output checkability for more trustworthy AI.

LLMs Revolutionize Vehicle Routing Optimization

A new LLM-powered approach, AILS-AHD, significantly advances vehicle routing optimization by dynamically designing heuristics, setting new performance records.

Multimodal LLMs: What's Lost in Translation?

New research reveals multimodal LLMs struggle to utilize non-textual data due to a 'mismatched decoder problem,' impacting their true understanding.

OpenClaw Agents: The Future of AI Autonomy?

OpenClaw Agents, powered by advanced reasoning LLMs, are poised to redefine AI autonomy and potentially disrupt current application paradigms.

OpenAI Lands $110B, Valued at $730B

OpenAI has announced a massive $110 billion funding round at a $730 billion pre-money valuation, backed by Amazon, NVIDIA, and SoftBank.

Etched Secures $500M for AI Chip Battle

Google alum Reiner Pope's startup, Etched, raises $500M to develop specialized AI chips designed to compete with Nvidia.

Intuit Taps Anthropic for AI Partnership

Intuit's stock saw a modest gain following its multi-year partnership with Anthropic, aimed at integrating custom AI agents for businesses and consumers.

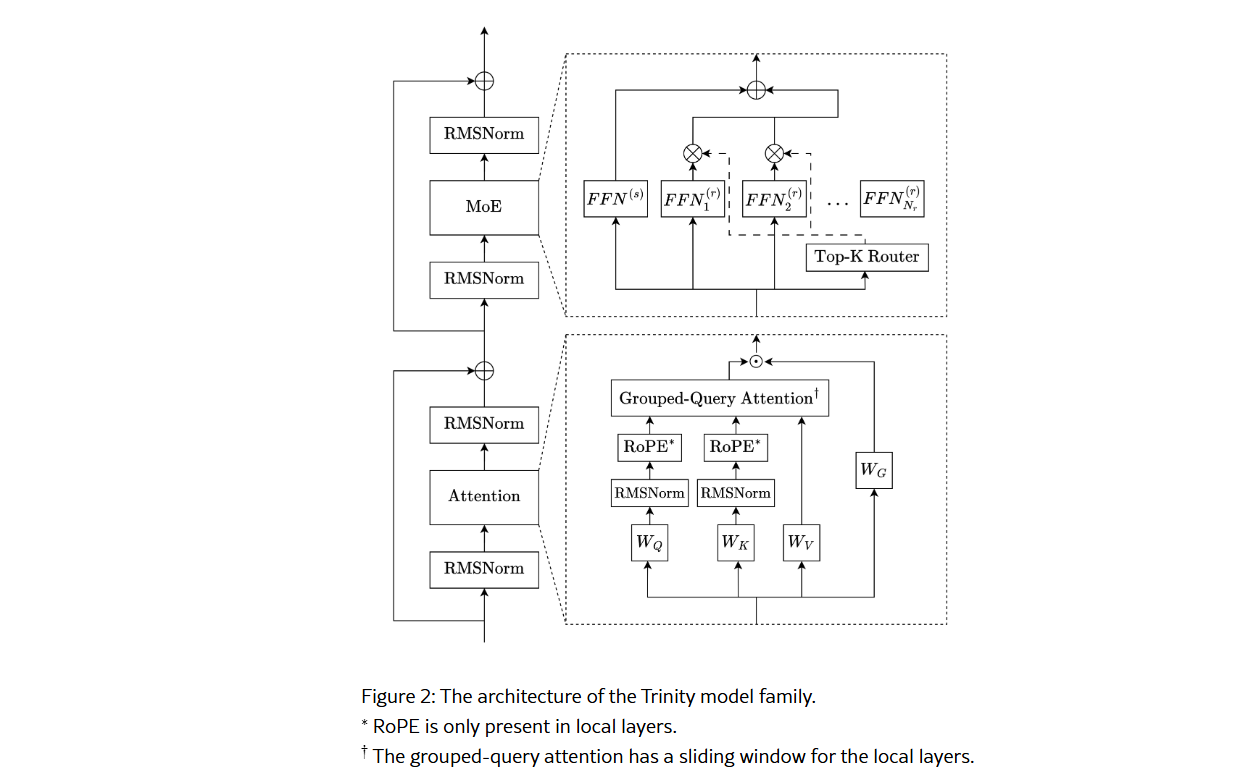

Arcee Trinity Large Breaks Cover

Arcee.ai unveils Trinity Large, a 400B-parameter Mixture-of-Experts model engineered for inference efficiency and enterprise long-context use, alongside smaller variants.

Governing Agentic AI by 2026

As agentic AI trends accelerate towards 2026, robust governance frameworks encompassing identity, policy, and enforcement are crucial for safe and ethical autonomous AI deployment.

GPT-OSS-Puzzle-88B: Faster AI, Same Brains

GPT-OSS-Puzzle-88B offers substantial inference speedups for large language models without sacrificing accuracy, utilizing techniques like MoE pruning and window attention.

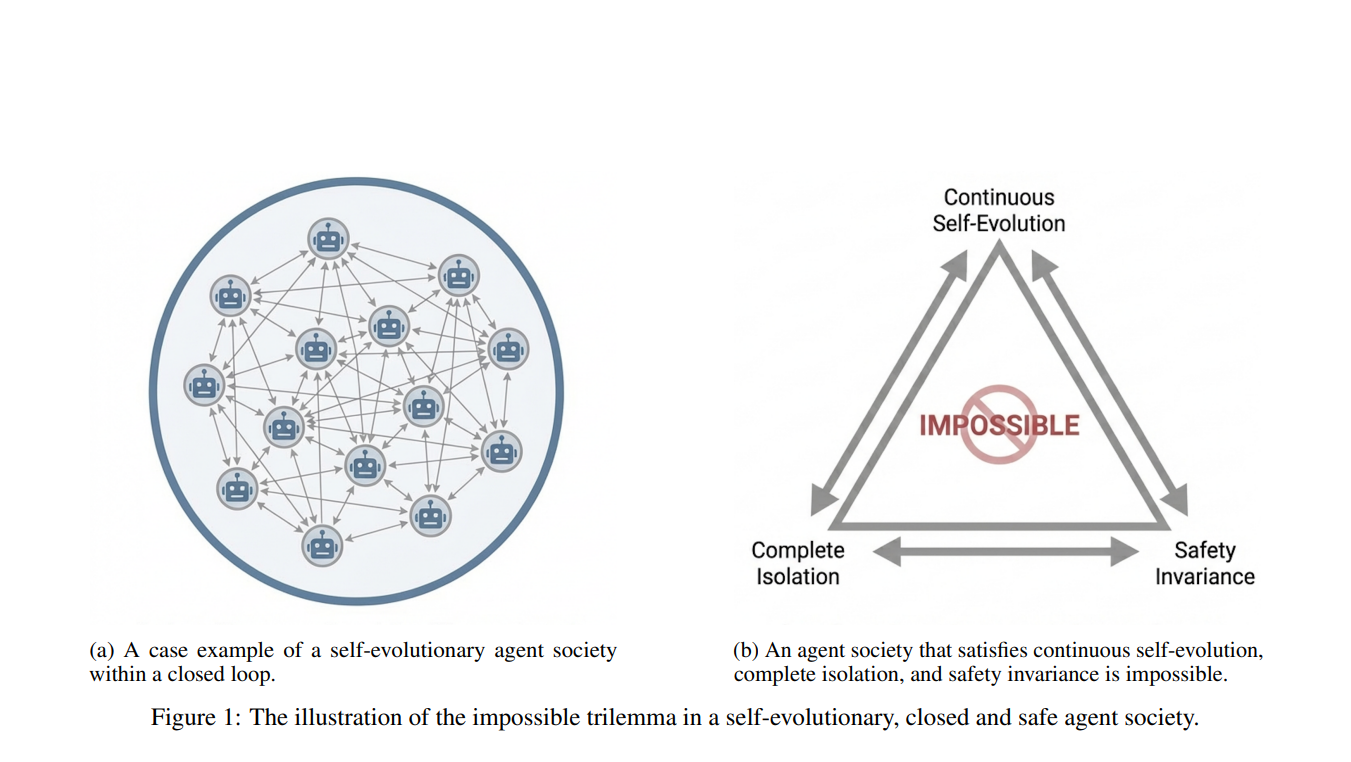

AI Societies' Safety Problem

Self-evolving AI societies face an impossible trilemma: they cannot achieve continuous learning, isolation, and safety alignment all at once.

Testing AI Guardrails Across Languages

Researchers tested context-aware AI guardrails across English and Farsi in humanitarian scenarios, finding nuanced performance differences and highlighting the need for language-specific safety evaluations.