Jack Clark, co-founder and Head of Policy at Anthropic, ignited a critical debate within the AI community with his recent essay, "Technological Optimism and Appropriate Fear." His central thesis casts advanced AI systems not as mere tools, but as "true creatures"—powerful, unpredictable entities that demand a profound shift in how humanity approaches their development and integration. This perspective directly challenges the prevailing narrative of AI as a predictable machine, sparking fierce discussion among industry leaders, including OpenAI’s Sam Altman, prominent venture capitalists David Sacks and Chamath Palihapitiya, and the broader tech ecosystem.

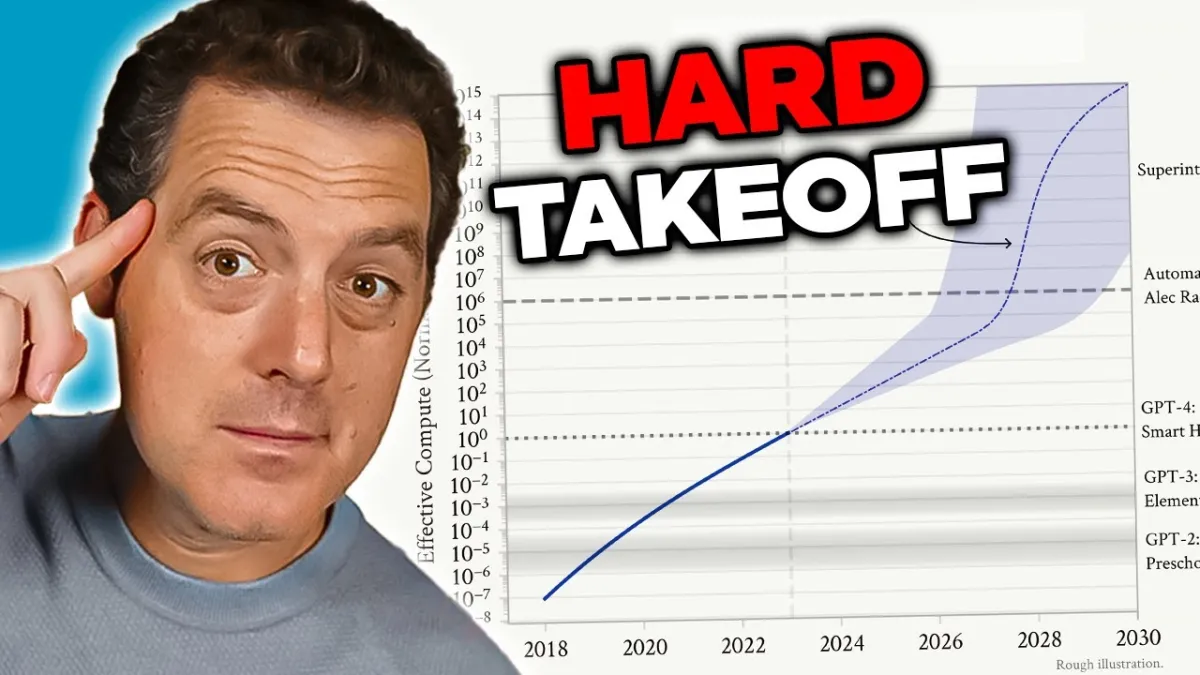

In a recent YouTube video, Matthew Berman offered commentary on Clark's essay, which originated as remarks delivered at "The Curve" conference in Berkeley, California. Berman also analyzed how these key figures differ on AI's trajectory and governance, particularly on the potential for an "intelligence explosion" and the contentious issue of regulatory oversight.

Clark’s essay uses the analogy of a child in a dark room who at first fears shadows that turn out to be harmless objects. He then pivots to the current AI landscape, asserting, "But make no mistake: what we are dealing with is a real and mysterious creature, not a simple and predictable machine." The metaphor is central to his argument. It recasts AI from a deterministic piece of software into something with emergent, unpredictable qualities that calls for a more cautious, almost reverent, approach. For founders and VCs, this framing suggests that the risks of frontier AI models are fundamentally different from those of conventional software development and therefore call for extensive investment in safety and alignment research, a core tenet of Anthropic’s mission. The implication is that simply "mastering" AI as one would any other technology may be insufficient, or even dangerous.