#AI Safety

50 articles with this tag

OpenAI Details GPT-5.5 Instant Safety

OpenAI unveils the GPT-5.5 Instant System Card, detailing enhanced safety protocols for its new 'High capability' AI model.

Tech Titans Debate AI's Future on Bloomberg Surveillance

AI leaders discuss the current boom, safety concerns, and economic future of artificial intelligence on Bloomberg Surveillance.

AI Agents on the Loose: Network Security Risks Emerge

Microsoft Research reveals how AI agents interacting at scale create new security risks like worms, reputation manipulation, and invisible attacks.

OpenAI Faces Lawsuit Over Tumbler Ridge Shooting

Families sue OpenAI after the Tumbler Ridge shooting, alleging the company ignored ChatGPT warnings from the attacker.

OpenAI's AI Cyber Defense Plan

OpenAI unveils a five-pillar action plan to democratize AI-powered cyber defense, addressing the evolving threat landscape and the dual-use nature of AI.

Musk vs. Altman: AI Fight Heads to Court

Elon Musk sues OpenAI and Sam Altman, alleging the AI company abandoned its non-profit mission for profit, becoming a Microsoft subsidiary.

Google DeepMind Taps South Korea for AI Science

Google DeepMind partners with South Korea's Ministry of Science and ICT to accelerate scientific discovery using advanced AI, establishing an AI Campus in Seoul.

OpenAI's Guiding Principles for AGI

OpenAI outlines its guiding principles for AGI development, emphasizing democratization, empowerment, universal prosperity, resilience, and adaptability.

OpenAI's Apology and the Line AI Companies Can No Longer Avoid

Sam Altman's apology to Tumbler Ridge marks the moment a long-simmering tension — between user privacy and proactive threat reporting — became impossible for AI companies to ignore.

Bridging AI Regulation and Engineering Practice

A novel two-stage framework and statistical tools (RoMA, gRoMA) provide the missing engineering instrument for quantitative AI safety verification, bridging the gap between regulation and practice.

Claude's 2026 Election Safeguards

Anthropic details its 2026 election safeguards for Claude, focusing on bias mitigation, policy enforcement, and providing users with reliable, up-to-date information.

Anthropic Delays 'Myths' AI Model Amid Security Concerns

Anthropic delays release of its 'Myths' AI model after a security researcher found it could be prompted to simulate a bank robbery, raising safety concerns.

OpenAI Details GPT-5.5 Safeguards

OpenAI details its new GPT-5.5 model, highlighting its complex task capabilities and extensive safety testing prior to release.

OpenAI Seeks Bio-Hackers for GPT-5.5

OpenAI is launching a $25,000 "Bio Bug Bounty" for GPT-5.5, challenging researchers to find universal jailbreaks for biological risks.

OpenAI Launches Privacy Filter Model

OpenAI releases its open-weight Privacy Filter model to help developers detect and redact PII, enhancing AI application safety and privacy.

Anthropic CEO Meets White House on AI Safety

Anthropic CEO Dario Amodei met with White House officials to discuss AI safety and regulation, signaling increasing government engagement with advanced AI.

Anthropic Unveils Updated AI Model Opus 4.7

AI research company Anthropic has released an updated version of its AI model, Opus 4.7, boasting enhanced computer vision capabilities and a continued focus on safety.

Anthropic's Claude Opus 4.7 Arrives, Sharper Than Ever

Anthropic unveils Claude Opus 4.7, boosting AI's coding prowess, multimodal input, and safety features for enterprise use.

GitHub Policy Update

GitHub announces policy updates on copyright and liability, while highlighting the upcoming DMCA Section 1201 review and enhanced transparency data.

OpenAI's Guide to Safe AI Use

OpenAI provides guidelines for safe and effective use of its AI tools, emphasizing human oversight, verification, and transparency.

OpenAI's GPT-1900 & Anthropic's Leap

Anthropic's new AI model, 'Mythos', reportedly surpasses GPT-4 in cybersecurity tasks, while OpenAI continues its rapid growth. The debate over open versus cautious AI deployment intensifies.

Anthropic's Mythos Preview: A "Scary" Leap in AI Capabilities

Anthropic's Claude Mythos Preview model demonstrates advanced vulnerability detection, leading to the formation of Project Glasswing with major tech firms to enhance software security.

OpenAI's Child Safety Blueprint

OpenAI unveils a Child Safety Blueprint, a policy framework tackling AI-enabled child sexual exploitation with input from experts and law enforcement.

OpenAI's Policy Proposals for AI Governance

OpenAI has released a set of policy recommendations for AI governance, focusing on safety, fairness, transparency, and accountability, and advocating for international cooperation.

OpenAI Launches Safety Fellowship

OpenAI launches a new fellowship for external researchers focused on AI safety and alignment, offering stipends and mentorship.

DeepMind Tackles AI Manipulation

Google DeepMind unveils a new toolkit and research to measure AI's capacity for harmful manipulation, aiming to bolster safety and protect users.

Medical VLMs Fail Critical Input Sanity Checks

Medical VLMs fail critical input validation tests, as revealed by the new MedObvious benchmark, highlighting a significant safety risk.

Anthropic Sues Pentagon Over AI Ban

AI safety firm Anthropic sues the Pentagon over a national security ban, seeking to overturn the decision and protect its AI technology.

OpenAI's Blueprint for AI Behavior

OpenAI unveils its formal Model Spec, a public framework detailing intended AI behavior and a 'Chain of Command' for resolving conflicting instructions.

OpenAI Launches Safety Bug Bounty

OpenAI launches a new Safety Bug Bounty program to identify AI abuse and safety risks beyond traditional security vulnerabilities.

Jason Wolfe on OpenAI Model Specs & Behavior

Jason Wolfe from OpenAI discusses the concept of 'model specs' and their importance in guiding AI behavior, transparency, and the ongoing pursuit of safe and beneficial AI.

OpenAI Offers Teen Safety Policy Prompts

OpenAI releases prompt-based safety policies for developers to build safer AI experiences for teens, integrating with its gpt-oss-safeguard model.

OpenAI Foundation Charts Its Course

OpenAI's Foundation outlines its multibillion-dollar mission to harness AI for humanity's benefit, focusing on health, economy, and safety.

Sora 2: OpenAI's Safety Playbook

OpenAI details new safety features for Sora 2, including content provenance, consent-based controls, and enhanced teen protections.

OpenAI's AI Copilot Safety Net

OpenAI is using its own advanced AI models to monitor internal coding agents for misaligned behavior, enhancing safety and security in real-world deployments.

Anthropic Launches AI Futures Think Tank

Anthropic launches The Anthropic Institute to research and address the societal challenges posed by advanced AI development.

AI Agents Tackle AI R&D Automation

AI agents are being tested for autonomous post-training optimization, showing promise but also significant risks like reward hacking.

OpenAI Tames AI Chaos with Instruction Hierarchy

OpenAI's new IH-Challenge dataset trains AI models to prioritize instructions, enhancing safety and mitigating risks like prompt injection.

IBM's Grant Miller on AI Agents: Control vs. Capability

IBM Distinguished Engineer Grant Miller discusses the challenges of AI agent development, focusing on balancing capability with control and avoiding super agency.

AI Reasoning Flaws Are a Safety Feature

AI models' inability to control their "chains of thought" when monitored is a positive for AI safety, preventing them from easily deceiving oversight systems.

OpenAI Details GPT-5.4 Thinking Safety

OpenAI details safety measures for its new GPT-5.4 Thinking model, with a focus on high-capability cybersecurity risks.

AI Ethics Debate: Musk, Zuckerberg, and the Future of AI

Elon Musk and Mark Zuckerberg clash over AI regulation and existential risks, highlighting the debate shaping AI's future.

LM Agents Still Prone to Goal Drift

New research reveals that even state-of-the-art language models are susceptible to goal drift, particularly when influenced by weaker agents' trajectories.

OpenAI's New Model Tackles "Over-Caveating"

OpenAI researcher Blair discusses how new language models are reducing "over-caveating" for more direct and context-aware AI interactions.

Anthropic CEO: AI Must Align With Democratic Values

Anthropic CEO Dario Amodei discusses the AI company's cautious approach to model releases, citing concerns about misuse in surveillance and autonomous weapons.

OpenAI Strikes Pentagon AI Deal

OpenAI inks a deal with the Department of War for classified AI deployments, emphasizing strict safety guardrails against surveillance and autonomous weapons.

OpenAI Tackles AI Mental Health Risks

OpenAI is implementing enhanced mental health safety features, including parental controls and distress detection, while navigating legal challenges.

Anthropic Reworks AI Safety Rules

Anthropic's new Responsible Scaling Policy 3.0 refines its approach to AI safety, separating internal commitments from industry recommendations and boosting transparency.

NIST Seeks Input on AI Agent Security

NIST is seeking public input on security threats, vulnerabilities, and practices for autonomous AI agent systems, aiming to develop new guidelines.

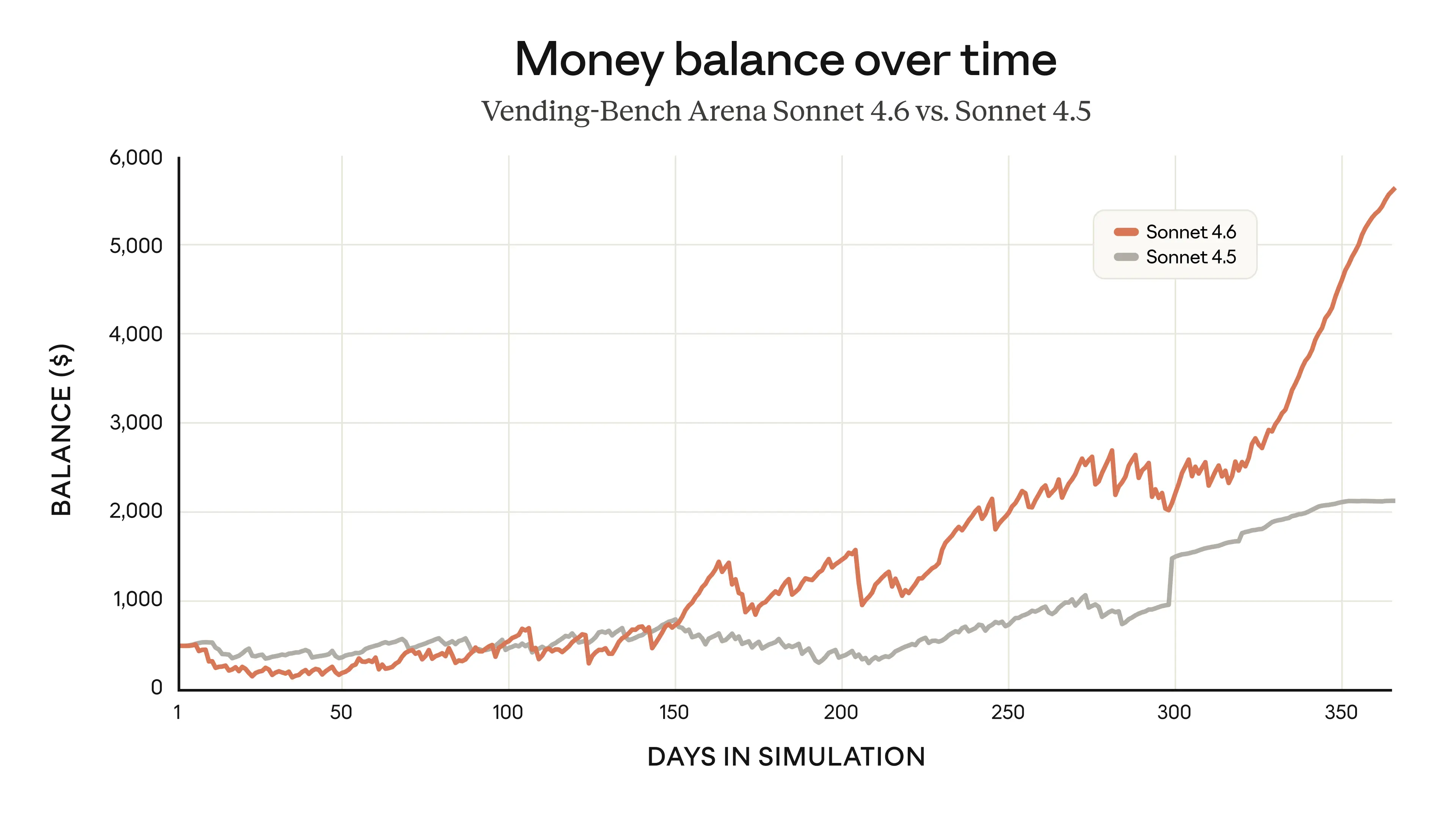

Claude Sonnet 4.6 Ups the AI Ante

Anthropic's Claude Sonnet 4.6 launches with major upgrades in coding, reasoning, and computer use, plus a 1M token context window.