Are machines intelligent? The question sits at the forefront of AI development, and it invites a closer look at the fundamental differences between today's leading AI systems and the human brain. As highlighted in Microsoft Research's The Shape of Things to Come series, the question is not merely academic; how it is answered shapes the future of AI.

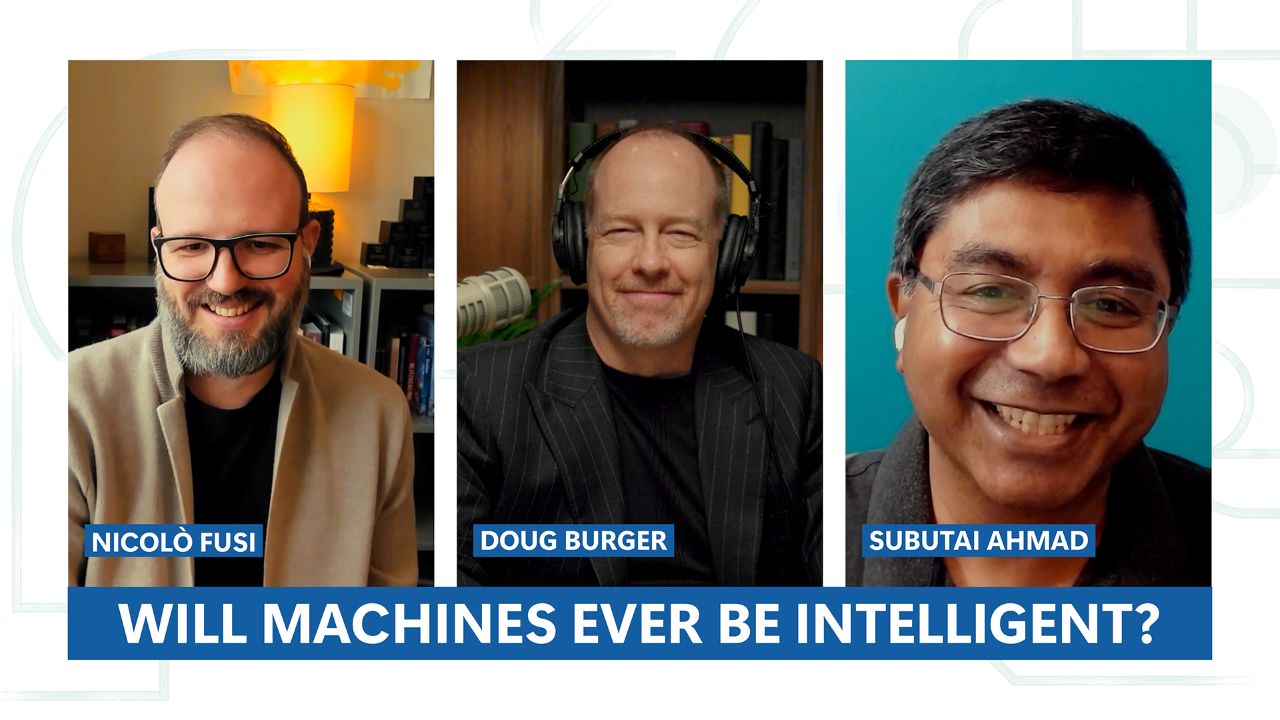

At the heart of the discussion are transformer-based large language models (LLMs), the engines behind much of today's generative AI. While they are remarkably good at processing vast amounts of data and identifying complex patterns, their architecture differs profoundly from that of the human brain. Experts like Nicolò Fusi from Microsoft Research and Subutai Ahmad from Numenta, formerly of Microsoft Research, are exploring these distinctions.

The Transformer's Strengths and Weaknesses

Transformers, with their attention and feedforward layers, are adept at understanding context within a given sequence, like a chat history or a prompt. This allows them to generate coherent and contextually relevant text, a feat that has revolutionized natural language processing.
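To make the two kinds of layers concrete, here is a minimal sketch of a single transformer block in plain Python. It is an illustration under simplifying assumptions, not how any production model is implemented: it uses one attention head and omits layer normalization, positional encodings, and causal masking. The function names and the identity weight matrices are hypothetical choices for this example.

```python
import math

def softmax(scores):
    """Turn raw scores into weights that sum to 1."""
    peak = max(scores)
    exps = [math.exp(s - peak) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(matrix, vec):
    """Multiply a matrix (list of rows) by a vector."""
    return [sum(w * v for w, v in zip(row, vec)) for row in matrix]

def self_attention(tokens, w_q, w_k, w_v):
    """Single-head scaled dot-product attention over a list of token vectors."""
    queries = [matvec(w_q, t) for t in tokens]
    keys = [matvec(w_k, t) for t in tokens]
    values = [matvec(w_v, t) for t in tokens]
    scale = math.sqrt(len(keys[0]))
    outputs = []
    for q in queries:
        # Score this token against every token in the sequence (the "context").
        scores = [sum(a * b for a, b in zip(q, k)) / scale for k in keys]
        weights = softmax(scores)
        # Each output is a weighted mix of all value vectors.
        mixed = [sum(w * v[i] for w, v in zip(weights, values))
                 for i in range(len(values[0]))]
        outputs.append(mixed)
    return outputs

def feed_forward(vec, w1, w2):
    """Position-wise feedforward layer: linear, ReLU, linear."""
    hidden = [max(0.0, h) for h in matvec(w1, vec)]
    return matvec(w2, hidden)

def transformer_block(tokens, w_q, w_k, w_v, w1, w2):
    """Attention then feedforward, each with a residual connection."""
    attended = self_attention(tokens, w_q, w_k, w_v)
    after_attention = [[x + a for x, a in zip(tok, att)]
                       for tok, att in zip(tokens, attended)]
    return [[x + f for x, f in zip(tok, feed_forward(tok, w1, w2))]
            for tok in after_attention]

# Hypothetical toy input: two 2-dimensional "tokens" and identity weights.
identity = [[1.0, 0.0], [0.0, 1.0]]
tokens = [[1.0, 0.0], [0.0, 1.0]]
print(transformer_block(tokens, identity, identity, identity, identity, identity))
```

The attention step is where context enters: every token's output depends on all other tokens in the sequence. The feedforward step then transforms each position independently.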

However, the way transformers acquire knowledge is fundamentally different from how humans learn. Consider the simple act of navigating basement stairs. When one step is unexpectedly altered, the brain doesn't require a full retraining session. Instead, it performs rapid, continuous updates, often triggered by subtle sensory-motor feedback and neuromodulators.

This continuous, adaptive learning is a hallmark of biological intelligence. The brain constantly models the world, making granular adjustments without conscious effort and without forgetting everything it already knows. This contrasts sharply with current AI models, which often need costly retraining or fine-tuning to absorb even minor updates. Fine-tuning on new data can also cause catastrophic forgetting, in which previously learned knowledge is overwritten.
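The staircase example can be sketched as a small, local update driven by prediction error. The code below is a toy analogy only, not a model of the brain or of any method described by the researchers: the function name, the step heights, and the learning rate are all hypothetical. It shows one altered step being relearned over a few trips while expectations for the other steps stay untouched.

```python
def update_on_surprise(expected_heights, step_index, observed_height, learning_rate=0.5):
    """Nudge one step's expected height toward the observation (a simple delta rule).

    Only the entry for the surprising step changes; all others are left as they were.
    """
    error = observed_height - expected_heights[step_index]
    updated = list(expected_heights)
    updated[step_index] += learning_rate * error
    return updated

# Hypothetical staircase: every step is expected to be 18 cm high.
expected = [18.0, 18.0, 18.0, 18.0]

# The third step has been altered to 20 cm; walk the stairs five times.
for _ in range(5):
    expected = update_on_surprise(expected, step_index=2, observed_height=20.0)

print(expected)  # [18.0, 18.0, 19.9375, 18.0]
```

Each trip halves the remaining error for the changed step, and nothing else is relearned. Retraining a large model from scratch, by contrast, revisits all of its parameters.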

The Brain's Efficient, Grounded Intelligence

Subutai Ahmad emphasizes the human brain's remarkable energy efficiency and its ability to learn in a contextual, fine-grained manner. This biological approach is a key area of research for making AI more sustainable and adaptable.

The concept of sensory-motor grounding is also crucial. Human intelligence is deeply intertwined with our physical interaction with the world. We learn through doing, seeing, and feeling, integrating sensory input with motor actions. Current transformer-based LLMs, while sophisticated in language processing, largely lack this direct connection to the physical world, which may limit their capacity for true understanding and reasoning.

This gap raises fundamental questions about the nature of intelligence itself. While transformer models excel at specific tasks, they may not be on a direct path to replicating the broad, adaptable, and efficient intelligence of the human brain. Ongoing research comparing transformer models with the human brain seeks to bridge this gap.

The Road Ahead

Understanding these architectural differences is vital for charting the future of AI. It informs how we develop systems that can truly learn, adapt, and interact with the world in a meaningful way, moving beyond pattern recognition to genuine comprehension. This ongoing dialogue is essential for ensuring that AI development leads to a positive future.