Imitation learning, in which AI agents learn to perform tasks from human examples, typically relies on mapping current observations directly to actions. This common method, Behavior Cloning (BC), often requires very large datasets to account for variability in human behavior, a significant hurdle in real-world applications. A new approach, Predictive Inverse Dynamics Models (PIDMs), detailed in research from Microsoft Research, offers a more data-efficient path by rethinking how an agent learns from demonstrations.

Rethinking Imitation Learning

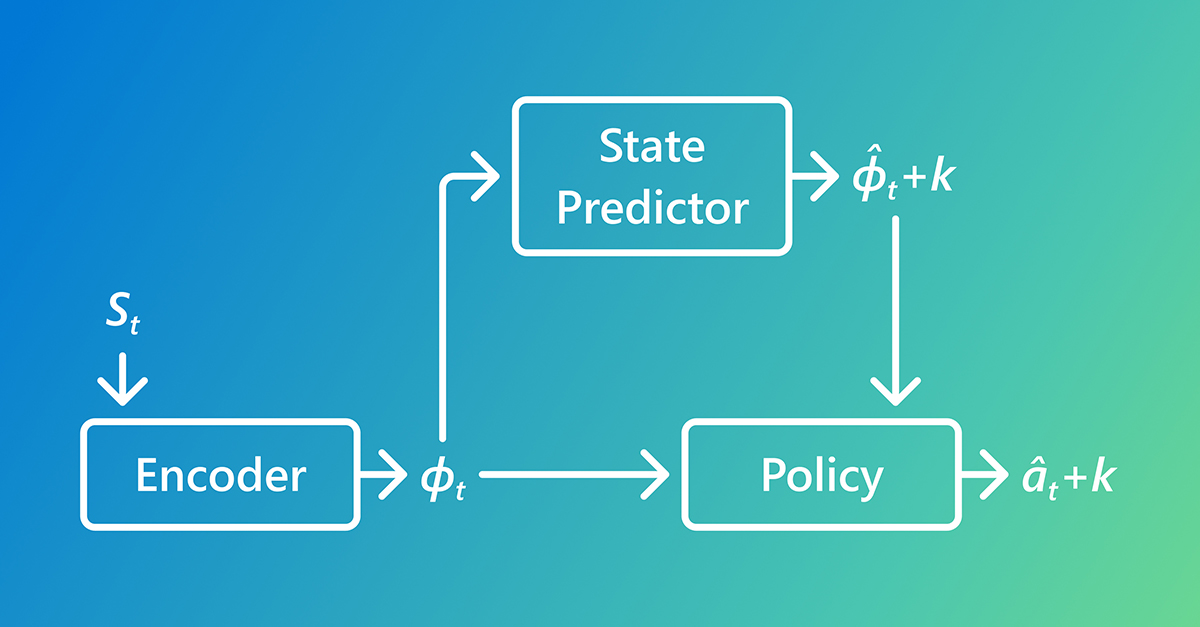

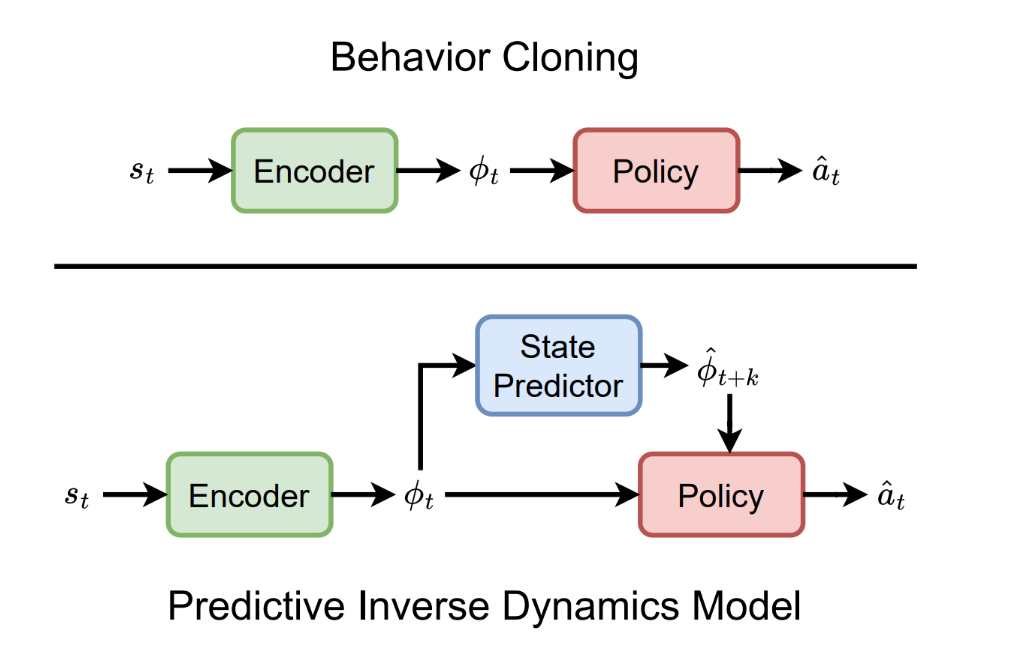

Instead of a direct state-to-action mapping, PIDMs break down imitation learning into two distinct stages. First, they employ a state predictor to forecast plausible future states. Second, an inverse dynamics model uses this predicted future state to infer the action required to transition from the current state towards that predicted future. This two-stage process reframes the core question from 'What action would an expert take?' to 'What is the expert trying to achieve, and what action leads there?'

This shift gives the agent a sense of direction: grounding action selection in a predicted outcome clarifies intent and reduces ambiguity about which action to take. Even when predictions are imperfect, this directional guidance can improve learning efficiency, which is why PIDMs can be substantially more data-efficient than BC.
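To make the two-stage idea concrete, here is a minimal tabular sketch in Python. It is not the researchers' implementation, which works on raw video with learned models. The toy 1-D track, the `DEMOS` data, and the function names `fit_state_predictor`, `fit_inverse_dynamics`, and `pidm_act` are all assumptions made for illustration.

```python
from collections import Counter, defaultdict

# Hypothetical toy demonstrations: (state, action, next_state) on a 1-D track.
# States are integer positions; actions are -1 (left), 0 (stay), +1 (right).
DEMOS = [
    (0, +1, 1), (1, +1, 2), (2, +1, 3),
    (0, +1, 1), (1, +1, 2), (2, 0, 2), (2, +1, 3),
]

def fit_state_predictor(demos):
    """Stage 1: map each state to the next state seen most often after it."""
    counts = defaultdict(Counter)
    for state, _action, next_state in demos:
        counts[state][next_state] += 1
    return {s: c.most_common(1)[0][0] for s, c in counts.items()}

def fit_inverse_dynamics(demos):
    """Stage 2: map each (state, next_state) pair to its most frequent action."""
    counts = defaultdict(Counter)
    for state, action, next_state in demos:
        counts[(state, next_state)][action] += 1
    return {k: c.most_common(1)[0][0] for k, c in counts.items()}

def pidm_act(state, predictor, inverse_dynamics):
    """Predict a plausible future, then infer the action that leads toward it."""
    predicted_next = predictor[state]
    return inverse_dynamics[(state, predicted_next)]

predictor = fit_state_predictor(DEMOS)
inverse_dynamics = fit_inverse_dynamics(DEMOS)

# Roll the policy forward until it reaches a state with no demonstrated future.
state, trajectory = 0, [0]
while state in predictor:
    state += pidm_act(state, predictor, inverse_dynamics)
    trajectory.append(state)
print(trajectory)  # [0, 1, 2, 3]
```

In this toy setting, one demonstrator briefly hesitates at position 2, but the predicted future (position 3) still selects the action that moves toward it. A real system would replace both lookup tables with learned models.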

Real-World Validation in Gameplay

To validate PIDMs, researchers trained agents on human gameplay demonstrations within a complex 3D video game. The agents operated directly from raw video input, processed at 30 frames per second, and handled real-time interactions, visual artifacts, and system delays. The model learned to predict future states and choose actions that advanced gameplay toward those predicted futures, all without relying on hand-coded game variables.

The experiments ran on a cloud gaming platform, which introduced further delays and visual distortions. Even so, PIDM agents consistently matched human play patterns and achieved high success rates, demonstrating that the approach is robust under challenging, real-world conditions.

Why PIDMs Outperform BC

The core advantage of PIDMs lies in clarifying intent. By focusing on a plausible future, the model can better disambiguate actions in the present. While prediction errors can introduce some risk, the benefit of reduced ambiguity often outweighs this, particularly when predictions are reasonably accurate.

This is especially critical in scenarios where human behavior is variable or driven by long-term goals not immediately apparent from the current visual input. BC struggles here, requiring extensive data to interpret noisy demonstrations. PIDMs, by linking actions to intended future states, sharpen these demonstrations, enabling effective learning from significantly fewer examples.
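One way to picture the ambiguity argument is a fork where demonstrators steer either left or right. The sketch below is an illustrative assumption, not an experiment from the research: the `FORK_DEMOS` data is made up, BC is modeled as a squared-error regressor (which predicts the mean action), and the inverse dynamics step is modeled as the mean action among demonstrations that reached the predicted lane.

```python
from collections import Counter
from statistics import mean, pvariance

# Hypothetical demonstrations at a single fork state: (steering, next_lane).
# Some experts pass the obstacle on the left (-1.0), others on the right (+1.0).
FORK_DEMOS = [
    (-1.0, "left"), (+1.0, "right"), (-1.0, "left"),
    (+1.0, "right"), (-1.0, "left"),
]

actions = [a for a, _lane in FORK_DEMOS]

# BC under a squared-error loss predicts the mean action, which here lies
# between the two modes and matches neither demonstrated behavior.
bc_action = mean(actions)
bc_spread = pvariance(actions)

# PIDM-style: first pick a plausible future (the most common next lane) ...
predicted_lane = Counter(lane for _a, lane in FORK_DEMOS).most_common(1)[0][0]

# ... then average only the actions that actually led to that future.
matching = [a for a, lane in FORK_DEMOS if lane == predicted_lane]
pidm_action = mean(matching)
pidm_spread = pvariance(matching)

print(bc_action, bc_spread)
print(predicted_lane, pidm_action, pidm_spread)
```

Conditioning on the predicted lane removes the spread in the action labels for this toy data, which is the sense in which the future state "sharpens" the demonstrations. If the predicted lane were wrong or implausible, the inferred action would be wrong too, which mirrors the prediction-error risk noted above.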

Evaluation and Results

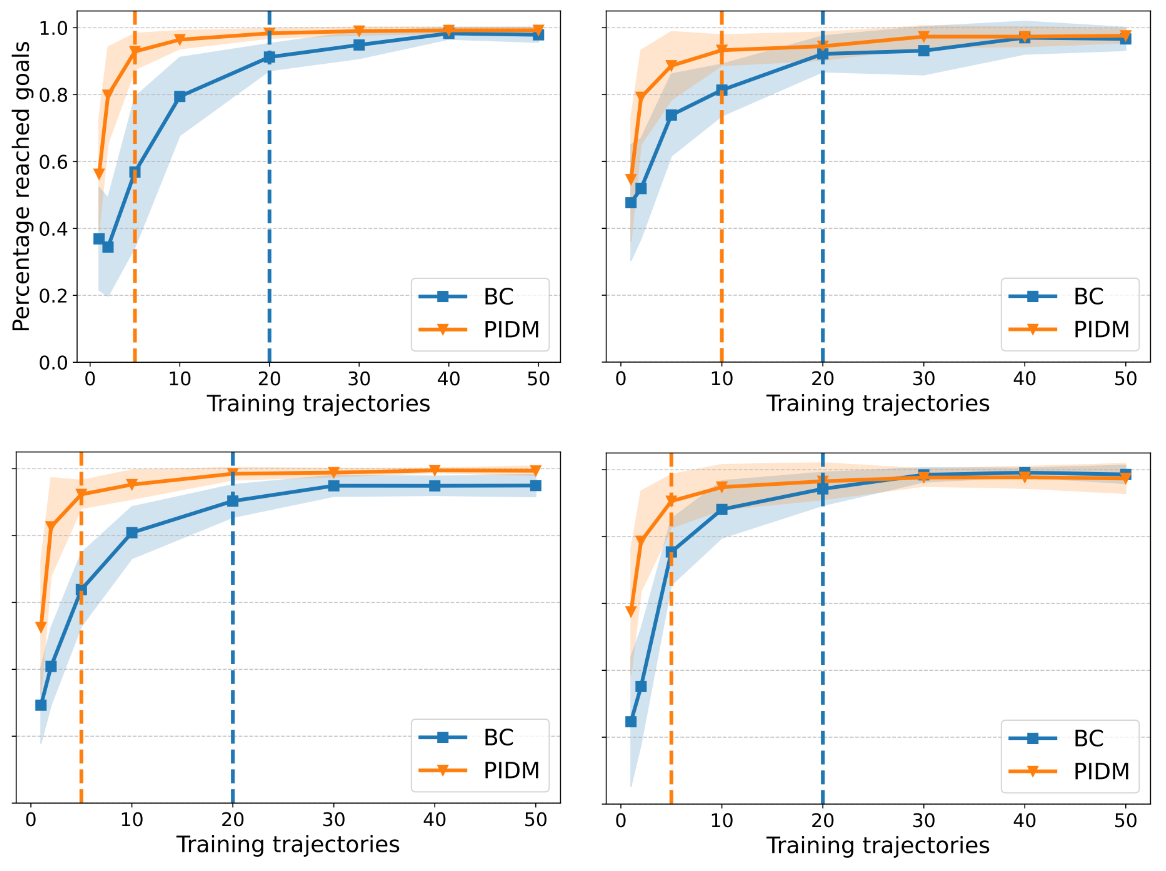

Experiments were conducted in both a simple 2D environment and a complex 3D game. In both, PIDMs reached high success rates with fewer demonstrations than BC. In the 2D setting, BC required two to five times more data to match PIDM performance. In the 3D game, BC needed 66% more data to achieve comparable results.

Takeaway: Intent Matters

The core insight is that making intent explicit through future prediction significantly enhances imitation learning. PIDMs shift the paradigm from simple mimicry to goal-oriented action. While highly unreliable predictions can hinder performance, reasonably accurate forecasts allow PIDMs to excel, especially in complex scenarios with limited or variable demonstration data.

This approach offers a powerful way to learn from fewer examples by focusing on the underlying purpose of actions rather than just their superficial execution. It highlights how understanding where an agent is trying to go can be more critical than perfectly replicating past movements.