#Prompt Injection

16 articles with this tag

AI Agents: Beyond Chatbots with Open Source

Cedric Clyburn from Red Hat explores the evolution from chatbots to AI agents, detailing the architecture of OpenCopilot and its security implications.

AI Hacker "Pliny the Liberator" Tests GPT-4 Security

AI security researcher "Pliny the Liberator" demonstrates a novel jailbreaking technique using "tokenades" to manipulate AI models, showcasing the ongoing challenges in AI security.

AI Agents: The "Renting Edge" in Cybersecurity

Experts discuss how AI agents are evolving beyond prompt injection to sophisticated "promptware" attacks, necessitating a shift in cybersecurity strategies.

OpenAI Tackles AI Agent 'Prompt Injection'

OpenAI is adapting its AI security strategy to counter sophisticated prompt injection attacks, treating them as social engineering challenges.

Databricks Tackles AI Agent Security

Databricks outlines a practical guide to securing AI agents against prompt injection by applying Meta's 'Agents Rule of Two' framework and implementing layered controls.

OWASP Top 10 LLM Risks Explained

Jeff Crume from IBM breaks down the OWASP Top 10 for LLM Applications, highlighting critical security risks like prompt injection and data leakage.

AI Agents Need Zero Trust

Zero Trust principles are essential for securing autonomous AI agents, managing their non-human identities, and defending against threats like prompt injection.

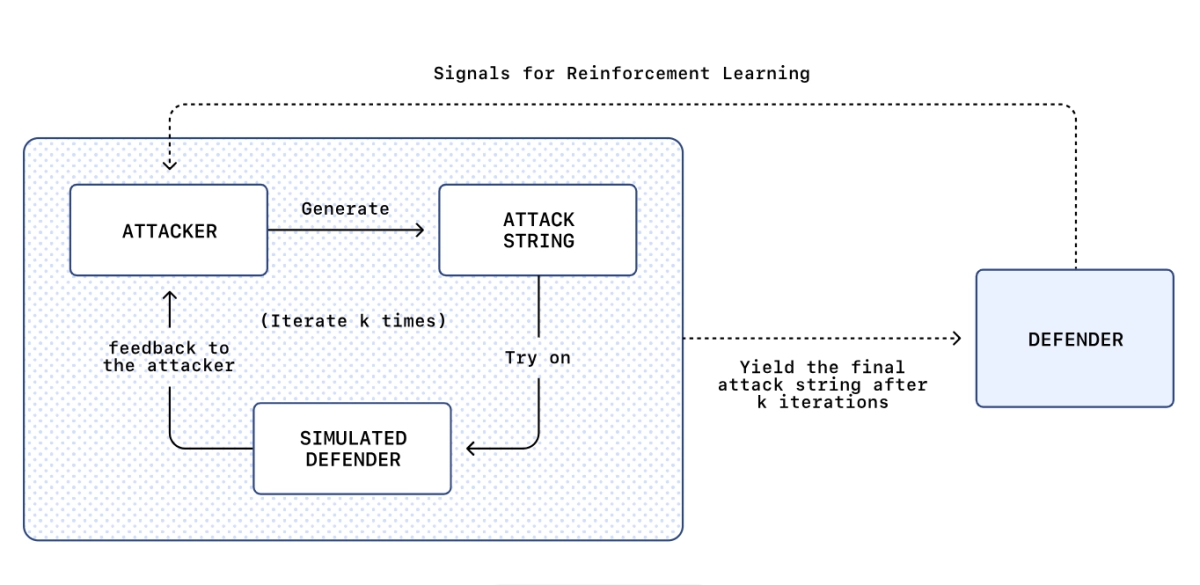

ChatGPT prompt injection is so bad they built an AI attacker

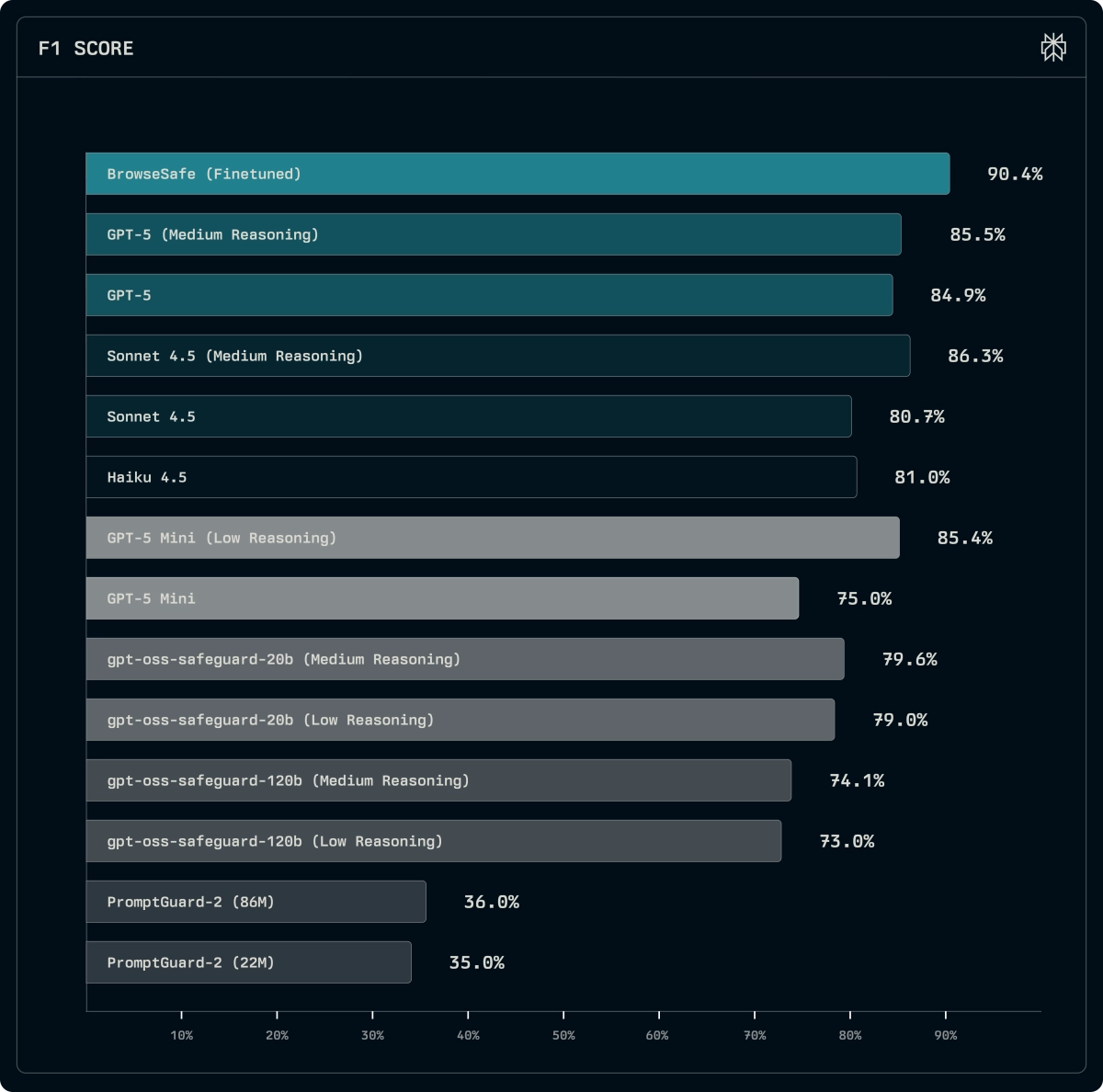

Brave AI Browsing Enters Testing, Redefining Web Interaction

New Benchmark Targets Prompt Injection Defense in AI Browsers

Autonomous AI Agent Security: Context Engineering's New Battleground

AI Agent Marketplaces Face Critical Flaws, Microsoft Research Finds

Opera Neon Hit by AI Browser Prompt Injection Flaw

AI's Double-Edged Sword: Mastering Governance and Security for Trustworthy Systems

OpenAI’s ChatGPT Agent: A New Frontier in Autonomous AI

Safeguarding Generative AI: IBM's Defense-in-Depth Approach to LLM Security

IBM's proposed solution introduces a "policy enforcement point" (PEP), acting as a proxy between the user and the LLM, and a "policy decision point" (PDP) or policy engine.