Google is rolling out the Interactions API, a new unified interface for its Gemini models. Currently in beta, the API is positioned as a more robust alternative to the existing generateContent API.

The Interactions API is designed to handle state management, tool orchestration, and long-running tasks more efficiently. It supports both general use cases and specialized functions like tool calling and agent interactions.
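To make the state-management point concrete, here is a minimal Python sketch of what server-side conversation state means in general. It is a toy, in-memory illustration and not Google's SDK: the class `ToyInteractionServer`, its `create` method, and the `previous_id` parameter are all hypothetical names chosen for this example, and no real Gemini call is made.

```python
from dataclasses import dataclass, field
from itertools import count


@dataclass
class ToyInteractionServer:
    """Hypothetical in-memory stand-in for a stateful API (not the real Interactions API)."""

    _turns: dict = field(default_factory=dict)
    _ids: count = field(default_factory=lambda: count(1))

    def create(self, user_input, previous_id=None):
        # The "server" looks up earlier turns by ID, so the client
        # does not have to resend the whole conversation each time.
        history = list(self._turns.get(previous_id, []))
        history.append(user_input)
        interaction_id = f"int-{next(self._ids)}"
        self._turns[interaction_id] = history
        return interaction_id, history


server = ToyInteractionServer()
first_id, _ = server.create("Plan a trip to Kyoto.")
# The follow-up passes only the new input plus a reference to the earlier turn.
second_id, context = server.create("Make it three days.", previous_id=first_id)
print(second_id, context)
# prints: int-2 ['Plan a trip to Kyoto.', 'Make it three days.']
```

In a stateless design, by contrast, the client would have to send both messages again on the second call. The sketch shows only that difference; it says nothing about how Google implements it.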

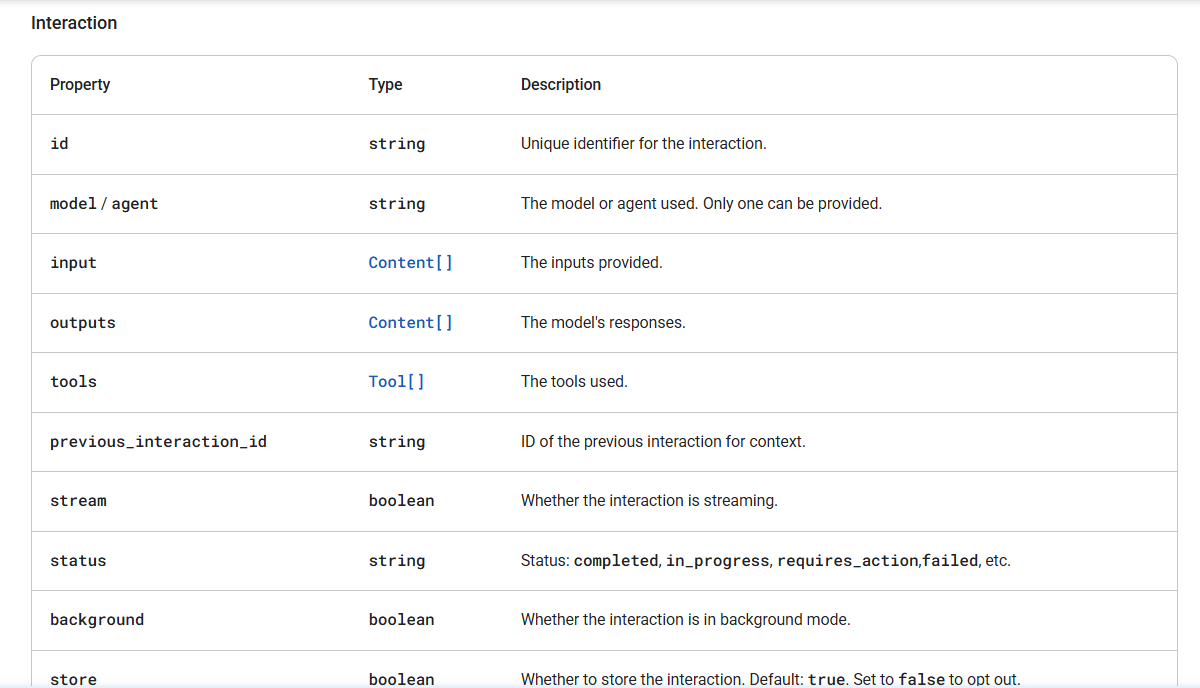

Unified Interface for Gemini Models

The API consolidates interaction patterns such as general model calls, tool calling, and agent interactions into a single interface. Developers building complex AI applications can work with Gemini models and agents through that one interface rather than through separate ones.