AI coding tools are outpacing human review capabilities, creating a critical bottleneck. New research from Causal Dynamics Lab (CDL) pinpoints the issue: AI agents spend a disproportionate share of their effort searching for files rather than editing them. CDL's new product, Cielara Code, addresses this directly.

In independent tests, Cielara Code outperformed both Claude Code (Opus-4.6) and OpenAI Codex (GPT-5.4) in code localization accuracy.

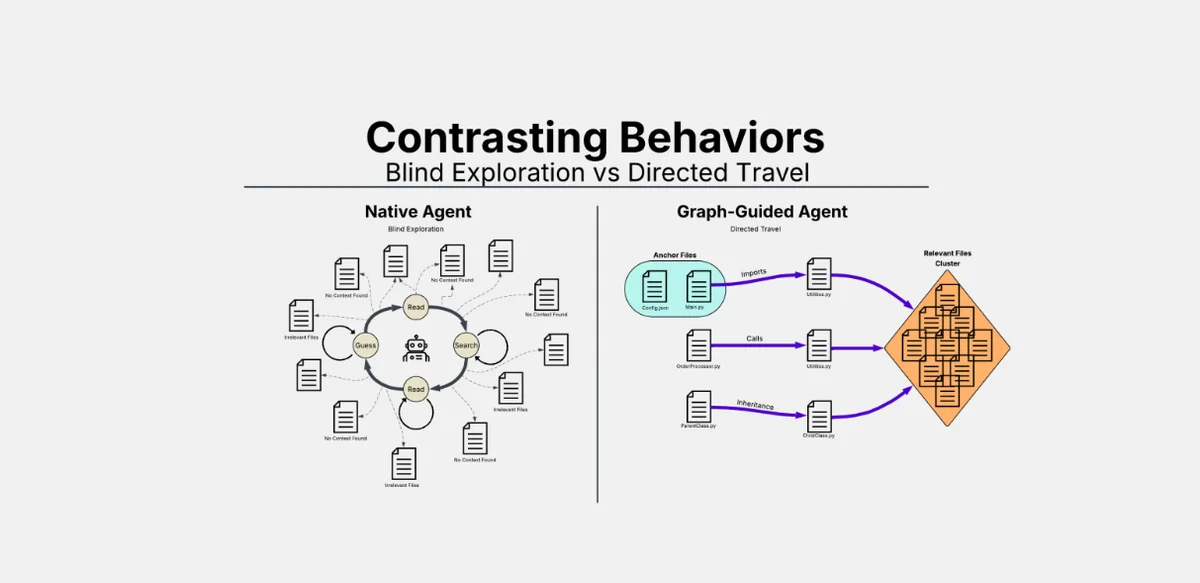

Agents Get Lost in the Code

CDL analyzed thousands of coding sessions, finding that AI agents dedicate 56.8% of their actions to reading files and 24.2% to using grep. Actual code edits accounted for less than 1%.
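Figures like these come from tallying classified agent actions across sessions. The minimal sketch below assumes each action in a session log has already been labeled with a category. The labels `read`, `grep`, `edit`, and `other` and the toy sessions are hypothetical and are not CDL's data or taxonomy. The code computes each category's share of all actions.

```python
from collections import Counter

# Hypothetical, already-classified session logs (illustrative only, not CDL's dataset).
sessions = [
    ["read", "read", "grep", "read", "edit"],
    ["grep", "read", "read", "other", "read"],
]

def action_shares(sessions):
    """Return each action category's share of all actions, pooled across sessions, in percent."""
    counts = Counter(action for session in sessions for action in session)
    total = sum(counts.values())
    return {action: 100 * n / total for action, n in counts.items()}

# Print categories from most to least frequent.
for action, share in sorted(action_shares(sessions).items(), key=lambda kv: -kv[1]):
    print(f"{action}: {share:.1f}%")
```

Pooling every action gives more weight to long sessions. Averaging per-session shares would be an equally reasonable choice, and the article does not say which method CDL used.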

The problem intensifies with complexity: tasks involving more than six files saw a significant drop in recall and a fourfold increase in compute cost for failed attempts. This mirrors the "dynamic verification debt" highlighted in the 2025 DORA report, which reported a 7.2% drop in deployment stability associated with AI coding tools.
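The article does not define how CDL measures recall. For code localization, one common reading is file-level recall, which is assumed in this sketch. File-level recall is the fraction of files that actually needed changes that the agent identified. The function name `file_recall` and the file paths are hypothetical. The example task touches seven files, just past the six-file threshold mentioned above.

```python
def file_recall(predicted, gold):
    """Fraction of gold (truly relevant) files that appear among the predicted files."""
    gold = set(gold)
    if not gold:
        raise ValueError("gold set must be non-empty")
    return len(set(predicted) & gold) / len(gold)

# Hypothetical task touching seven files; the agent finds four of them plus one irrelevant file.
gold_files = [f"src/module_{i}.py" for i in range(7)]
predicted_files = gold_files[:4] + ["src/unrelated.py"]

print(f"recall = {file_recall(predicted_files, gold_files):.2f}")
```

Recall ignores false positives such as `src/unrelated.py`. Pairing it with precision would also penalize agents that over-retrieve files.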

"Every coding agent out there today uses grep, which is like a surgeon operating without imaging," said Hasibul Haque, CEO of Causal Dynamics Lab.