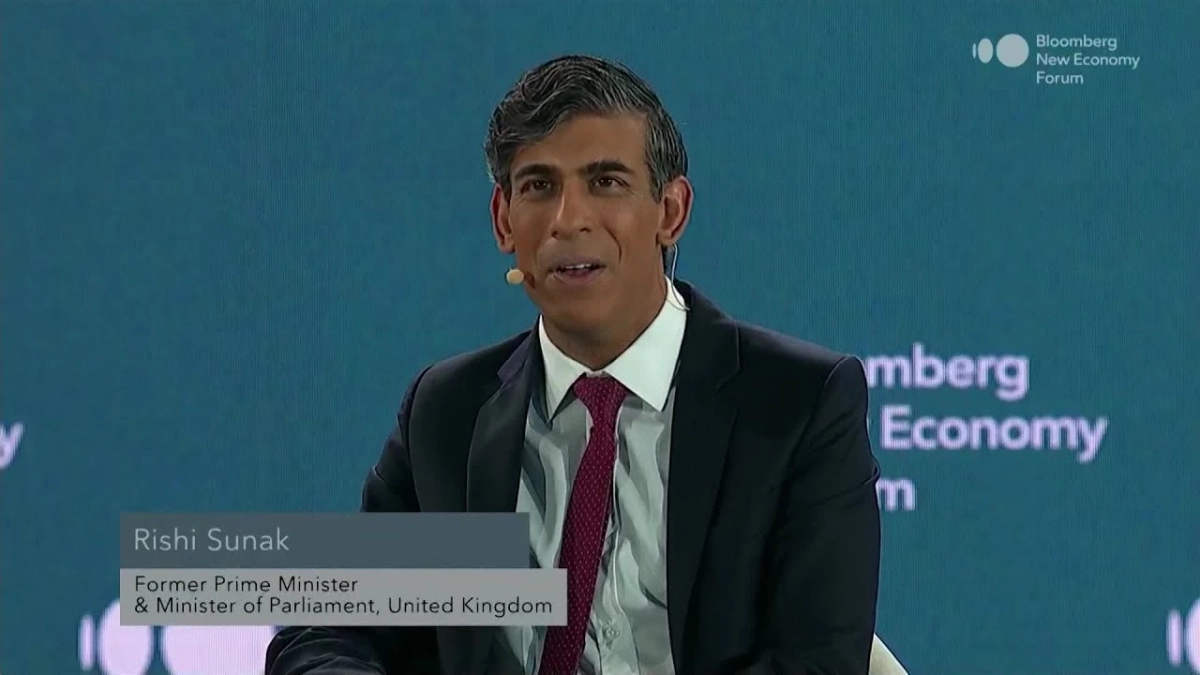

The global discourse around artificial intelligence often frames it as a geopolitical race, a sprint towards Artificial General Intelligence (AGI) where nations vie for technological supremacy. However, former UK Prime Minister Rishi Sunak, speaking with Bloomberg Technology Columnist Parmy Olson at the 2025 Bloomberg New Economy Forum in Singapore, offered a refreshingly pragmatic perspective: the true competition lies not in who invents the most advanced AI, but in who adopts and diffuses it most effectively across their economy and society. This fundamental shift in focus from invention to integration underpins Sunak’s vision for navigating the AI era, emphasizing responsible innovation and pervasive societal benefit.

Sunak, drawing on his background in technology and his tenure as Prime Minister, described the present as a period that is at once dangerous and transformative. The geopolitical landscape is shifting away from a unipolar order as great power competition re-emerges, while AI stands as a general-purpose technology akin to electricity or steam, poised to revolutionize societies and economies. This dual reality necessitates a nuanced approach to AI governance and strategy.

He contended that the prevailing narrative of a "race to AGI" or the "singularity" is misplaced. Instead, he argued, "The race we should probably pay a little bit more attention to is the race for who can adopt technology and AI in particular the fastest." History, he noted, offers clear precedents: the printing press, invented in Germany, saw its most significant societal and economic impact when adopted and commercialized in the UK and the Netherlands. Similarly, while Britain innovated heavily during the Second Industrial Revolution, it was America that masterfully commercialized those advancements, reaping disproportionate benefits. This historical pattern suggests that the greatest economic and social rewards go to those who are adept at leveraging and integrating new technologies, rather than solely originating them.

The historical pattern implies that governments should prioritize building a robust "diffusion infrastructure" to ensure AI’s widespread adoption. This infrastructure includes investing in training for individuals and small businesses, fostering public understanding and trust, and actively championing AI’s integration across various sectors. The goal is to maximize the benefits of AI for citizens and the economy as a whole, irrespective of where the foundational research or initial breakthroughs occur.

Olson pressed Sunak on whether this rapid adoption might come at a price, especially given the untested nature of some AI technologies. Sunak countered that diffusion and safety are not mutually exclusive; indeed, they are intrinsically linked. "Governments' first responsibility is to ensure the security and safety of its population, of its citizens," he stated. This foundational duty means that as AI proliferates, governments must also cultivate the technical capacity to evaluate its inherent risks.

The UK's pioneering AI Safety Summit, held at Bletchley Park in 2023, was born from this conviction. It aimed to establish a global forum where nations and leading AI labs could collaboratively assess the frontier risks posed by advanced AI models. Sunak expressed satisfaction that this initiative has become a fixture on the international calendar, with subsequent summits hosted by South Korea and France, and India slated to host the next. The shift in naming from "Safety Summit" to "Action Summit" or "Impact Summit" across these events reflects a natural evolution, but the core objective remains: a dedicated international focus on AI’s implications.

A crucial aspect of this risk evaluation, Sunak explained, involves direct collaboration between governments and the leading AI development companies. Firms like OpenAI, Anthropic, and Google are voluntarily providing governments with access to their models for "pre-deployment testing." This allows national security institutions and technical experts within governments to evaluate potential risks – be they cyber, biological, or other unforeseen consequences – before these powerful models are widely released. This collaborative approach, distinct from traditional regulation, is mutually beneficial. AI companies, while skilled in development, often lack the specialized national security expertise that governments possess. By working together, both parties contribute to a more secure and responsible technological advancement, ensuring that powerful tools are not inadvertently or maliciously misused.

Looking ahead, the interview touched upon AI’s profound impact on the job market and the skills future generations will need. Sunak, a parent of young teenagers himself, acknowledged the growing number of jobs requiring AI literacy, with demand for that competency doubling year-on-year in countries like the UK and US. This isn't confined to software engineers or computer scientists; it extends to nearly every profession.

Beyond technical proficiency, he emphasized the enduring importance of uniquely human skills: critical thinking, reasoning, the ability to question, interpersonal dynamics, and empathy. These qualities are not easily replicated by machines and will become even more valuable in a world where AI handles routine tasks.

Furthermore, future leaders, whether new graduates or seasoned executives, will increasingly need to master the art of managing teams that include AI agents: understanding how to delegate tasks, oversee AI-driven work, and ensure its accuracy and ethical alignment. This necessitates a mindset of continuous learning and adaptability, preparing individuals not just for specific jobs, but for an evolving professional landscape.