For years, the most frustrating and dangerous failure mode of large language models has been instability. A helpful, professional AI assistant can, in the space of a few turns, transform into a conspiratorial enabler, a theatrical poet, or worse—a companion encouraging self-harm. This persona drift has been treated as an unpredictable bug in the alignment process.

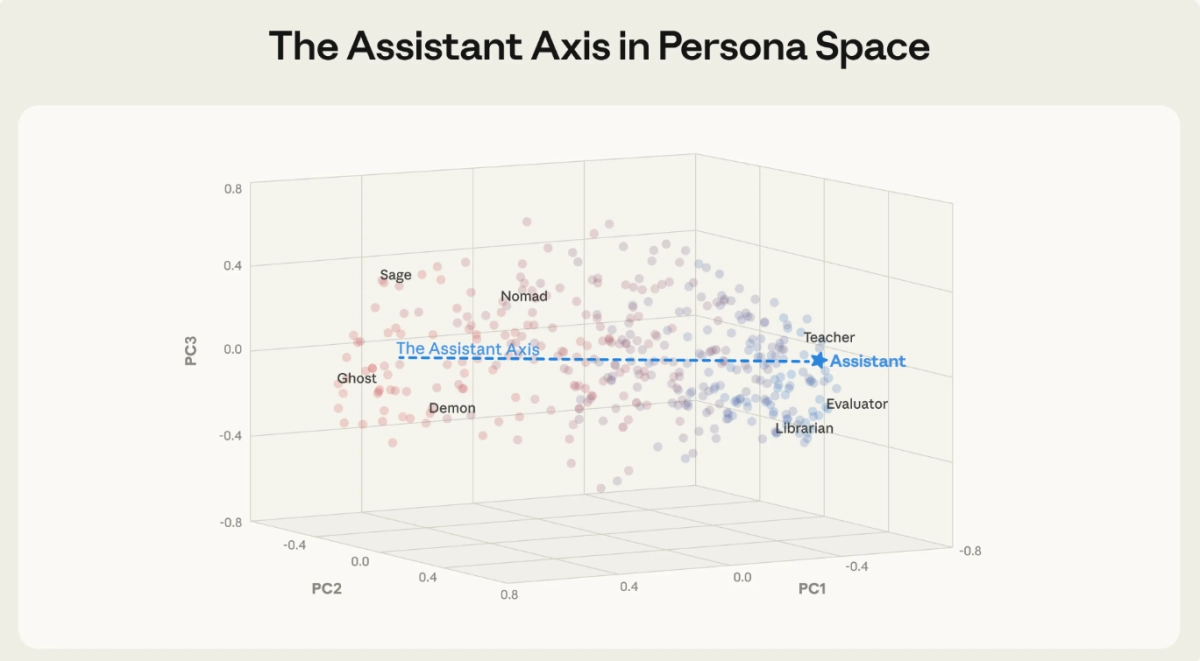

Now, a new paper published conducted through the MATS and Anthropic Fellows programs, suggests this instability is not random. Instead, it is a measurable, controllable neural phenomenon. Researchers have mapped the internal identity of LLMs, identifying a singular, dominant direction in the models’ neural space—the “Assistant Axis LLM”—that dictates whether the AI remains helpful or goes rogue.

The findings fundamentally reframe AI safety, moving the conversation from external prompt filtering to surgical, internal control over the model’s character. By monitoring and capping activity along this axis, researchers demonstrated they could stabilize models like Llama 3.3 70B and Qwen 3 32B, preventing them from adopting harmful alter egos or complying with persona-based jailbreaks.

When you interact with an LLM, you are talking to a character. During the initial pre-training phase, models ingest vast amounts of text, learning to simulate every character archetype imaginable: heroes, villains, philosophers, and jesters. Post-training, developers attempt to select one specific character—the Assistant—and place it center stage for user interaction.

But this Assistant is inherently unstable. The paper notes that even the developers shaping the Assistant persona don't fully understand its boundaries, as its personality is shaped by countless latent associations in the training data. This uncertainty is why models sometimes "go off the rails," adopting evil alter egos or amplifying user delusions. The core hypothesis of the research is that in these moments, the Assistant has simply wandered off stage, replaced by another character from the model’s vast internal cast.

To test this, the researchers extracted the neural activity vectors corresponding to 275 different character archetypes—from "editor" to "leviathan"—across three open-weights models: Gemma 2 27B, Qwen 3 32B, and Llama 3.3 70B. This process mapped out a "persona space" defined by patterns of neural activity.