Yoko Li's TetrisBench project, unveiled on February 23, 2026, began as a simple curiosity: pitting LLMs against humans in Tetris. The goal wasn't merely to win, but to observe how systems that reason fundamentally differently from humans would approach a familiar optimization problem. With its structured state and clear, turn-based mechanics, Tetris offered an ideal environment for evaluating an AI's reasoning rather than its perception.

The Initial Flop

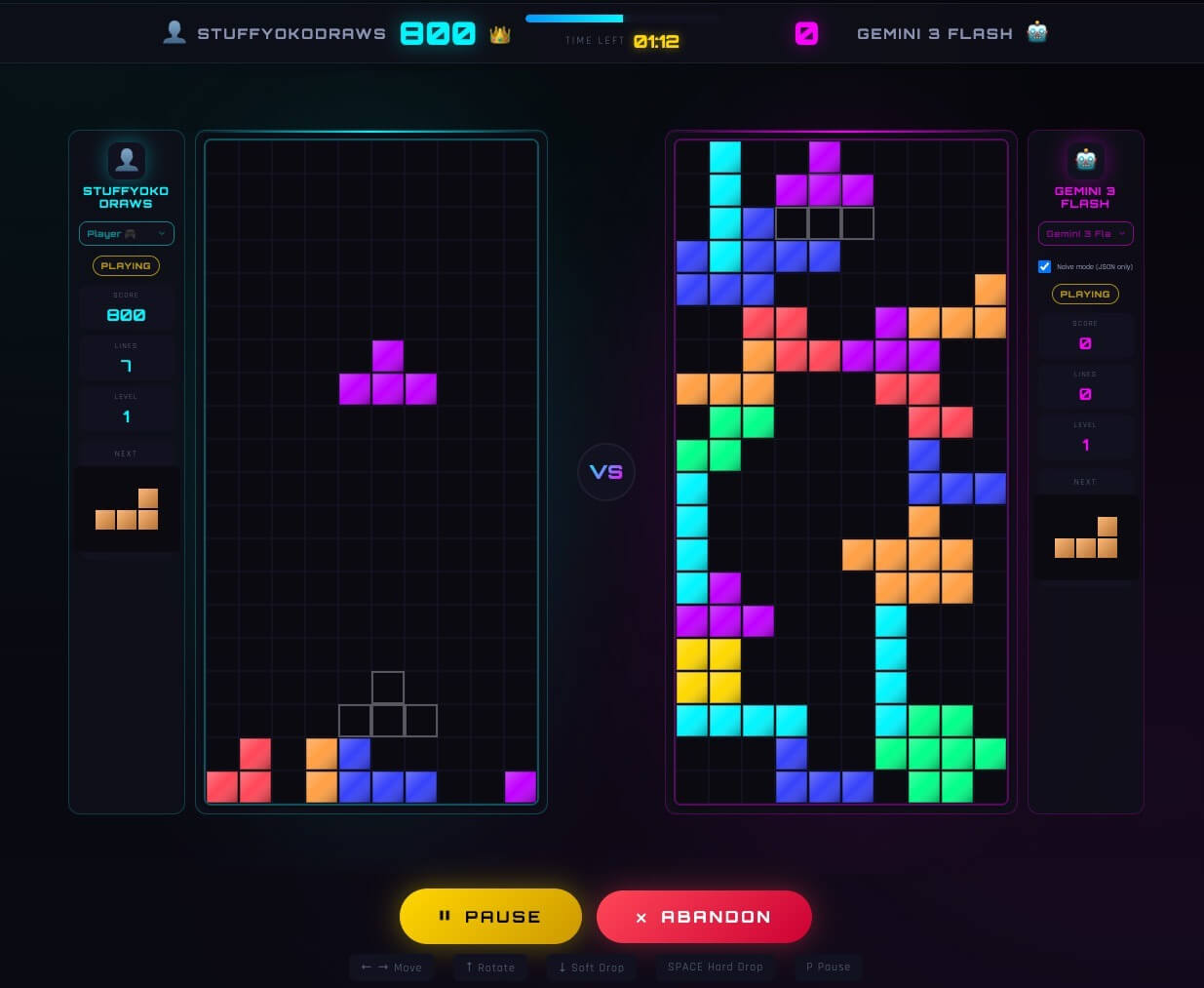

Early attempts with frontier models such as Opus 4.5, GPT 5.2, and Gemini 3 Flash were disastrous. When fed board states directly as JSON, the models produced inconsistent, nonsensical, and even self-destructive moves, and high latency slowed gameplay further. Language models are not trained for step-by-step spatial planning over an evolving state, and this direct-reasoning approach exposed that weakness.
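The source does not describe TetrisBench's actual JSON format, so the sketch below is only an illustration of what "board states encoded as JSON" might look like, together with a strict parser for the model's reply. Everything in it is an assumption: the field names (`board`, `current_piece`, `next_piece`), the reply schema `{"rotation": r, "column": c}`, and the helper functions `encode_state` and `parse_move` are hypothetical. The parser shows one simple way a harness could reject the kind of nonsensical moves described above instead of executing them.

```python
import json

# Hypothetical encoding: TetrisBench's real schema is not given in the source.
WIDTH, HEIGHT = 10, 20  # standard Tetris playfield dimensions


def empty_board():
    """Return a HEIGHT x WIDTH grid of 0s (0 = empty cell, 1 = filled cell)."""
    return [[0] * WIDTH for _ in range(HEIGHT)]


def encode_state(board, current_piece, next_piece):
    """Serialize one game state into a compact JSON string for a prompt."""
    state = {
        "width": WIDTH,
        "height": HEIGHT,
        "board": board,  # row 0 is the top of the well
        "current_piece": current_piece,
        "next_piece": next_piece,
    }
    return json.dumps(state, separators=(",", ":"))


def parse_move(reply):
    """Parse a hypothetical {"rotation": r, "column": c} reply.

    Returns a (rotation, column) tuple, or None if the reply is not valid JSON,
    is missing fields, or names an out-of-range rotation or column.
    """
    try:
        move = json.loads(reply)
    except json.JSONDecodeError:
        return None
    if not isinstance(move, dict):
        return None
    rot, col = move.get("rotation"), move.get("column")
    # `type(...) is int` rejects bools, which are a subclass of int in Python.
    if type(rot) is not int or type(col) is not int:
        return None
    if not (0 <= rot < 4 and 0 <= col < WIDTH):
        return None
    return rot, col


# Example: bottom row filled except the rightmost column, an I piece to place.
board = empty_board()
board[HEIGHT - 1] = [1] * (WIDTH - 1) + [0]
prompt_payload = encode_state(board, "I", "T")
```

Validating the reply before applying it keeps a malformed or out-of-bounds answer from corrupting the game, though it cannot make a legal but poor move any better.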