Samuel Colvin, CEO and founder of Pydantic, recently joined the Latent Space podcast to discuss the intricacies of building LLM agents, emphasizing the crucial role of type safety and well-defined APIs in achieving reliable and efficient execution.

Introducing Samuel Colvin and Pydantic AI

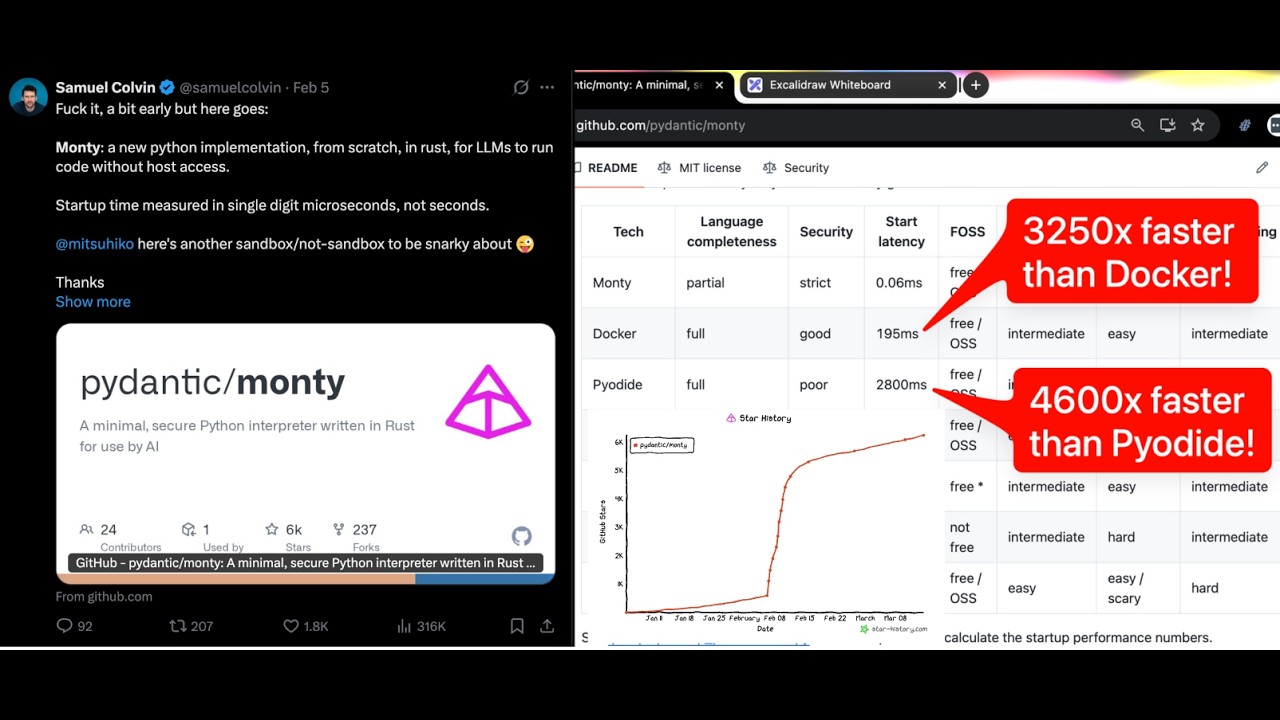

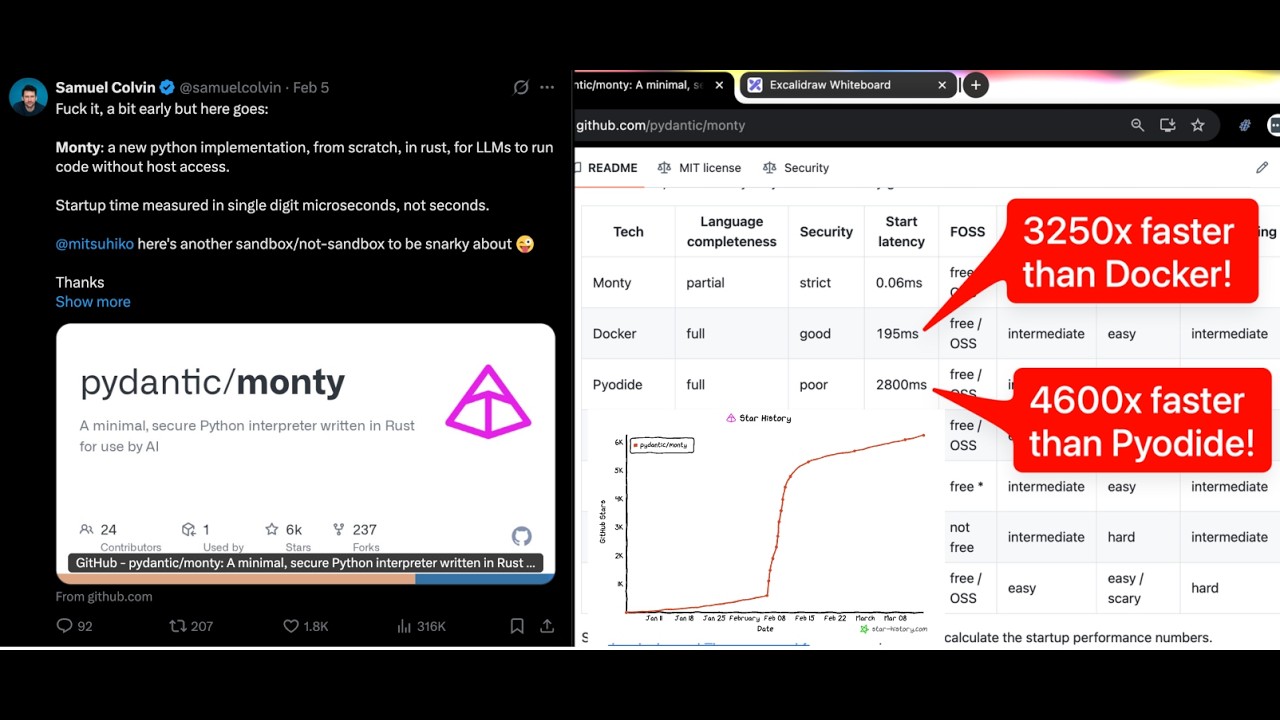

Samuel Colvin is a prominent figure in the Python ecosystem, best known for his work on Pydantic, a data validation library built on Python type hints. Pydantic has become a cornerstone for many developers building data-intensive applications, offering a robust and developer-friendly way to ensure data integrity. As the CEO and founder of Pydantic, Colvin is now applying these principles to the fast-growing field of LLM agents through Pydantic AI, the team's agent framework, aiming to bring the same level of structure and reliability to AI-driven workflows.
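To make "validation driven by type hints" concrete, here is a minimal sketch that uses only the Python standard library. It is not Pydantic's API or implementation; the `ToolCall` class, its fields, and the local `ValidationError` are hypothetical names chosen for illustration. It only shows the underlying idea: the annotations on a class double as a runtime schema.

```python
from dataclasses import dataclass, fields
from typing import get_type_hints


class ValidationError(ValueError):
    """Raised when a field value does not match its type hint (illustrative only)."""


@dataclass
class ToolCall:
    # Hypothetical payload an agent might receive from a model.
    tool_name: str
    max_results: int

    def __post_init__(self) -> None:
        # Read the class's type hints and check each field against them.
        hints = get_type_hints(type(self))
        for field in fields(self):
            value = getattr(self, field.name)
            expected = hints[field.name]
            # bool is a subclass of int, so reject it explicitly for int fields.
            wrong_type = not isinstance(value, expected)
            bool_as_int = expected is int and isinstance(value, bool)
            if wrong_type or bool_as_int:
                raise ValidationError(
                    f"{field.name}: expected {expected.__name__}, "
                    f"got {type(value).__name__}"
                )


# A well-formed payload is accepted.
ok = ToolCall(tool_name="search", max_results=5)

# A malformed payload fails at construction instead of deep inside the agent.
try:
    ToolCall(tool_name="search", max_results="five")
except ValidationError as exc:
    error_message = str(exc)
```

This sketch only handles plain classes such as `str` and `int`. A real validation library also has to cover nested models, generics, and type coercion, which is the work Pydantic takes on.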

The LLM Agent Landscape: Challenges and Opportunities

Colvin began by acknowledging the rapid advancements in the LLM space, noting the growing interest in agents that can interact with external tools and perform complex tasks. He highlighted that while LLMs are strong at generating code and understanding natural language, turning those capabilities into reliable agent behavior requires careful engineering. The conversation focused on several key areas: