OpenAI has unveiled Aardvark, an autonomous security agent powered by GPT-5. Now in private beta, Aardvark is pitched as an "agentic security researcher" designed to continuously hunt, validate, and even help patch vulnerabilities in software codebases.

According to OpenAI’s announcement, Aardvark operates less like a traditional tool and more like a human security expert. Instead of relying on methods like fuzzing, it uses LLM-powered reasoning to read and understand code, analyze its behavior, write and run tests in a sandbox, and identify potential exploits. It’s a significant step toward AI agents performing highly specialized, cognitively demanding jobs.

How it's different

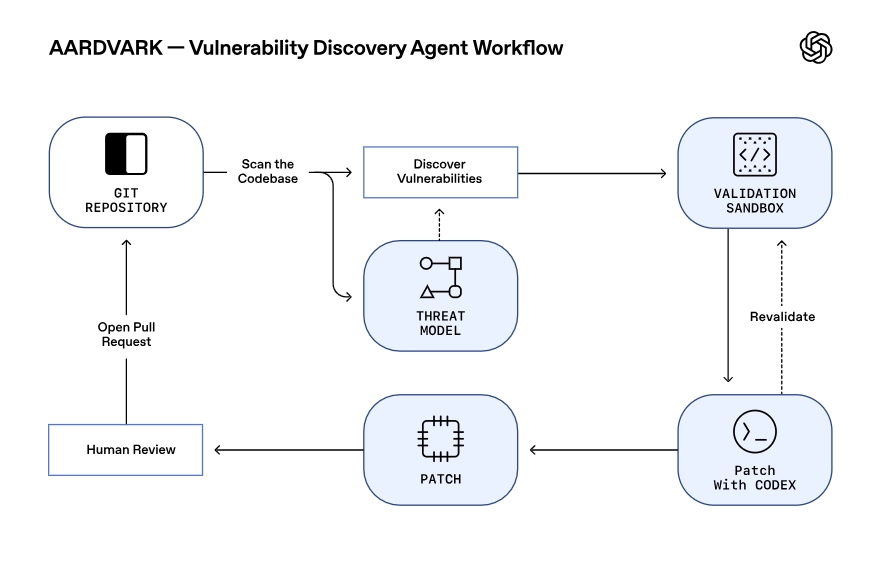

Aardvark’s workflow is designed to mimic a human researcher’s process. It starts by building a threat model of an entire code repository to understand its security design. From there, it scans new commits, comparing changes against the broader context of the codebase to spot potential issues.

Crucially, when Aardvark finds a potential bug, it doesn’t just flag it. It attempts to trigger the vulnerability in an isolated sandbox environment to confirm it’s a real, exploitable threat, a step meant to reduce the false positives that plague developer teams. For fixes, it integrates with OpenAI Codex to generate a patch, which is then attached to the finding for a human developer to review and approve.
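To make that flow concrete, here is a minimal Python sketch of the stages described above: scan each commit against a threat model, try to reproduce each candidate issue in a sandbox, and attach a proposed patch only to confirmed findings. This is an illustration, not OpenAI's implementation. Every name in it (`Finding`, `run_pipeline`, and the `toy_*` helpers) is hypothetical, and simple string matching stands in for the LLM reasoning, the sandboxed exploit attempt, and the Codex-generated patch.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Optional, Tuple


@dataclass
class Finding:
    commit_id: str
    description: str
    validated: bool = False               # set after the sandbox reproduction step
    proposed_patch: Optional[str] = None  # suggestion only, never auto-applied
    approved: bool = False                # only a human reviewer should flip this


def run_pipeline(
    threat_model: Dict[str, List[str]],
    commits: List[Tuple[str, str]],
    scan: Callable[[Dict[str, List[str]], str, str], List[str]],
    reproduce_in_sandbox: Callable[[Finding], bool],
    propose_patch: Callable[[Finding], str],
) -> List[Finding]:
    """Scan commits, keep only findings confirmed in the sandbox, attach patches."""
    reported: List[Finding] = []
    for commit_id, diff in commits:
        for description in scan(threat_model, commit_id, diff):
            finding = Finding(commit_id, description)
            finding.validated = reproduce_in_sandbox(finding)
            if not finding.validated:
                continue  # unconfirmed candidates are dropped to limit false positives
            finding.proposed_patch = propose_patch(finding)
            reported.append(finding)
    return reported


# --- Hypothetical stand-ins for the model-driven stages (assumptions) ---

def toy_scan(threat_model, commit_id, diff):
    # Stand-in for LLM reasoning: flag risky calls named in the threat model.
    return [f"use of {call}" for call in threat_model["risky_calls"] if call in diff]


def toy_sandbox(finding):
    # Stand-in for an exploit attempt: pretend only strcpy issues reproduce.
    return "strcpy" in finding.description


def toy_patch(finding):
    # Stand-in for a generated patch attached to the finding.
    return f"# suggested fix for: {finding.description}"


threat_model = {"risky_calls": ["strcpy", "eval"]}
commits = [
    ("c1", "strcpy(dst, src);"),
    ("c2", "eval(user_input)"),
    ("c3", "return 0;"),
]

findings = run_pipeline(threat_model, commits, toy_scan, toy_sandbox, toy_patch)
for f in findings:
    print(f.commit_id, f.description, f.proposed_patch, f.approved)
```

The design point the sketch tries to capture is the ordering: validation sits between detection and reporting, so a candidate that cannot be reproduced never reaches a developer, and the `approved` flag stays false until a person reviews the patch.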

The goal is to create a security partner that integrates directly into existing developer workflows on platforms like GitHub, catching vulnerabilities early without slowing down development. OpenAI claims that in its own testing and with alpha partners, Aardvark has already surfaced "meaningful vulnerabilities" and discovered complex bugs.

The company is backing its claims with some impressive, albeit internal, metrics. In benchmark tests on curated repositories, Aardvark reportedly identified 92% of known and synthetically introduced vulnerabilities. It has also been used to find and responsibly disclose numerous vulnerabilities in open-source projects, with ten receiving official CVE identifiers.

With more than 40,000 CVEs reported in 2024 alone, the problem Aardvark is trying to solve is massive. By launching a private beta and offering pro bono scanning for some open-source projects, OpenAI is positioning Aardvark as a powerful new tool for defenders in the constant cat-and-mouse game of cybersecurity.