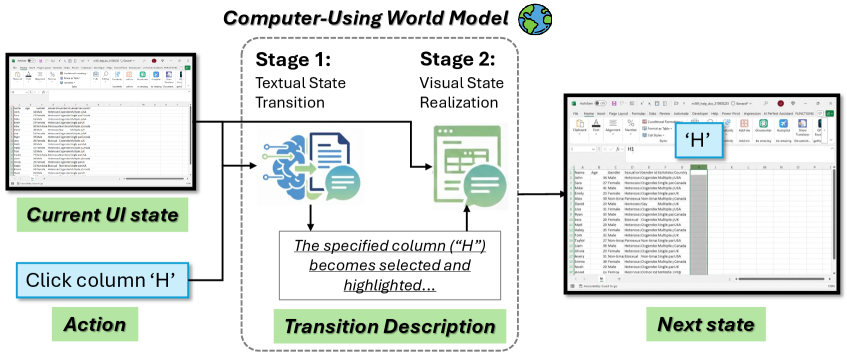

Navigating complex software environments is a hurdle for AI agents. A single wrong click can derail hours of work. Microsoft researchers have introduced a new AI system, the Computer-Using World Model (CUWM), designed to tackle this challenge.

Predicting the digital future

CUWM acts like a predictive simulator for desktop applications. It forecasts the next user interface (UI) state based on the current screen and a proposed action. This allows AI agents to 'test' actions in a simulated environment before committing to them in real software.
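To make the "test before committing" idea concrete, here is a minimal Python sketch of the loop described above. It is not CUWM itself: the `UIState` and `Action` types, the rule-based `toy_world_model`, and the `choose_action` helper are all hypothetical names invented for illustration, and a real world model would be a learned predictor over screenshots rather than a few hand-written rules.

```python
from dataclasses import dataclass
from typing import Callable, Iterable


@dataclass(frozen=True)
class UIState:
    """Toy stand-in for a screen: the set of visible element names."""
    visible: frozenset


@dataclass(frozen=True)
class Action:
    kind: str    # e.g. "click"
    target: str  # name of the UI element acted on


def toy_world_model(state: UIState, action: Action) -> UIState:
    """Hypothetical predictor: maps (current state, action) to a next state.

    Hand-written rules stand in for a learned model (assumption).
    """
    if action.kind == "click" and action.target in state.visible:
        if action.target == "File":
            # Opening the File menu reveals a Save entry.
            return UIState(state.visible | {"Save"})
        if action.target == "Exit":
            # Exiting closes everything on screen.
            return UIState(frozenset())
    # Unknown or impossible actions leave the screen unchanged.
    return state


def choose_action(
    state: UIState,
    candidates: Iterable[Action],
    predict: Callable[[UIState, Action], UIState],
    score: Callable[[UIState], float],
) -> Action:
    """Simulate each candidate and return the one with the best predicted outcome."""
    return max(candidates, key=lambda a: score(predict(state, a)))


# Usage: the agent wants "Save" to become visible and must avoid exiting.
start = UIState(frozenset({"File", "Exit"}))
candidates = [Action("click", "Exit"), Action("click", "File")]
goal_score = lambda s: 1.0 if "Save" in s.visible else 0.0

best = choose_action(start, candidates, toy_world_model, goal_score)
print(best)  # only this action would then be executed in the real application
```

The point of the design is that the risky step, clicking "Exit", is only ever evaluated inside the simulator; the real software sees just the action whose predicted outcome scored best.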