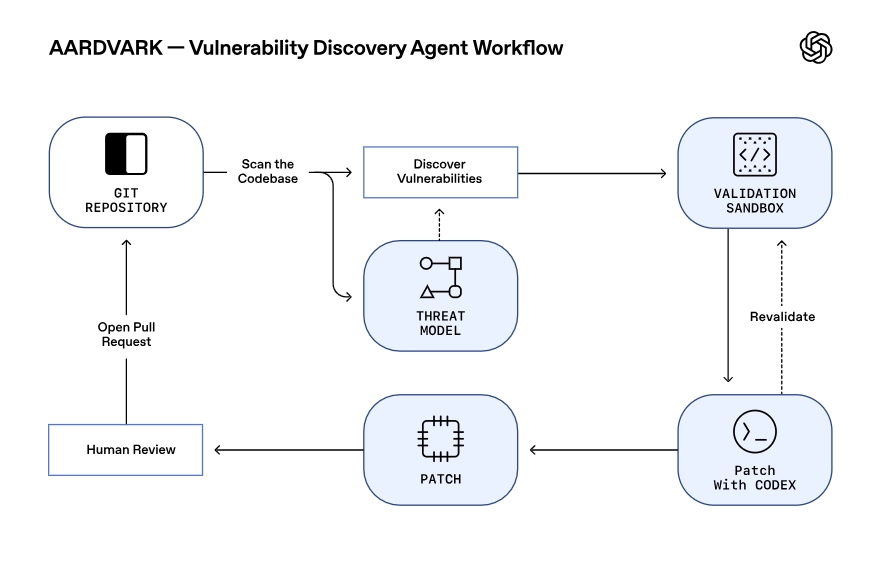

OpenAI just dropped a major clue about its next-generation model, GPT-5, and it’s powering an autonomous security agent named Aardvark. Now in private beta, OpenAI Aardvark is pitched as an "agentic security researcher" designed to continuously hunt, validate, and even help patch vulnerabilities in software codebases.

According to OpenAI's announcement, Aardvark operates less like a traditional tool and more like a human security researcher. Instead of relying on traditional techniques like fuzzing, it uses LLM-powered reasoning to read and understand code, analyze its behavior, write and run tests in a sandbox, and identify potential exploits. It’s a significant step toward AI agents performing highly specialized, cognitively demanding jobs.