Regulatory affairs is one of the most painful jobs in biotech. You spend months — sometimes years — sifting through FDA guidance documents, EMA opinions, prior approval precedents, and clinical review letters, trying to reverse-engineer what a regulator will think before they think it. Get it wrong and your drug program gets delayed or killed. Get it right and you just saved your company a billion dollars and a few years of human life.

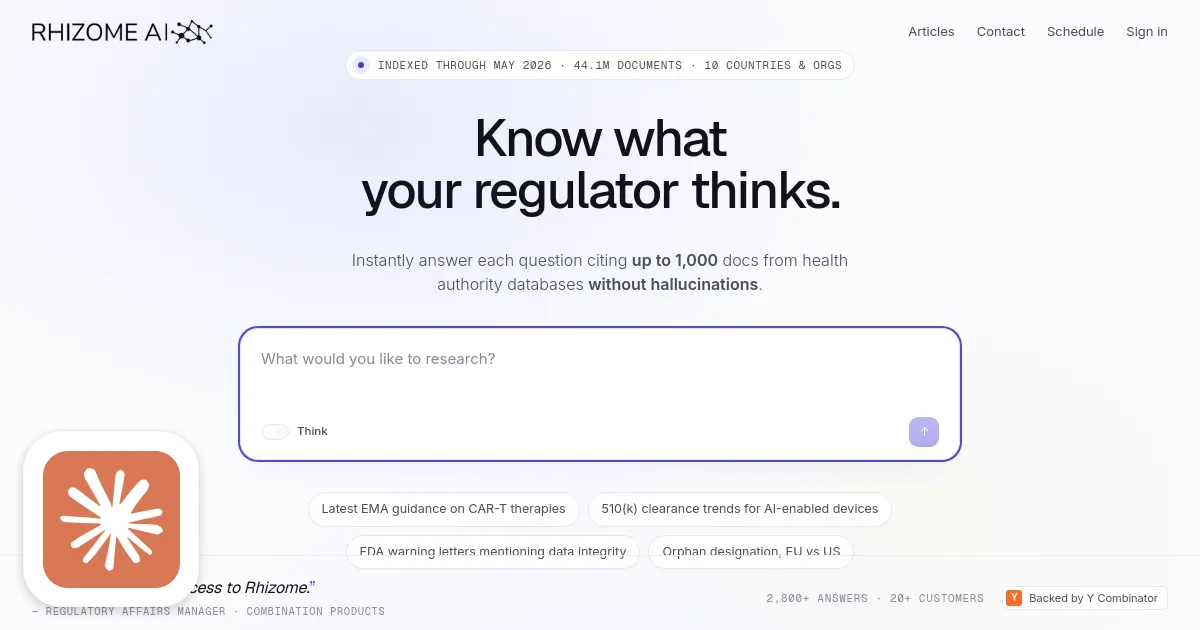

That's the market Rhizome AI is walking into with a deceptively simple pitch: always know what the FDA thinks. Under the hood it's a RAG system built on a 2.5TB proprietary corpus of regulatory intelligence spanning 44 million documents across 43 datasets and 10 countries. But calling it "just RAG" is like calling a Bloomberg Terminal "just a website." The data curation and hallucination-free output are the product, not the inference layer.

For once, the AI hype might actually be appropriate.

What They Build

Rhizome is a regulatory intelligence platform. You ask a question — "What clinical endpoints has the FDA required for similar rare disease programs?" or "How has the EMA evaluated HER2-targeting antibody-drug conjugates?" — and within minutes you get a structured answer backed by citations to the exact pages of the exact documents that support every claim.

That last part is the whole game. Regulatory professionals can't use tools that hallucinate. A wrong precedent citation in an FDA submission doesn't just embarrass you; it can trigger a complete response letter and set your program back 18 months. Rhizome reports zero hallucinations in production, which it attributes to a combination of fine-tuning and inference-time verification. The system also reads up to 1,000 documents per query, rather than the handful most general-purpose RAG pipelines process.
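Rhizome hasn't published how its inference-time verification works, so what follows is only a minimal sketch of the general idea: before an answer is shown, check every claim against the exact page it cites and drop anything that doesn't hold up. The `Claim` fields, the `corpus` layout, and the exact-substring match are all assumptions made for illustration, not Rhizome's method.

```python
from dataclasses import dataclass


@dataclass
class Claim:
    text: str    # sentence in the generated answer
    doc_id: str  # cited document (hypothetical identifier)
    page: int    # cited page number, 1-indexed
    quote: str   # passage the claim says supports it


def normalize(s: str) -> str:
    """Lowercase and collapse whitespace so line breaks don't defeat matching."""
    return " ".join(s.lower().split())


def verify_claims(claims, corpus):
    """Split claims into (supported, rejected).

    `corpus` maps doc_id -> list of page texts (an assumed layout).
    A claim counts as supported only if its quote is non-empty and appears,
    after normalization, on the page it cites.
    """
    supported, rejected = [], []
    for claim in claims:
        pages = corpus.get(claim.doc_id, [])
        page_exists = 1 <= claim.page <= len(pages)
        quote = normalize(claim.quote)
        if page_exists and quote and quote in normalize(pages[claim.page - 1]):
            supported.append(claim)
        else:
            rejected.append(claim)
    return supported, rejected


# Toy data, invented for this example.
corpus = {
    "review-001": [
        "Cover page.",
        "The primary endpoint was change in\nsix-minute walk distance at week 48.",
    ]
}
claims = [
    Claim("The endpoint was walk distance.", "review-001", 2,
          "primary endpoint was change in six-minute walk distance"),
    Claim("The endpoint was overall survival.", "review-001", 2,
          "primary endpoint was overall survival"),
    Claim("Cites a page that does not exist.", "review-001", 9, "anything"),
]
supported, rejected = verify_claims(claims, corpus)
print(len(supported), "supported,", len(rejected), "rejected")
```

A real system would need fuzzier matching than a substring test, since paraphrase and OCR noise are common in regulatory PDFs. The shape is the point: every claim carries a document, a page, and a quote, and nothing unverified reaches the user.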

The target customer is a regulatory affairs manager, director, or VP at a clinical-stage biotech, mid-size pharma, or medtech company — or the consultant advising them. These people currently pay junior analysts and junior lawyers to do research that takes days. Rhizome compresses it to minutes.

Pricing runs from $400/month for project-based access up to $30,000/year for a five-seat business plan with monthly office hours, with custom enterprise tiers above that. The model is straightforward SaaS with seats; the stickiness comes from the corpus, not the interface.