Every AI company is bleeding tokens, and the cost is not a metaphor. Context windows are the new compute budget, and most teams have no idea how fast they're burning through them. A RAG pipeline that retrieves 20 documents at around 1,500 tokens apiece? That's 30,000 tokens. An agentic loop with tool calls, prior conversation, and system prompt? You can easily be at 50,000 before the model has said a word. The bill arrives at the end of the month and finance emails you asking what "Anthropic API" is.

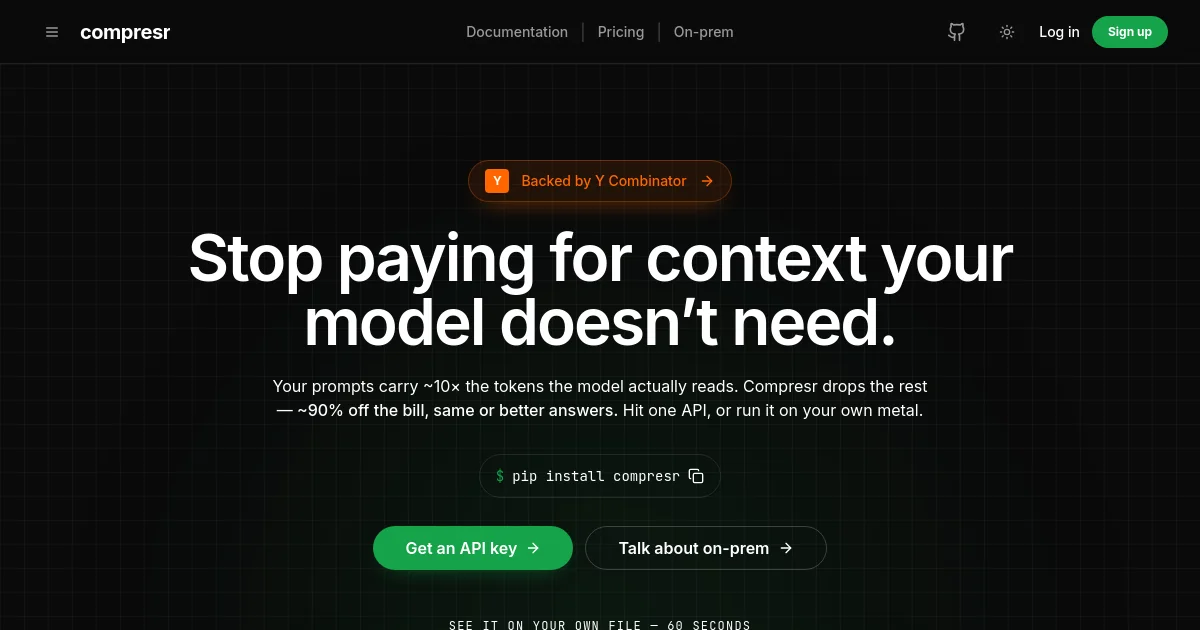

Compresr (YC W2026) thinks it has the fix. Four EPFL researchers — including a CEO who wrote his PhD specifically on LLM context compression — built an API that compresses what goes into the context window without losing what actually matters. The pitch is clean: same answers, fewer tokens, lower latency, smaller bills. Drop in their SDK or stand up their open-source proxy, and the rest just works.

This is either a clever infrastructure wedge that grows into essential AI plumbing, or a feature that Anthropic ships in a Tuesday release and vaporizes the company. Let's figure out which.

What They Build

Compresr offers two products. The first is a compression API: you send a query plus the context you were going to inject, and it returns a compressed version that preserves the tokens semantically relevant to that specific query. It's query-conditioned extraction, not dumb truncation. The second is Context Gateway, an open-source proxy (Go + TypeScript, 595 GitHub stars at the time of writing) that sits between your coding agent and the LLM API. It intercepts outbound context, compresses it on the fly, and forwards the leaner payload. For Claude Code or Cursor users, setup is a config file change and a Docker container.
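To make "query-conditioned extraction, not dumb truncation" concrete, here's a toy sketch. It is not Compresr's method (their models are not public in this article); it swaps in plain word overlap as the relevance score, and every name in it (`words`, `truncate`, `compress`, `CHUNKS`) is hypothetical. The point is only the shape of the idea: the same context compresses differently depending on the query.

```python
import re

def words(text: str) -> set[str]:
    """Lowercased word set; a crude stand-in for real tokenization."""
    return set(re.findall(r"[a-z0-9]+", text.lower()))

def truncate(chunks: list[str], budget: int) -> list[str]:
    """Baseline: keep chunks from the front until the word budget runs out."""
    kept, used = [], 0
    for chunk in chunks:
        size = len(chunk.split())
        if used + size > budget:
            break
        kept.append(chunk)
        used += size
    return kept

def compress(query: str, chunks: list[str], budget: int) -> list[str]:
    """Toy query-conditioned extraction: keep the chunks that share the
    most words with the query, as long as they fit the word budget."""
    query_words = words(query)
    scores = [len(query_words & words(c)) for c in chunks]
    # Highest overlap first; sorted() is stable, so ties keep document order.
    ranked = sorted(range(len(chunks)), key=lambda i: -scores[i])
    kept, used = [], 0
    for i in ranked:
        size = len(chunks[i].split())
        if scores[i] > 0 and used + size <= budget:
            kept.append(i)
            used += size
    # Emit survivors in their original order so the context still reads naturally.
    return [chunks[i] for i in sorted(kept)]

# Made-up context chunks, purely for illustration.
CHUNKS = [
    "The billing service retries failed charges three times.",
    "Logs are rotated nightly and kept for thirty days.",
    "The auth service issues tokens that expire after one hour.",
]

if __name__ == "__main__":
    print(truncate(CHUNKS, 10))
    print(compress("How long are logs kept?", CHUNKS, 10))
    print(compress("When do auth tokens expire?", CHUNKS, 10))
```

Truncation keeps whatever happens to come first, whether or not it answers the question. The query-conditioned version keeps a different chunk for each query from the same input. A production system would presumably replace the overlap score with a learned relevance model and operate on tokens rather than whole sentences.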

The target customer is any team running high-token workloads: RAG pipelines ingesting large document sets, agentic systems accumulating long tool-call histories, coding assistants working across large codebases. In practice, that's almost every serious AI application today.

The business model is usage-based: you pay per compression call, presumably at a price that undercuts the token savings you get on the downstream LLM call. There's no published pricing yet, which is typical for an early-stage YC company, but the unit economics make structural sense. If they charged, say, $0.001 per compression and saved you $0.01 in GPT-4o tokens, you'd come out $0.009 ahead on every call and be happy.
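The arithmetic behind that hypothetical is worth spelling out. This is a back-of-the-envelope sketch, not real pricing: the $0.001 fee is the illustrative figure from above, and the $2.50 per million input tokens and the 50% keep ratio are assumptions picked so the numbers land on the same $0.01 saving. The function names are made up for this example.

```python
def net_saving_per_call(tokens_in: int, keep_ratio: float,
                        llm_price_per_mtok: float, fee_per_call: float) -> float:
    """Dollars saved on one LLM call after paying the compression fee.

    keep_ratio is the fraction of input tokens that survive compression.
    llm_price_per_mtok is the downstream model's input price per 1M tokens.
    """
    tokens_saved = tokens_in * (1 - keep_ratio)
    llm_saving = tokens_saved * llm_price_per_mtok / 1_000_000
    return llm_saving - fee_per_call

def break_even_tokens(keep_ratio: float, llm_price_per_mtok: float,
                      fee_per_call: float) -> float:
    """Input size (in tokens) at which the fee exactly pays for itself."""
    saving_per_input_token = (1 - keep_ratio) * llm_price_per_mtok / 1_000_000
    return fee_per_call / saving_per_input_token

if __name__ == "__main__":
    # All four numbers are illustrative assumptions, not published prices.
    print(net_saving_per_call(8_000, 0.5, 2.50, 0.001))
    print(break_even_tokens(0.5, 2.50, 0.001))
```

Under these assumptions, an 8,000-token context halved by compression saves about $0.01 downstream and nets roughly $0.009 after the fee, and the fee stops paying for itself somewhere below about 800 input tokens. The takeaway is that a flat per-call fee only makes sense for large contexts, which is exactly the workload Compresr is targeting.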

How It Actually Works

Context compression sounds simple: delete the irrelevant stuff. The hard part is knowing what's irrelevant, and the answer changes depending on what you're trying to do.