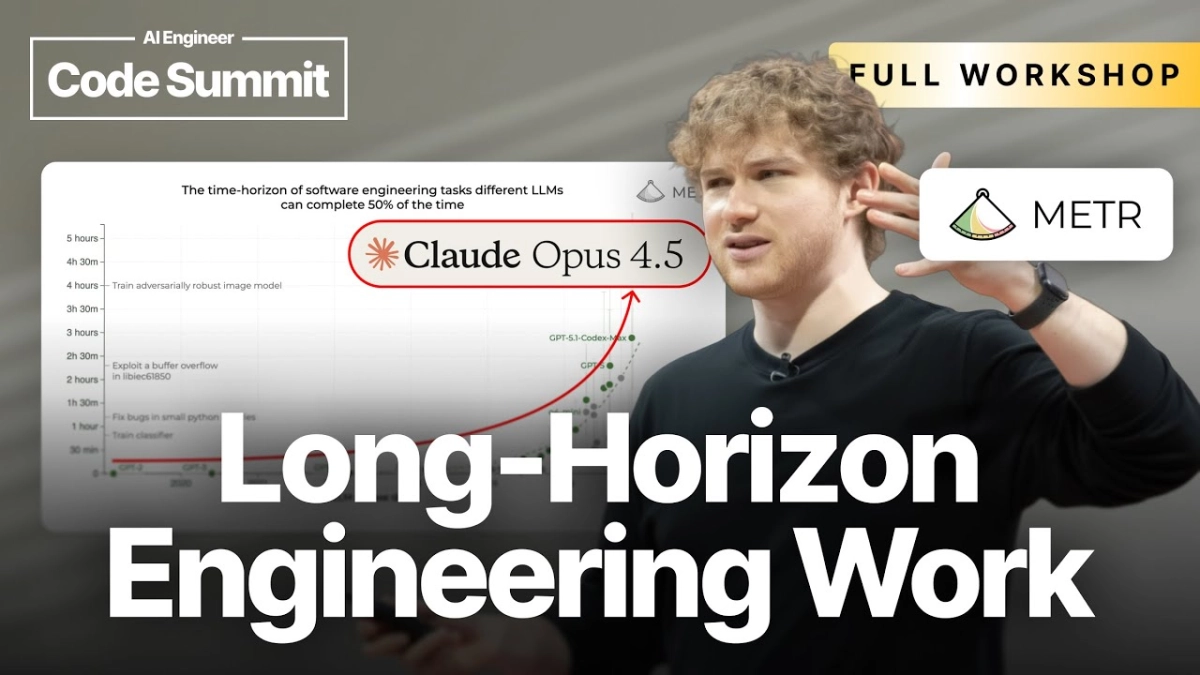

The gulf between laboratory benchmarks and real-world developer productivity is widening, and Joel Becker of METR is sounding the alarm on what this discrepancy means for the future of AI deployment. In an interview, likely recorded at a recent industry event, Becker discussed a surprising divergence: AI models excel on standardized tests like SWE-bench, yet fail to substantially accelerate experienced software engineers in field studies. The central question is why impressive benchmark performance does not translate into tangible gains for developers tackling complex, long-horizon tasks.

Becker pointed out that while AI models are clearly getting better at solving discrete coding problems, professional software engineering operates under a different set of constraints and requirements. One of the primary disconnects, he said, is reliability. Benchmarks often test only whether an answer is correct, whereas production environments demand near-perfect reliability over extended periods. He noted, "If you have a task that takes a week, and the model gets it 90% right, that 10% failure rate means you’re still doing all the work, maybe more, because debugging the AI’s mistakes can be harder than writing it yourself." For high-stakes work, this reliability threshold puts an immediate ceiling on how much AI can speed things up in practice.
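To make the reliability point concrete, here is a minimal back-of-the-envelope sketch. It is not from Becker's remarks. It assumes, purely for illustration, that a long task breaks into independent steps and that the model gets each step right with the same probability. The function name and the step counts are hypothetical.

```python
def chance_all_steps_succeed(per_step_reliability: float, steps: int) -> float:
    """Probability that every step succeeds, assuming steps are independent.

    Illustrative assumption only: real engineering tasks have correlated
    failures and partial credit, so treat this as a rough intuition pump.
    """
    if not 0.0 <= per_step_reliability <= 1.0:
        raise ValueError("per_step_reliability must be between 0 and 1")
    if steps < 0:
        raise ValueError("steps must be non-negative")
    return per_step_reliability ** steps


if __name__ == "__main__":
    # Hypothetical task lengths, all at the 90% figure from the quote.
    for steps in (1, 5, 10, 20):
        chance = chance_all_steps_succeed(0.9, steps)
        print(f"{steps:>2} steps at 90% each: {chance:.1%} chance of a clean run")
```

Under these assumptions, a reliability figure that looks strong on a single discrete problem decays quickly as the task gets longer, which is one way to read the gap between benchmark scores and week-long work.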