Arcee Trinity Large Breaks Cover

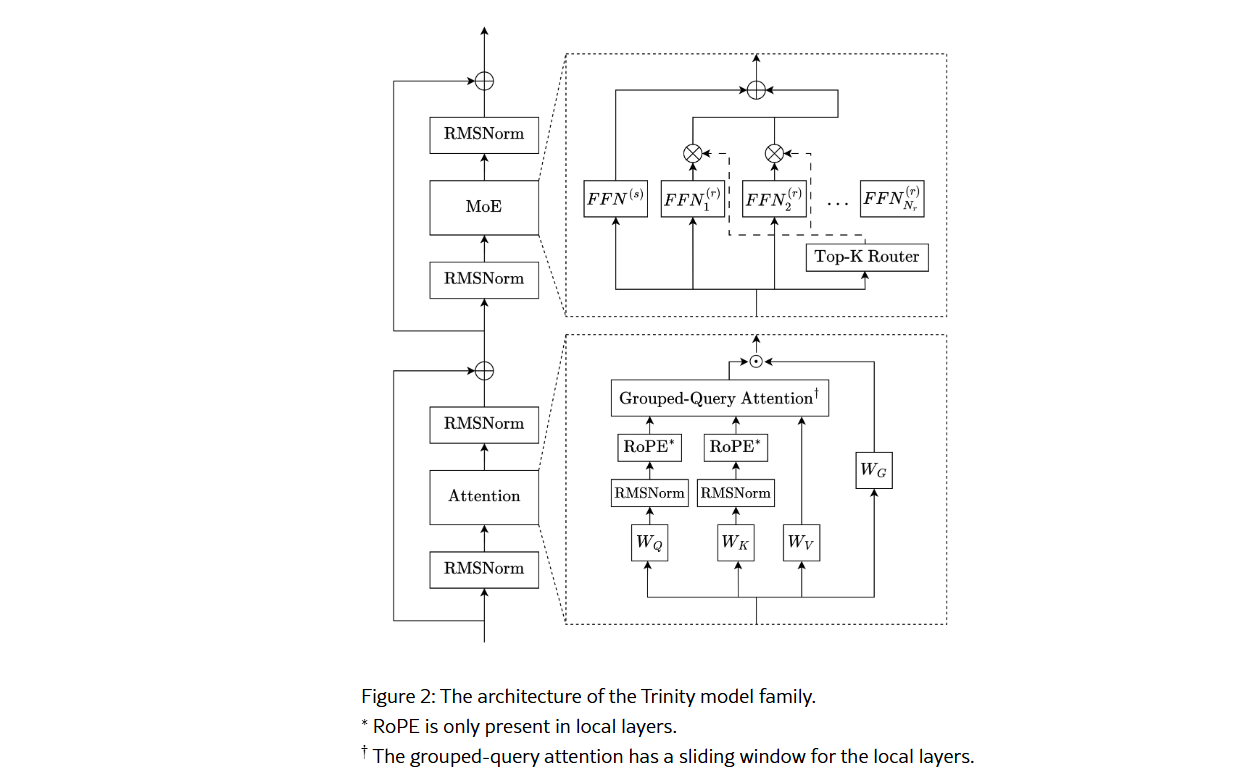

Arcee.ai unveils Trinity Large, a 400B-parameter Mixture-of-Experts model engineered for inference efficiency and enterprise long-context use, alongside smaller variants.

Feb 22 at 9:20 PM · 2 min read

Key Takeaways

1. Arcee's Trinity Large is a 400B-parameter open-weight MoE model designed for efficient enterprise deployment.

2. It features innovations like Soft-clamped Momentum Expert Bias Updates (SMEBU) and a custom multilingual tokenizer.

3. The model was pre-trained on 17 trillion tokens, including 8 trillion tokens of DatologyAI-curated synthetic data.