# AI Security

25 articles with this tag

AI Agents Lack Identity, Risking Enterprise Trust

Enterprises are struggling with the AI agent identity problem, a critical gap in governance and accountability that hinders trust and adoption.

AI Agents: Beyond Chatbots with Open Source

Cedric Clyburn from Red Hat explores the evolution from chatbots to AI agents, detailing the architecture of OpenCopilot and its security implications.

Agentic AI Needs Smarter Guardrails

LangGuard's agentic workflow governance engine, powered by Databricks Lakebase, provides critical runtime control for enterprise AI deployments.

Anthropic's Mythos AI Accessed by Unauthorized Users

Unauthorized users gained access to Anthropic's powerful Mythos AI model, raising security concerns.

Cloudflare Builds the Agentic Cloud

Cloudflare unveils its 'agentic cloud' vision with new tools for building and scaling AI agents, addressing compute, security, and infrastructure needs.

OpenAI Opens AI Cyber Tools to Select Defenders

OpenAI launches 'Trusted Access for Cyber' to equip defenders with advanced AI, prioritizing controlled access and broad ecosystem support.

Databricks Tames Agentic AI

Databricks enhances its AI Gateway to provide unified governance, visibility, and guardrails for complex agentic AI workflows.

ClawGuard Secures LLM Agents

ClawGuard offers a deterministic runtime security framework to prevent indirect prompt injection in LLM agents by enforcing user-confirmed rules at tool-call boundaries.

GitHub's New Game Tests AI Agent Security

GitHub's new Secure Code Game Season 4 challenges developers to hack an AI agent, simulating real-world security risks.

Cloudflare's MCP Security Playbook

Cloudflare outlines its security architecture for enterprise-wide adoption of the Model Context Protocol (MCP), integrating its SASE and developer platforms.

Cloudflare Bolsters Sandbox Security

Cloudflare's new outbound Workers feature provides enhanced security and control for sandboxed AI applications, enabling dynamic authentication and Zero Trust principles.

Secure Agentic AI: Key Takeaways for MCP Servers

Tun Shwe and Jeremy Frenay of Lenses break down the security challenges of MCP servers for agentic AI and offer five key rules for secure design.

AI Hacker "Pliny the Liberator" Tests GPT-4 Security

AI security researcher "Pliny the Liberator" demonstrates a novel jailbreaking technique using "tokenades" to manipulate AI models, showcasing the ongoing challenges in AI security.

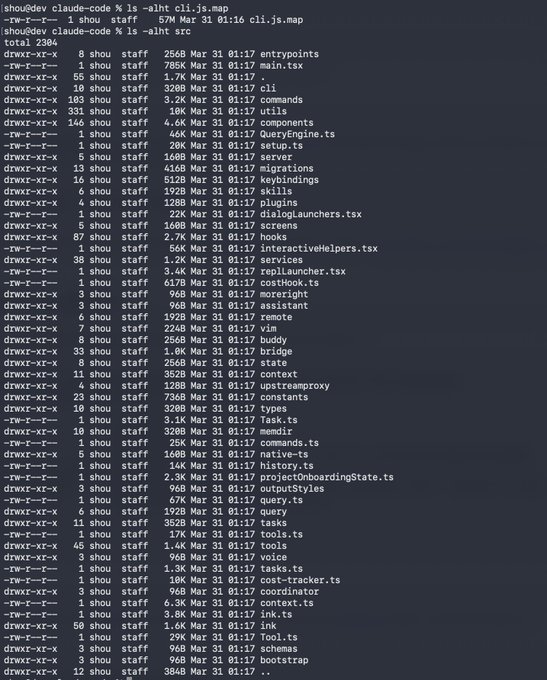

Anthropic's Claude Code Leak Sparks Backlash

Anthropic's Claude Code agent source code was accidentally leaked via an npm package, exposing internal workings and unreleased features.

IBM Expert Details Top 10 Agent Security Risks

IBM Distinguished Engineer Jeff Crume breaks down the OWASP Top 10 vulnerabilities for AI agents, including goal hijacking, tool misuse, and rogue agent behavior.

Databricks Tackles Agentic AI Risks

Databricks enhances its AI Security Framework with 35 new risks and 6 controls for autonomous agent deployment, focusing on memory, planning, and tool usage.

Snowflake Bolsters AI Governance

Snowflake enhances its AI governance capabilities by integrating Bedrock Data into its Horizon Catalog and Cortex AI, addressing critical data classification and control challenges.

Codex Security Ditches SAST Reports

OpenAI's Codex Security agent forgoes SAST reports and instead analyzes code behavior and intent to find deeper vulnerabilities.

IBM Experts Detail AI Agent Security Imperatives

IBM security leaders Bob Kalka and Tyler Lynch discuss critical security imperatives for AI agents, focusing on accountability, privilege management, and observability.

OpenAI Tackles AI Agent 'Prompt Injection'

OpenAI is adapting its AI security strategy to counter sophisticated prompt injection attacks, treating them as social engineering challenges.

Cloudflare Bolsters AI App Defenses

Cloudflare launches AI Security for Apps, offering threat detection and free endpoint discovery for AI applications, with new custom topic features and expanded partnerships.

OpenAI Buys Promptfoo

OpenAI is acquiring AI security platform Promptfoo to enhance the security, safety, and evaluation features within its Frontier platform for AI coworkers.

OpenAI Details Malicious AI Use in 2026

OpenAI's 2026 malicious AI report reveals how threat actors combine AI with traditional tools and multiple models, with the aim of helping industry and the public prevent such misuse.

Governing Agentic AI by 2026

As agentic AI trends accelerate towards 2026, robust governance frameworks encompassing identity, policy, and enforcement are crucial for safe and ethical autonomous AI deployment.

Veria Labs Raises $3.2M

Veria Labs, founded by top US hackers, raises $3.2M in seed funding for its AI platform that automates continuous offensive security testing.