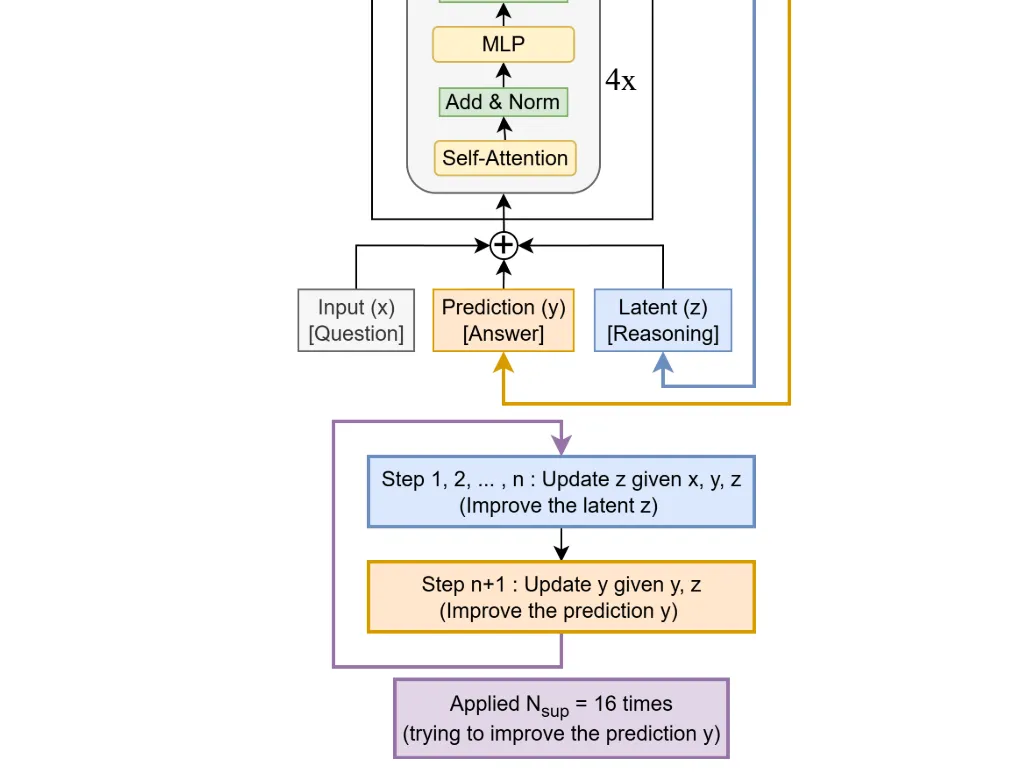

In the AI arms race, the mantra has long been "bigger is better." But a new paper from Samsung’s AI lab in Montreal challenges that notion with a system suggesting that less can be much, much more. The Tiny Recursive Model (TRM), introduced by researcher Alexia Jolicoeur-Martineau, is a shockingly small 7-million-parameter model that outperforms far larger Large Language Models (LLMs) on several demanding reasoning benchmarks.

While models like GPT-4 and Gemini are believed to have billions or even trillions of parameters, they often stumble on puzzles that demand strict, step-by-step logic, such as Sudoku or the abstract reasoning challenges in the ARC-AGI benchmark. A single wrong step in their "chain of thought" can derail the entire answer.