The promise of 4D world modeling—creating dynamic, editable digital twins of real-world scenes—has long been hampered by a fundamental bottleneck: data. Building high-fidelity 4D models typically requires specialized, multi-view camera setups or cumbersome, offline pre-processing stages, severely limiting the ability of these systems to generalize beyond curated lab environments.

A new paper from researchers at CASIA and CreateAI, titled NeoVerse: Enhancing 4D World Model with in-the-wild Monocular Videos, proposes a way around this bottleneck. NeoVerse is a versatile monocular 4D model designed to learn from the cheapest and most abundant data source available: in-the-wild monocular videos.

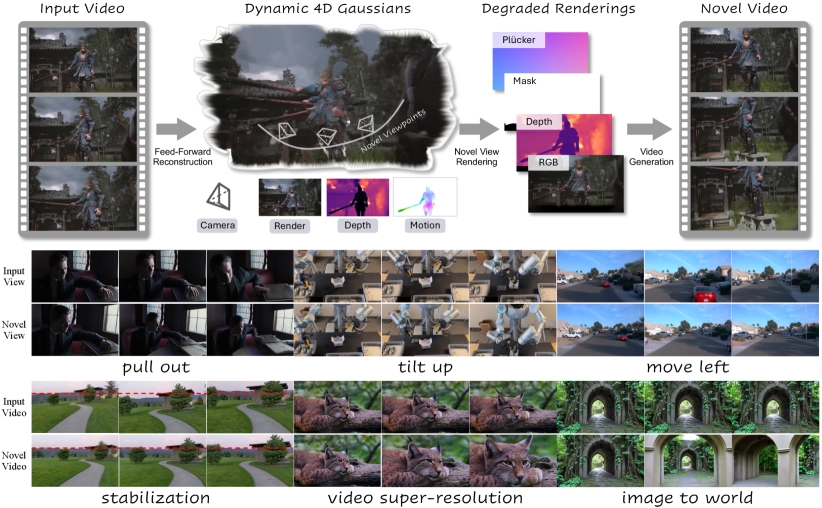

The core philosophy is scalability. Instead of relying on expensive multi-view data or heavy offline depth estimation, NeoVerse is built around a pose-free, feed-forward 4D Gaussian Splatting (4DGS) reconstruction model. This design allows the system to efficiently reconstruct dynamic scenes from sparse key frames in an online manner, making the entire pipeline scalable enough to train on over one million diverse video clips.
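To make "reconstructing dynamic scenes from sparse key frames" more concrete, here is a minimal Python sketch of two ingredients such a pipeline needs: choosing a sparse set of key frames from a clip, and a dynamic Gaussian primitive that can be queried at any timestamp. The article does not describe NeoVerse's exact parameterization, so the linear-velocity motion model, the Gaussian-shaped temporal opacity falloff, and the names `Gaussian4D` and `sample_key_frames` are illustrative assumptions, not the paper's implementation. The feed-forward network that would predict these parameters from the key frames is omitted entirely.

```python
import math
from dataclasses import dataclass


@dataclass
class Gaussian4D:
    """One dynamic Gaussian primitive (hypothetical parameterization).

    Assumption: the center moves linearly with a per-Gaussian velocity, and
    visibility fades as a Gaussian in time around t_center. Real 4DGS
    variants (including NeoVerse) may model motion differently.
    """
    mean: tuple[float, float, float]      # 3D center at time t_center
    velocity: tuple[float, float, float]  # displacement per unit time
    t_center: float                       # time of peak visibility
    t_scale: float                        # temporal standard deviation (> 0)
    opacity: float                        # peak opacity in [0, 1]

    def position_at(self, t: float) -> tuple[float, float, float]:
        # Linear motion: center shifted by velocity * (t - t_center).
        dt = t - self.t_center
        return tuple(m + v * dt for m, v in zip(self.mean, self.velocity))

    def opacity_at(self, t: float) -> float:
        # Opacity decays as a 1D Gaussian in time away from t_center.
        dt = t - self.t_center
        return self.opacity * math.exp(-0.5 * (dt / self.t_scale) ** 2)


def sample_key_frames(num_frames: int, num_keys: int) -> list[int]:
    """Pick num_keys evenly spaced frame indices (hypothetical helper)."""
    if num_keys <= 0 or num_frames <= 0:
        return []
    if num_keys >= num_frames:
        return list(range(num_frames))  # clip too short: use every frame
    if num_keys == 1:
        return [0]
    step = (num_frames - 1) / (num_keys - 1)  # step > 1, so indices stay distinct
    return [round(i * step) for i in range(num_keys)]


# Usage: 4 key frames from a 100-frame clip, and one moving Gaussian.
keys = sample_key_frames(100, 4)  # [0, 33, 66, 99]
g = Gaussian4D(mean=(0.0, 0.0, 0.0), velocity=(1.0, 0.0, 0.0),
               t_center=0.5, t_scale=0.25, opacity=0.8)
print(keys, g.position_at(1.5), g.opacity_at(0.5))
```

Because each primitive can be evaluated at an arbitrary time `t`, a scene reconstructed from a handful of key frames can also be rendered at the in-between timestamps; in a self-supervised setup, those non-key frames could then serve as rendering targets.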