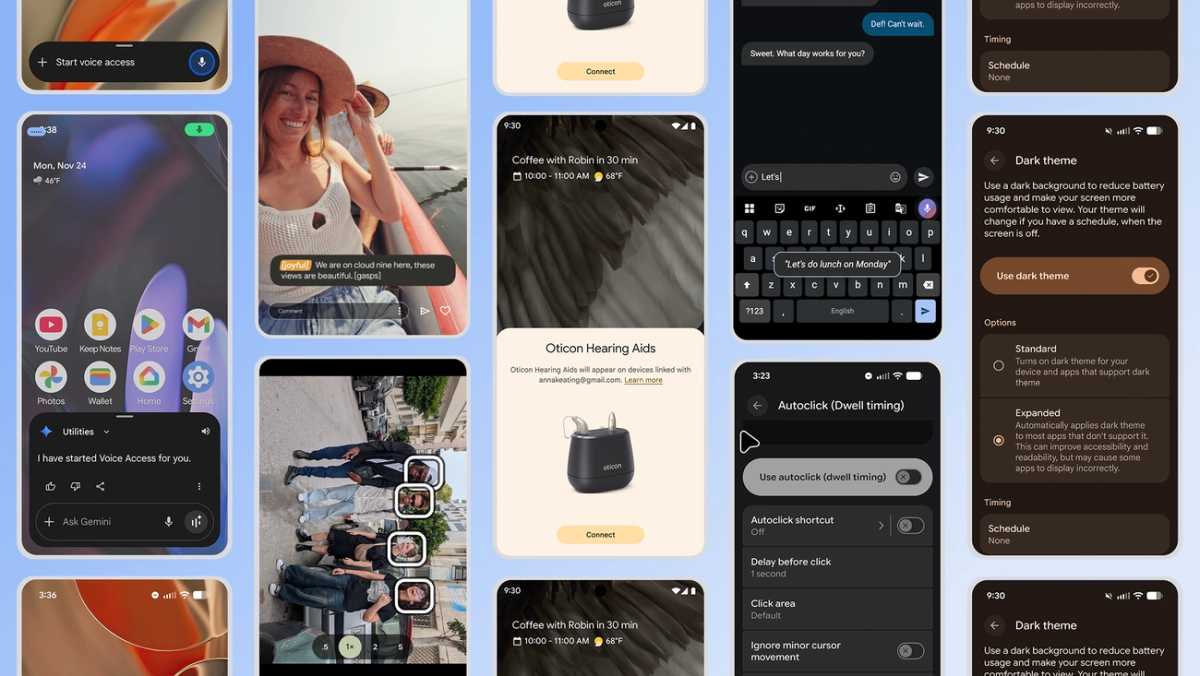

Google is rolling out Android accessibility enhancements in which its Gemini AI models play a pivotal role, according to the announcement. The updates, shared ahead of International Day of Persons with Disabilities, aim to make devices more intuitive and helpful for a wider range of users. The deep integration of Gemini signals a shift toward AI-driven inclusive design on the platform.

The most impactful Google Gemini accessibility integration appears in the Pixel camera's Guided Frame feature. The feature previously offered basic object detection; with Gemini, it now provides rich, contextual descriptions, turning a simple "face in frame" into detailed narration such as "One girl with a yellow T-shirt sits on the sofa and looks at the dog." This shift from mere identification to a fuller description of the scene is a meaningful advance for blind or low-vision users, offering greater independence and confidence in capturing moments. It underscores AI's potential to bridge sensory gaps by interpreting a scene rather than only detecting what is in it.