The quest for unprecedented speed in artificial intelligence has led Cerebras Systems to build what it proudly bills as the world's fastest AI infrastructure, recently unveiled in Oklahoma. The facility delivers an astonishing 44 ExaFLOPS of new compute power to customers. Yet the achievement is not merely about raw processing might. It represents a radical rethinking of semiconductor design, cooling, and power delivery that challenges decades of conventional wisdom in high-performance computing.

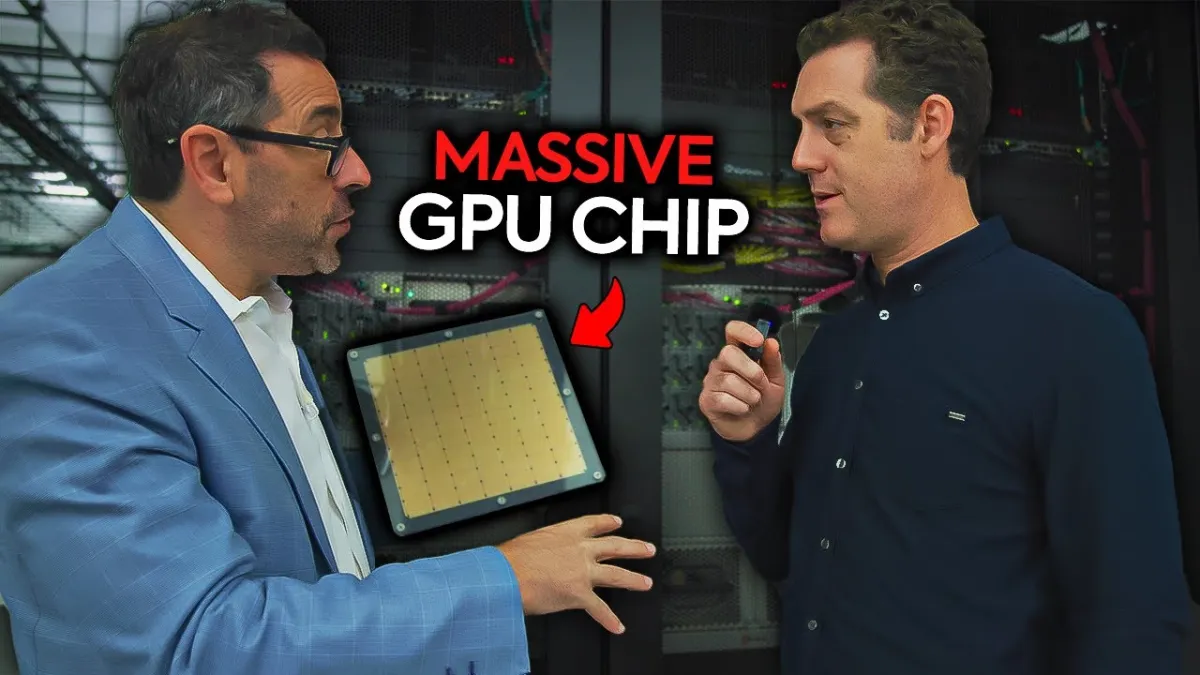

Matthew Berman of Forward Future recently toured this cutting-edge facility and spoke with Andrew Feldman, Co-Founder and CEO of Cerebras, and Billy Wooten, COO of Scale Data Centers, about the strategic decisions and technological breakthroughs that underpin this audacious endeavor. The conversation delved into everything from the surprising choice of location to the intricate engineering required to push the boundaries of AI acceleration.

Cerebras’ decision to build its flagship data center in Oklahoma City, rather than in a traditional tech hub, was a calculated one. Feldman cited a confluence of factors: "reasonable labor costs," ample room to "build and expand," and, crucially, "reasonably priced power." Beyond economics, the facility's design reflects its geography. Built with reinforced concrete and engineered for tornado resilience, it is designed to keep operating in a region prone to severe weather. As Feldman noted, that resilience even affects the "cost of insurance." This foresight in physical infrastructure underscores a holistic approach to reliability.