Cursor has unveiled a significant update to its AI agents: "Computers for Agents," which gives each agent its own cloud environment in which it can test code autonomously. The feature moves the developer workflow beyond code generation toward full-stack validation, and this autonomous testing capability could redefine how software is built and reviewed.

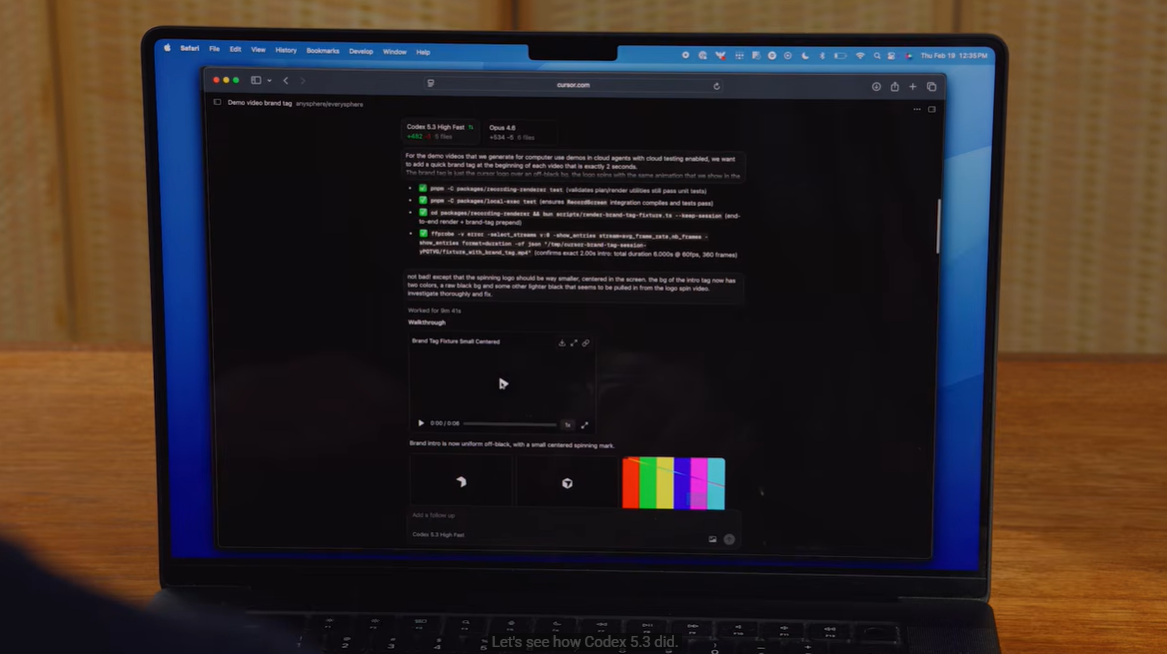

When assigned a coding task, a Cursor Agent no longer just writes code. It now spins up a dedicated cloud environment, deploys the new code there, and tests the changes autonomously. Crucially, the agent exercises the application by simulating human input such as mouse clicks and keystrokes, and the system records these interactions so reviewers can see the changes working.