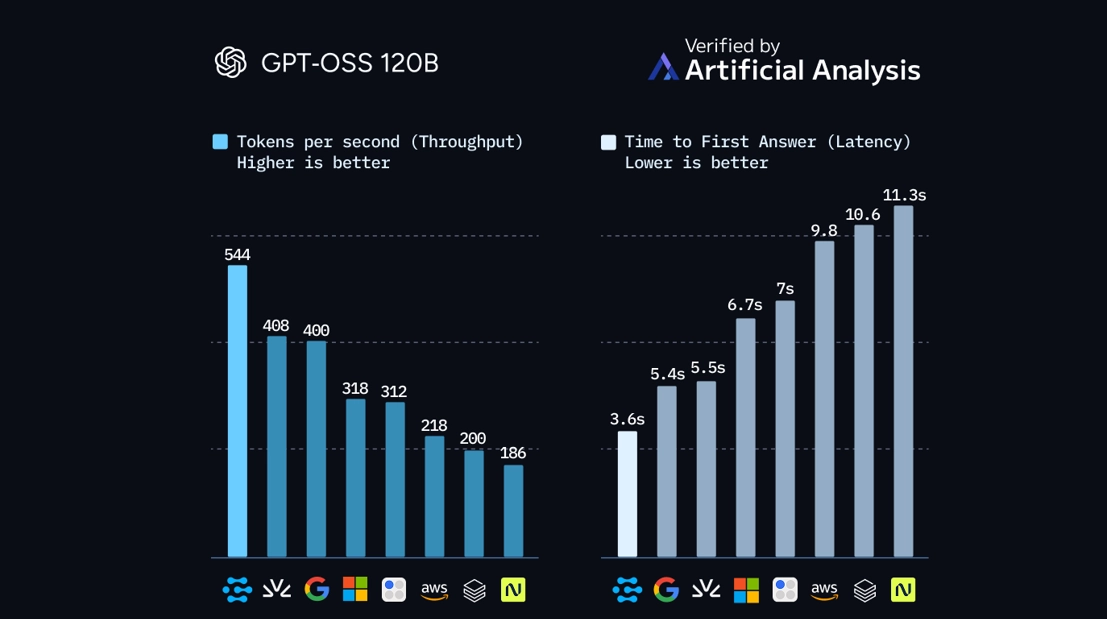

Clarifai’s latest benchmark on OpenAI’s GPT-OSS-120B model points to a quiet but important shift in AI infrastructure. Using its new Clarifai Reasoning Engine for agentic AI inference, the company recorded 544 tokens per second at a blended price of $0.16 per million tokens, as measured by independent firm Artificial Analysis. In the same benchmark, Clarifai outperformed all other providers tested, including vendors using custom inference silicon such as SambaNova and Groq.

The result doesn’t settle the GPU-versus-ASIC debate, but it does show that a well-optimized GPU stack can compete at the top end of large-model inference without custom hardware. For teams running agentic and reasoning-heavy workloads, it is a signal that software and serving architecture may now matter as much as chip choice.

Clarifai’s numbers build on earlier work. Its Compute Orchestration platform had previously delivered 313 tokens per second on GPT-OSS-120B with a time-to-first-token (TTFT) of about 0.27 seconds, priced at the same $0.16 per million tokens. By October, the Reasoning Engine had raised throughput by roughly 74 percent to 544 tokens per second, with TTFT increasing modestly to around 0.36 seconds. For interactive chat, that extra 90 milliseconds is noticeable but not critical; for multi-thousand-token reasoning traces, overall throughput dominates the user experience and cost equation.
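To make the TTFT-versus-throughput trade-off concrete, here is a minimal back-of-the-envelope sketch. It assumes a deliberately simplified latency model (response time equals TTFT plus output tokens divided by a constant throughput), which is not necessarily how Artificial Analysis measures or how real requests behave under load. The function and constant names are illustrative only; the figures are the ones quoted above.

```python
# Simplified model (an assumption, not the benchmark's methodology):
#   response_time = TTFT + output_tokens / tokens_per_second

def end_to_end_seconds(output_tokens: int, tokens_per_second: float,
                       ttft_seconds: float) -> float:
    """Time to first token plus steady-state generation time."""
    return ttft_seconds + output_tokens / tokens_per_second


def break_even_tokens(slow_tps: float, slow_ttft: float,
                      fast_tps: float, fast_ttft: float) -> float:
    """Output length at which both configurations take the same total time."""
    return (fast_ttft - slow_ttft) / (1 / slow_tps - 1 / fast_tps)


# Figures quoted in the article for GPT-OSS-120B.
EARLIER = {"tokens_per_second": 313.0, "ttft_seconds": 0.27}
REASONING_ENGINE = {"tokens_per_second": 544.0, "ttft_seconds": 0.36}

if __name__ == "__main__":
    for n in (50, 500, 5000):
        before = end_to_end_seconds(n, **EARLIER)
        after = end_to_end_seconds(n, **REASONING_ENGINE)
        print(f"{n:>5} tokens: earlier {before:.2f}s, Reasoning Engine {after:.2f}s")

    crossover = break_even_tokens(313.0, 0.27, 544.0, 0.36)
    print(f"break-even at about {crossover:.0f} output tokens")
```

Under this model the higher TTFT only costs time on very short replies: the break-even point is about 66 output tokens. For a 5,000-token reasoning trace, the estimate drops from roughly 16.2 seconds to roughly 9.6 seconds.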

The Reasoning Engine’s gains come from incremental, software-first work rather than a single feature. Clarifai uses adaptive kernel and scheduling strategies that adjust batching, memory reuse, and execution paths based on real workload patterns. Instead of relying on static configurations, the system learns from repeated request shapes common in agentic workflows: long chains of calls, similar prompt structures, and recurring tool patterns. Over time, these optimizations compound, particularly in environments where the same models and flows run at sustained volume.

A key detail is that Clarifai’s performance improvements do not appear to trade away accuracy. The company is still serving GPT-OSS-120B, not a pruned or heavily approximated variant. That matters for enterprises that want to push reasoning workloads into production without rewriting prompts or accepting degraded quality to hit performance targets.