The rapid solution of a famously difficult open mathematics problem, posed by the Hungarian mathematician Paul Erdős, offers a stark measure of the accelerating capabilities of artificial intelligence. Quant researcher Neel Somani recently announced a "Weekend win: The proof I submitted for Erdos Problem #397 was accepted by Terence Tao." The critical detail, however, was that the proof itself was generated by GPT 5.2 Pro, a large language model, and formalized using the verification tool Harmonic. The event, discussed by commentator Matthew Berman, highlights not just a single achievement but the implications of AI models crossing the threshold into automated scientific discovery, shifting the focus from mere optimization to genuine, self-directed intellectual progress.

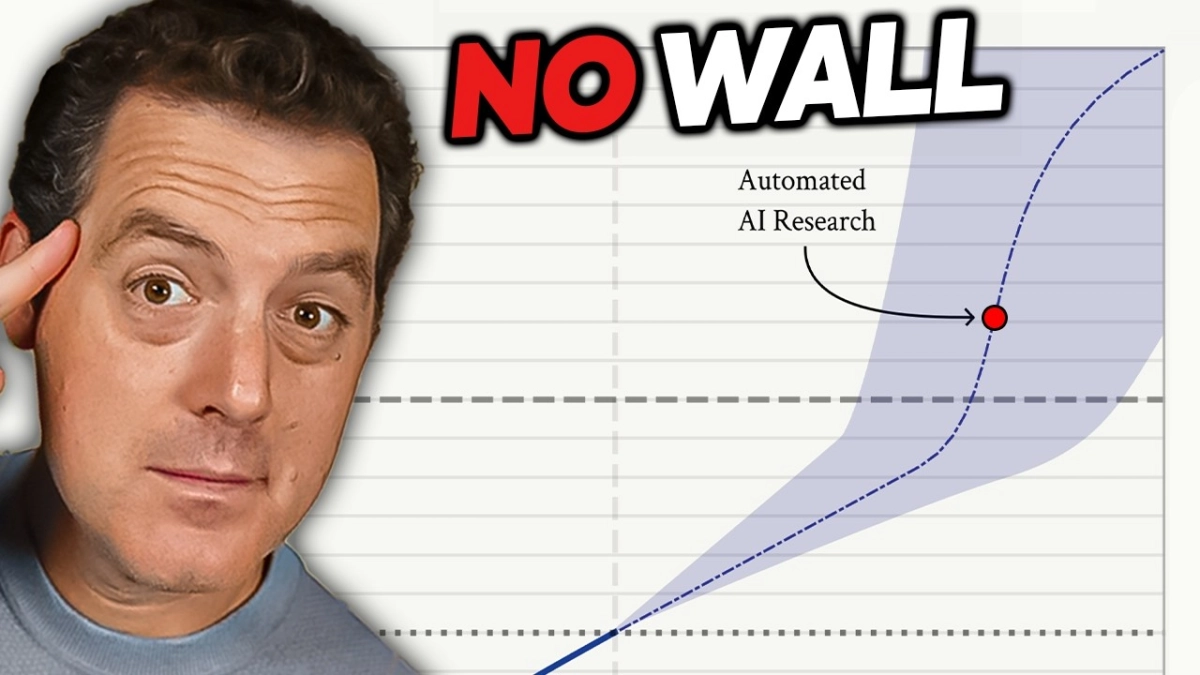

Berman’s commentary framed this breakthrough as a key indicator of the long-theorized "Intelligence Explosion," a scenario in which AI systems become capable of recursively improving themselves, leading to exponential gains in capability. The Erdős problem in question, one of hundreds of open problems in number theory and combinatorics, had resisted human efforts for decades. When asked how long it took the AI to find the solution, Somani replied, "Around 15 minutes." The brevity of this timeline, set against those decades of human effort, underscores the qualitative leap now occurring in algorithmic reasoning. This capability is fundamentally different from pattern recognition; it requires generating novel proofs and abstract mathematical constructions, a domain long considered exclusively human.