The gap between cutting-edge AI tools and the practical needs of data professionals has long been a source of frustration, particularly for those grappling with complex, unstructured data. Rohan Kodialam, Co-founder of Sphinx, described this divide in a recent interview, explaining how current AI solutions, while adept at tasks like copywriting or software engineering, often fall short for data scientists, quantitative researchers, and analysts. His company, Sphinx, has emerged from this gap, launching with significant funding to redefine how machine intelligence collaborates with data.

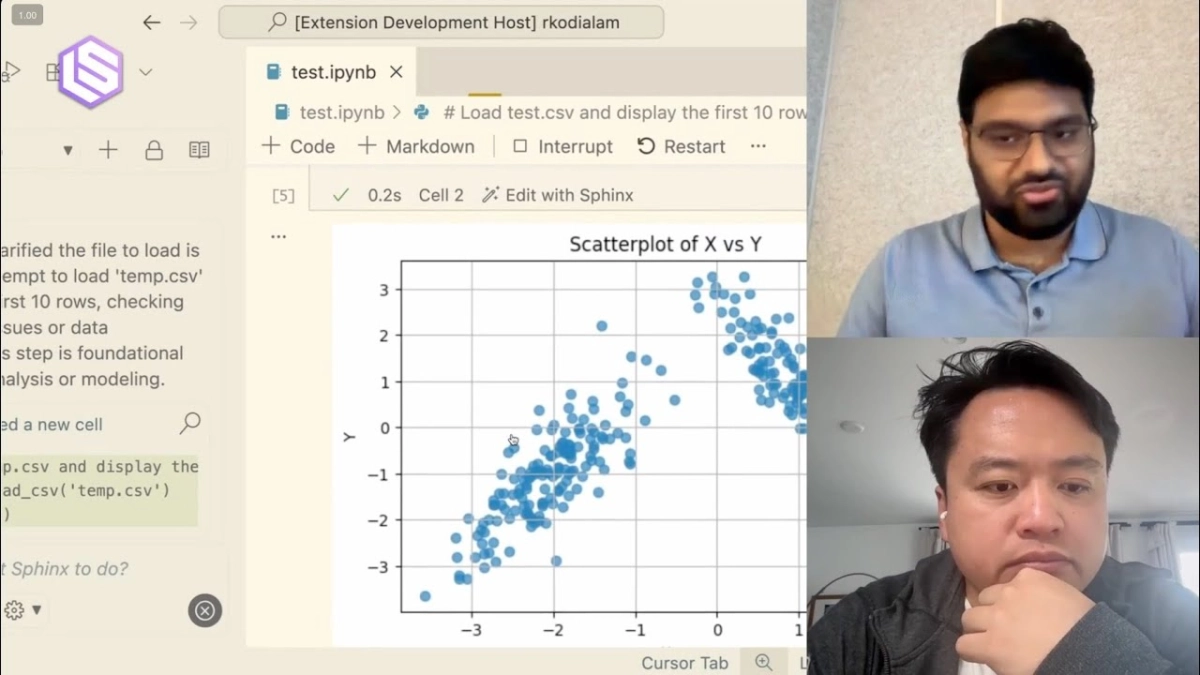

Kodialam, whose career spans deep AI research into transformer-based models and high-stakes quant finance, spoke with Swyx of Smol AI, detailing the genesis and vision behind Sphinx. His experience building AI agents capable of navigating the unpredictable terrain of the stock market left him with a firm conviction: for AI to deliver real value, it must be "objectively correct" and capable of uncovering insights from complex, often messy, data. The goal is not output that merely looks good; it is output that generates revenue and solves tangible business problems.

The core issue, Kodialam explained, stems from two primary challenges. The first is the "representation problem": Large Language Models (LLMs) inherently excel at understanding natural language and code, which are essentially artificial languages. However, they struggle to natively comprehend tabular, structured, or time-series data. Feeding such traditional datasets to LLMs often results in "junk out": unusable or nonsensical outputs. The second is the "reinforcement problem": a fundamental mismatch in workflows. While many AI applications follow a linear "did I do X, if not, try again" loop, real-world data science, particularly exploratory data analysis (EDA) and cleaning, is inherently non-linear. It demands iterative exploration, backtracking, and ill-defined judgment calls that LLMs, prone to "mode collapse" (sticking to the most likely path), are ill-equipped to handle.
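
To make the contrast between a linear retry loop and non-linear exploration concrete, here is a minimal toy sketch in Python. It is an illustration only, not Sphinx's method: the dataset, the three cleaning steps, and the `is_acceptable` check are all hypothetical, invented for this example. The linear loop keeps re-applying its first-choice step, while the exploratory version tries a step, checks the result, and backtracks when it hits a dead end.

```python
from typing import Optional

# Hypothetical toy dataset: one missing price and one obvious outlier.
RAW = [{"price": 10.0}, {"price": None}, {"price": 12.0},
       {"price": 5000.0}, {"price": 11.0}]

def fill_zero(rows):
    """Replace missing prices with 0.0 (tempting, but wrong for this data)."""
    return [{"price": 0.0 if r["price"] is None else r["price"]} for r in rows]

def clip_outliers(rows):
    """Drop rows whose price is 1000 or more; missing prices are kept."""
    return [r for r in rows if r["price"] is None or r["price"] < 1000]

def drop_missing(rows):
    """Drop rows with a missing price."""
    return [r for r in rows if r["price"] is not None]

# Candidate steps, ordered so the "most likely" first choice is a dead end.
STEPS = [("fill_zero", fill_zero),
         ("clip_outliers", clip_outliers),
         ("drop_missing", drop_missing)]

def is_acceptable(rows) -> bool:
    """Assumed quality bar: at least 3 rows, every price in [1, 1000)."""
    return len(rows) >= 3 and all(
        r["price"] is not None and 1 <= r["price"] < 1000 for r in rows
    )

def linear_retry(rows, max_tries: int = 3) -> Optional[list]:
    """'Did I do X? If not, try again': repeat the first-choice step."""
    name, step = STEPS[0]
    for _ in range(max_tries):
        rows = step(rows)
        if is_acceptable(rows):
            return [name]
    return None  # never leaves the first path

def explore(rows, max_depth: int, path: tuple = ()) -> Optional[list]:
    """Depth-first search over step sequences, backtracking on dead ends."""
    if is_acceptable(rows):
        return list(path)
    if max_depth == 0:
        return None
    for name, step in STEPS:
        if name in path:  # use each step at most once per sequence
            continue
        found = explore(step(rows), max_depth - 1, path + (name,))
        if found is not None:
            return found
    return None  # dead end: the caller moves on to its next option

print(linear_retry(RAW))  # None
print(explore(RAW, 3))    # ['clip_outliers', 'drop_missing']
```

In this toy setup, filling missing values with zero permanently contaminates the data, so every sequence that starts with it fails. Only a process willing to abandon that first choice finds a clean result. Real exploratory analysis is far less tidy, since there is rarely a crisp `is_acceptable` check to search against.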