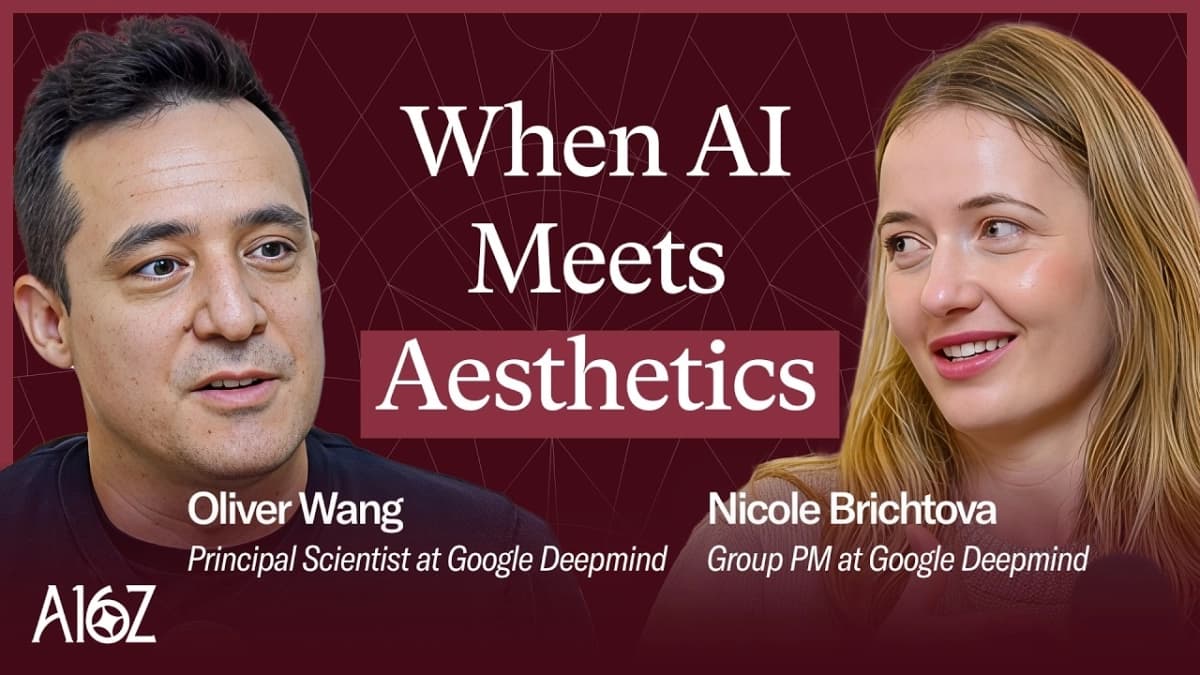

Google DeepMind's Nano Banana, the image model that recently captivated the internet, represents a pivotal moment in the democratization and evolution of digital creativity. Its creators, Principal Scientist Oliver Wang and Group Product Manager Nicole Brichtova, recently sat down with a16z partners Yoko Li and Guido Appenzeller to unravel the model's origins, its unexpected viral ascent, and the profound implications for the future of artistic and everyday digital expression. This discussion illuminated the delicate balance between advanced AI capabilities and intuitive user experience, a core tension in the burgeoning generative AI landscape.

The genesis of Nano Banana traces back to Google DeepMind’s prior work on image models, notably the Imagen family, which excelled in visual quality, and the Gemini 2.0 models, focused on interactive and conversational editing. Wang explained that Nano Banana emerged from a strategic convergence, aiming to marry Gemini’s multimodal intelligence with Imagen’s superior visual fidelity. Brichtova succinctly captured this synergy, noting, “Nano Banana... became kind of the best of both worlds in that sense, like the Gemini smartness and the multimodal conversational nature of it, plus the visual quality of Imagen.” This fusion was not merely a technical achievement but a foundational step towards a more accessible and powerful creative tool.

The model’s viral explosion was not foreseen by its developers. Wang recounted their initial cautious projections for user engagement on LMArena, only to witness an unprecedented surge. "We had to keep upping that number as people were going to LMArena to use the model," he remarked, describing the scramble to meet demand. The true "wow" moment, according to Brichtova, arrived when the model generated images that strikingly resembled her, zero-shot. This personalized output fostered an emotional connection, transforming the tool from a novelty into something deeply personal and driving widespread adoption and sharing of images, such as the popular 80s-themed makeovers.

A key insight emerging from the discussion is AI's profound potential to empower creatives by streamlining arduous processes. Brichtova emphasized that these models allow artists to dedicate "90% of their time being creative versus 90% of their time like editing things and doing these tedious kind of manual operations." This liberation from drudgery shifts the creative paradigm, enabling a focus on conceptualization and artistic vision rather than repetitive technical execution. Guido Appenzeller concurred, asserting that AI "ultimately really empowers artists" by furnishing them with novel tools.

The conversation also underscored the critical interplay between user control and automation, a spectrum that defines the utility of AI tools. For professionals, the demand is often for granular control, akin to the intricate "knobs and dials" of traditional software. Conversely, everyday users, like Brichtova’s parents, favor a simpler, conversational interface where they can "talk to images" without needing to master complex functionalities. This duality suggests a future where AI interfaces are highly adaptive, perhaps even intelligently suggesting next steps based on user intent and context, thereby bridging the gap between sophisticated capabilities and broad accessibility.

Character consistency, a persistent challenge in earlier image generation models, was highlighted as a crucial area of focus for Nano Banana. The ability to maintain a consistent subject across multiple iterations is vital for narrative and sequential art forms. Wang noted that this, alongside customizability, was rigorously monitored during development. The iterative nature of artistic creation aligns perfectly with AI's capacity for rapid prototyping and refinement, offering artists a dynamic collaborative partner.

Oliver Wang expressed significant optimism regarding AI's role in education, particularly for visual learners. He posited that current AI tutors, predominantly text-based, fall short for a large segment of students. The integration of visual cues, images, and diagrams alongside textual explanations promises to make learning more engaging and accessible. This multimodal approach could revolutionize educational content, moving beyond static textbooks to dynamic, personalized visual learning experiences.

Looking to the future, the developers discussed the concept of AI as a "force multiplier," particularly in the realm of video. The ability to generate consistent frames, leading to short videos and eventually full-length films, unlocks new creative frontiers. Latency, the time a generation takes, is paramount here; a prompt that takes ten seconds to render is engaging, while two minutes can kill the creative flow. This emphasizes that raw processing power, combined with intelligent model design, directly translates into increased creative output and exploration.

The discussion concluded by acknowledging the inherent diversity in user needs and preferences. While some professionals will always demand pixel-level control, others, and certainly the vast majority of consumers, seek effortless creation. This necessitates a landscape of diverse AI models and interfaces, each catering to specific needs and levels of technical engagement. The ultimate goal, as Wang articulated, is for AI to serve as a tool that empowers individuals to achieve more, understood through the lens of human intention and creativity.