Salesforce and Databricks are tearing down the walls between their data platforms. The two enterprise giants have announced the general availability of a bi-directional Zero Copy integration, signaling a major shift away from the slow, costly data pipelines that have plagued businesses for decades. The new integration allows Salesforce Data Cloud and the Databricks Data Intelligence Platform to access each other's data in near real time, without making copies.

For years, the holy grail of a 360-degree customer view has been a mirage, shimmering behind a desert of brittle ETL processes and stale data. Companies keep their transactional and log data in powerful lakehouses like Databricks, while their customer relationship data lives in CRMs like Salesforce. Getting them to talk to each other meant building complex, expensive pipelines to constantly copy data back and forth. By the time the data arrived, it was already out of date.

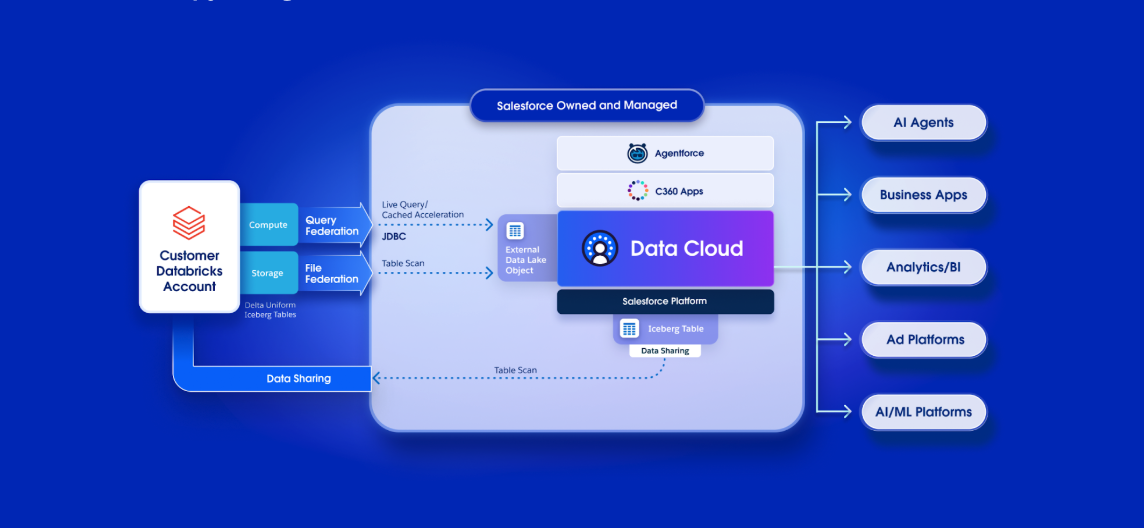

The integration aims to end that. According to the announcement, it works in two directions. First, using a new feature called Zero Copy File Federation, Salesforce can now directly access and use massive datasets stored in Databricks. It doesn't query the data in the traditional sense; it reads directly from the underlying Apache Iceberg tables at the storage layer. This means a service agent in Salesforce can see a customer's complete, up-to-the-minute transaction history, billions of rows of it, without putting any computational strain on the Databricks source system.
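To make the storage-layer idea concrete, here is a toy sketch in Python. It is not the Salesforce or Databricks implementation and uses no real Iceberg API: the `SourceEngine` class, the `metadata.json` manifest, and the CSV data files are all hypothetical stand-ins. It only illustrates the general pattern, in which a reader resolves a table's metadata to a list of data files and reads those files itself, so the source engine never serves a query.

```python
import csv
import json
import tempfile
from pathlib import Path


class SourceEngine:
    """Toy stand-in for the source platform's query engine (hypothetical)."""

    def __init__(self, table_dir: Path):
        self.table_dir = table_dir
        self.queries_served = 0  # rough proxy for compute load on the source

    def write_table(self, rows, rows_per_file=2):
        """Write rows as data files plus a manifest that lists them."""
        data_files = []
        for i in range(0, len(rows), rows_per_file):
            path = self.table_dir / f"data-{i // rows_per_file}.csv"
            with path.open("w", newline="") as f:
                writer = csv.DictWriter(f, fieldnames=["customer_id", "amount"])
                writer.writeheader()
                writer.writerows(rows[i:i + rows_per_file])
            data_files.append(path.name)
        manifest = {"data_files": data_files}
        (self.table_dir / "metadata.json").write_text(json.dumps(manifest))

    def query(self, customer_id):
        """Traditional path: the source engine scans on the caller's behalf."""
        self.queries_served += 1
        return read_via_files(self.table_dir, customer_id)


def read_via_files(table_dir: Path, customer_id: str):
    """File-level path: resolve the manifest, then read data files directly."""
    manifest = json.loads((table_dir / "metadata.json").read_text())
    amounts = []
    for name in manifest["data_files"]:
        with (table_dir / name).open(newline="") as f:
            for row in csv.DictReader(f):
                if row["customer_id"] == customer_id:
                    amounts.append(float(row["amount"]))
    return amounts


with tempfile.TemporaryDirectory() as tmp:
    engine = SourceEngine(Path(tmp))
    engine.write_table([
        {"customer_id": "c1", "amount": 10.0},
        {"customer_id": "c2", "amount": 3.0},
        {"customer_id": "c1", "amount": 5.5},
    ])
    # The consumer reads the files itself; the engine's counter stays at zero.
    history = read_via_files(Path(tmp), "c1")
    print(history, engine.queries_served)
```

In this toy model the consumer pays the cost of scanning the files, which is the trade the article describes: the read happens, but not on the source system's compute.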

The second direction, Zero Copy File Sharing, allows the curated and unified customer profiles built in Salesforce Data Cloud to be shared directly into the Databricks Unity Catalog. This gives data scientists and AI developers in the Databricks environment live access to the "golden record" of a customer. They can run analytics or train machine learning models on the freshest possible data without waiting for a nightly data dump.
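The difference between a nightly dump and live shared access can be shown with a small in-memory analogy in Python. This is only an illustration of the concept, not how Data Cloud or Unity Catalog work internally; the `profiles` dictionary and its fields are made up for the example.

```python
import copy
from types import MappingProxyType

# Hypothetical unified profiles held by the owning system.
profiles = {"c1": {"name": "Ada", "tier": "silver"}}

# Pipeline approach: a nightly dump produces an independent copy.
nightly_dump = copy.deepcopy(profiles)

# Zero-copy approach: consumers get a read-only view over the same data.
shared_view = MappingProxyType(profiles)

# The owner updates the profile after the dump has already run.
profiles["c1"]["tier"] = "gold"

print(nightly_dump["c1"]["tier"])  # the copy still holds the old value
print(shared_view["c1"]["tier"])   # the view reflects the update
```

The copy goes stale the moment the owner changes a record, while the view always reflects the current state and cannot be used to add or replace top-level entries.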