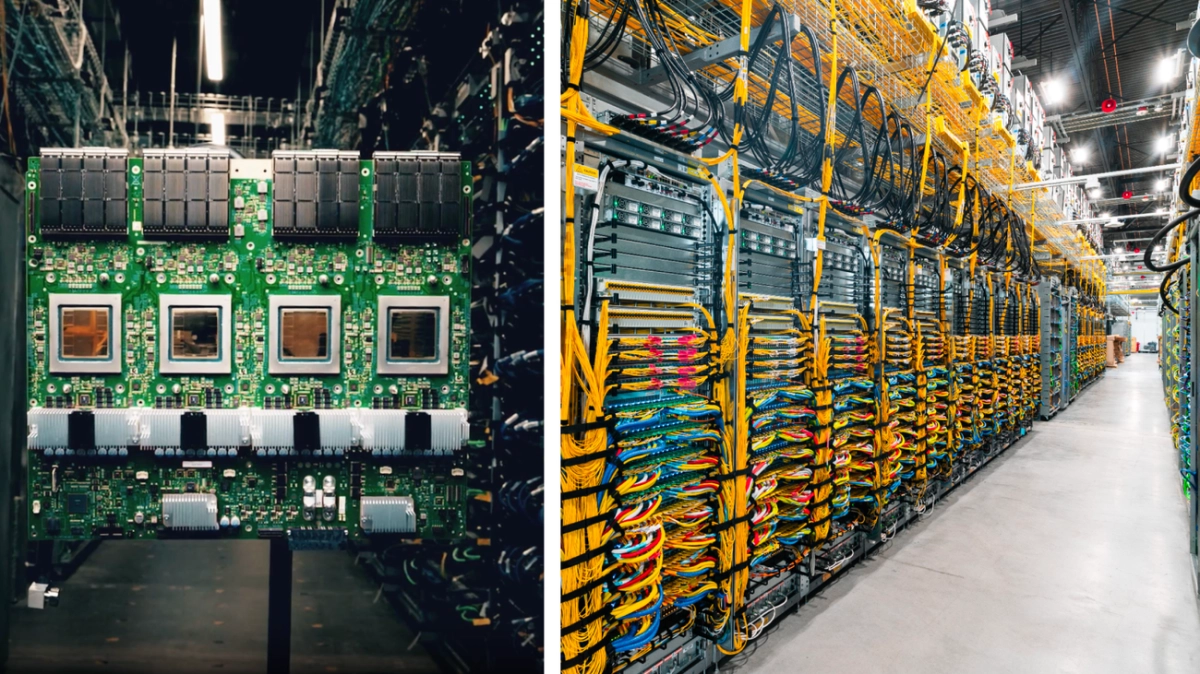

Google has officially launched Ironwood, its seventh-generation Tensor Processing Unit (TPU), and made it available to Google Cloud customers. The custom chip is engineered for the demands of modern AI, with an emphasis on both performance and energy efficiency. Ironwood is positioned to accelerate the shift toward high-volume, low-latency AI inference, marking a significant step in Google's hardware strategy.

The industry's focus is shifting rapidly from training massive frontier models to deploying them for responsive, real-world interactions. Ironwood is purpose-built for that shift, targeting high-volume inference and model serving. According to the announcement, it delivers more than 4x better performance per chip than its predecessor for both training and inference workloads, a gain that should translate into faster, more responsive AI services running in the cloud.