Microsoft Research has disclosed a critical AI safety vulnerability: generative AI tools can design harmful proteins that bypass existing biosecurity screens. The team's swift, confidential response patched this "zero-day" threat and established a framework for responsible scientific disclosure. This proactive approach sets a precedent for managing the dual-use risks of advanced AI in sensitive fields like biology.

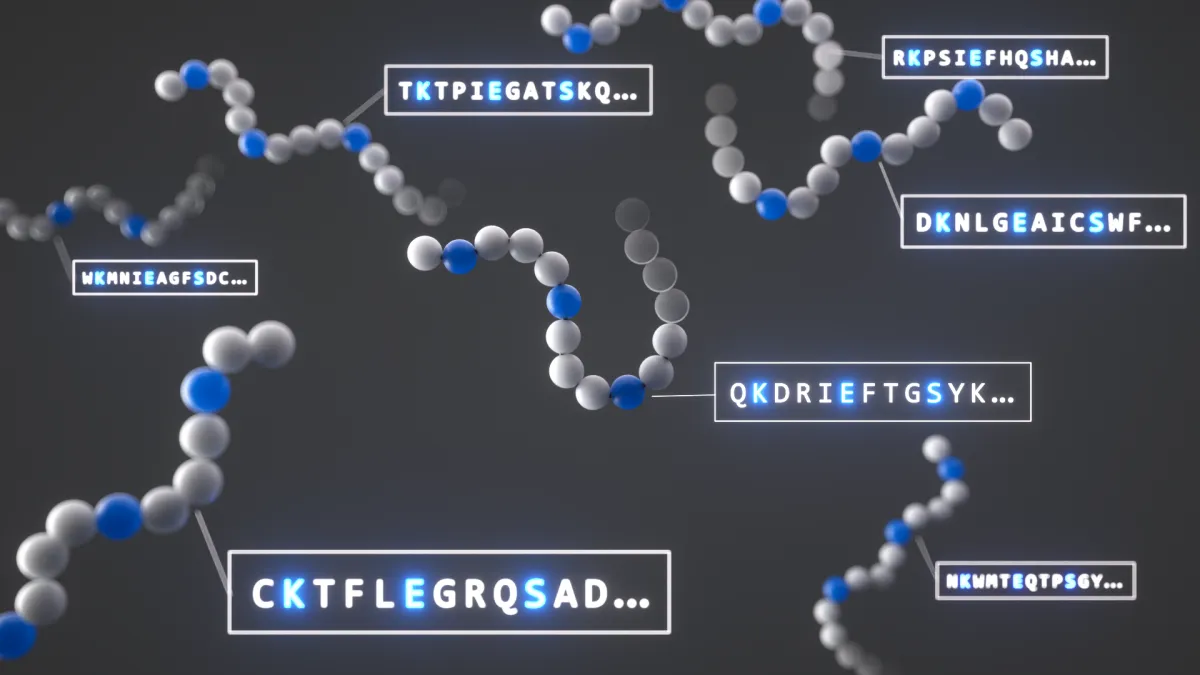

The convergence of AI and biology opens a major frontier for innovation, but it also introduces serious biosecurity risks. AI-assisted protein design (AIPD) tools promise new medicines and materials, yet they can also generate modified versions of dangerous proteins, such as ricin. According to the announcement, computer-based studies found that these AI-reformulated proteins could evade the biosecurity screening systems currently employed by DNA synthesis companies, which serve as crucial gatekeepers for experimental biological sequences. The finding points to an immediate, tangible AI safety concern and shows how quickly technological advancement can outpace existing safeguards.