#Local AI

4 articles with this tag

Artificial Intelligence

Run LLMs Locally with Llama.cpp

Cedric Clyburn explains how Llama.cpp makes it possible to run large language models locally on consumer hardware, highlighting the GGUF format and the optimized kernels that make it efficient and accessible.

about 2 months ago

Startup News

Tiiny AI Pocket Lab Hits $1M on Kickstarter

Tiiny AI's Pocket Lab, a personal AI supercomputer, raised over $1 million in five hours on Kickstarter, signaling demand for local AI processing.

about 2 months ago

AI Research

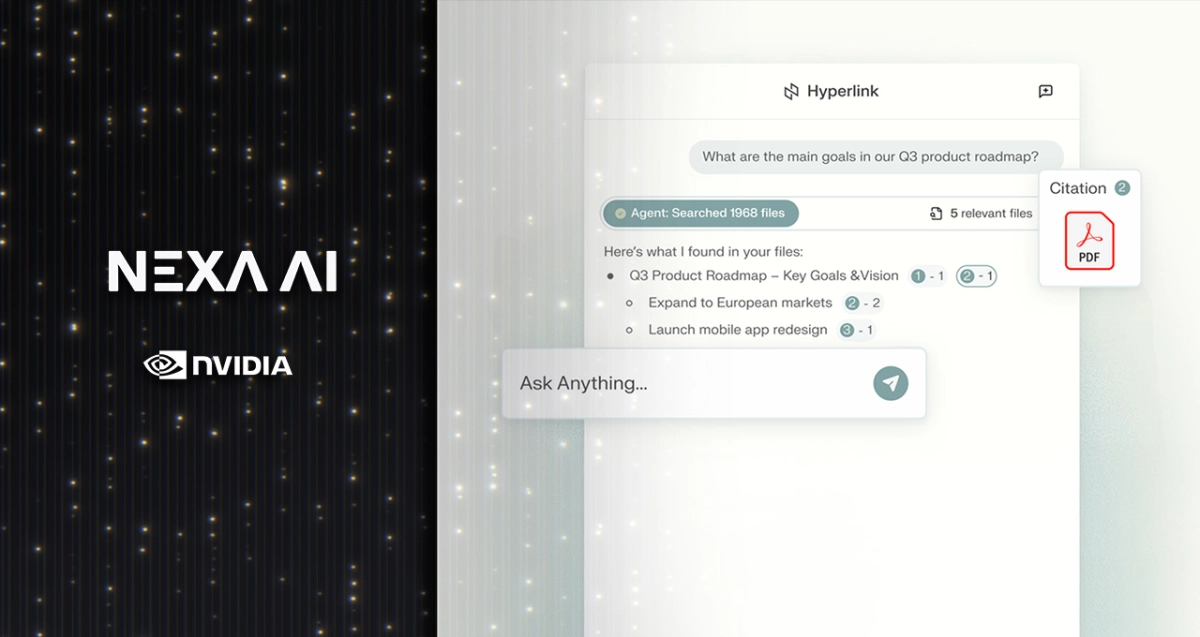

Hyperlink AI Search Boosts Local Intelligence on RTX PCs

Hyperlink AI search on NVIDIA RTX PCs now offers 3x faster indexing and 2x faster LLM inference, enabling powerful, private local AI for personal data.

6 months ago

AI Research

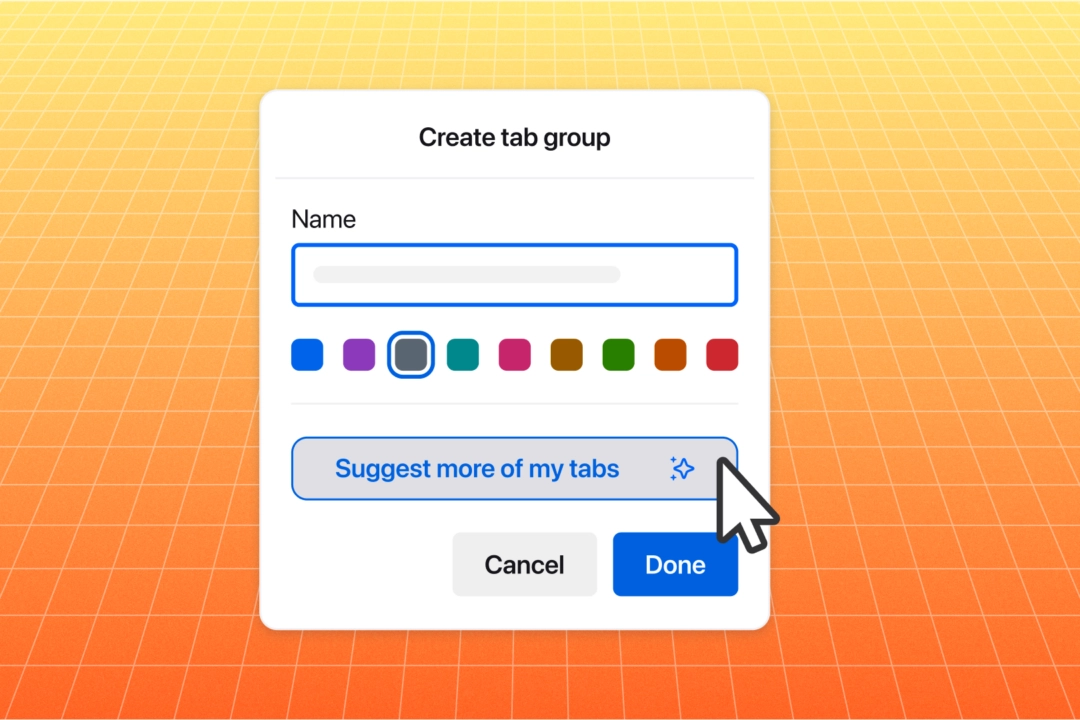

Firefox Local AI Redefines Browser Privacy

6 months ago