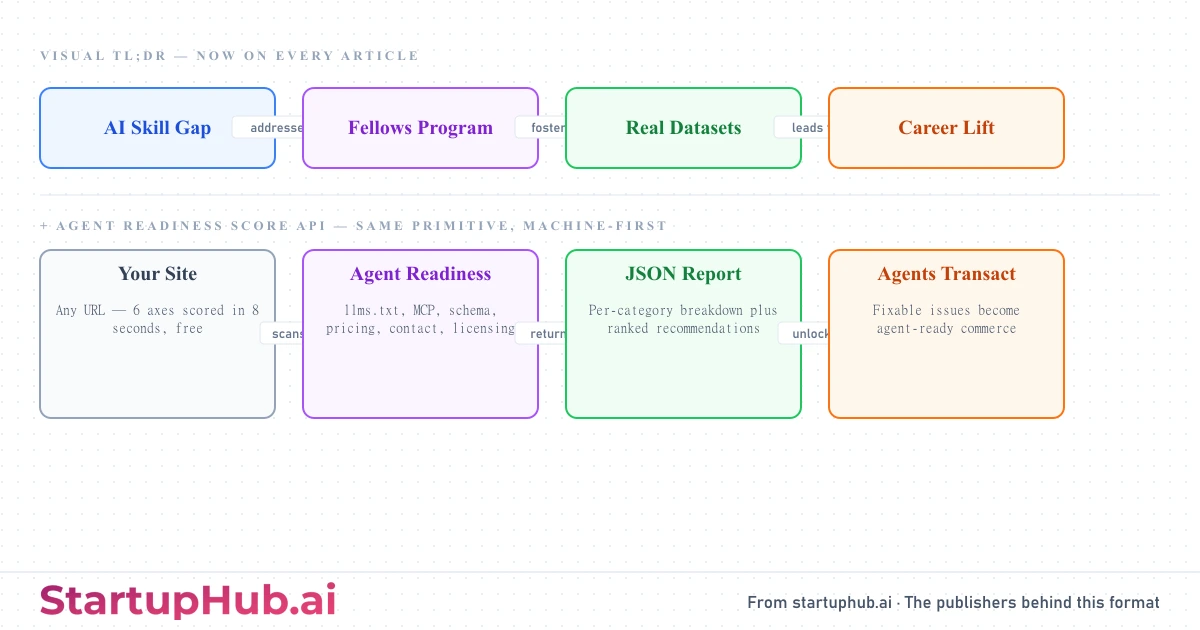

Two new things shipped on StartupHub today. Every article we publish from this moment forward carries an inline flow diagram, the Visual TL;DR, that compresses the story's argument into four to six color-coded steps. And the Agent Readiness Score is now a public API, accessible via REST, MCP, n8n, Zapier, Make, and a Claude.ai connector. Both rest on the same conviction: the next billion reads of journalism will be done by AI agents, and the publisher that serves them best wins the decade.

Visual TL;DR is on every article from today

Most news still reads the way it did in 2005. A reader scans the headline, skims the first paragraph, and decides whether to keep going. That model breaks when an AI agent is doing the reading. An agent does not skim. It either pulls the structured argument in milliseconds or moves on to the next source.

The Visual TL;DR is built for both audiences in a single artifact. Human readers get a one-glance summary showing how the story's pieces connect, problem to technology to outcome, in colors that map to function. AI agents get a graph baked into every page that they can pull as Markdown in roughly 200 tokens, rather than scraping 5,000 tokens of page content.

Nodes are color-coded by role. Causes and problems sit in blue. Core technologies and key actors sit in purple. Effects and intermediate results sit in green. Final outcomes sit in orange. Click "Show details" on any diagram and each card expands to reveal a 10-to-14-word description pulled directly from the article body. Nothing in those descriptions is generic boilerplate. Every word is article-specific and traceable to the source text.
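The underlying schema isn't published here, so what follows is only a minimal sketch under stated assumptions. It models a Visual TL;DR as a short list of steps, each tagged with one of the four color-coded roles described above. It enforces the four-to-six-step and 10-to-14-word rules and renders the result as compact Markdown of the kind an agent might pull. The names `Role`, `Step`, `validate`, and `to_markdown`, and the Markdown layout, are hypothetical illustrations, not StartupHub's actual format or API.

```python
from dataclasses import dataclass
from enum import Enum


class Role(Enum):
    # Hypothetical role enum; each value is the display color the article assigns to that role.
    PROBLEM = "blue"      # causes and problems
    CORE = "purple"       # core technologies and key actors
    EFFECT = "green"      # effects and intermediate results
    OUTCOME = "orange"    # final outcomes


@dataclass(frozen=True)
class Step:
    # Hypothetical node record: a short card label, its role, and the
    # expandable "Show details" description taken from the article body.
    label: str
    role: Role
    detail: str


def validate(steps: list[Step]) -> None:
    """Raise ValueError unless the diagram follows the stated size rules."""
    if not 4 <= len(steps) <= 6:
        raise ValueError(f"expected 4 to 6 steps, got {len(steps)}")
    for step in steps:
        words = len(step.detail.split())
        if not 10 <= words <= 14:
            raise ValueError(
                f"{step.label!r}: detail has {words} words, expected 10 to 14"
            )


def to_markdown(title: str, steps: list[Step]) -> str:
    """Render a validated diagram as a heading plus one numbered line per step."""
    validate(steps)
    lines = [f"## Visual TL;DR: {title}"]
    for i, step in enumerate(steps, start=1):
        lines.append(
            f"{i}. **{step.label}** ({step.role.name.lower()}, {step.role.value}): "
            f"{step.detail}"
        )
    return "\n".join(lines)
```

Keeping each step on a single line is one plausible way to keep such a summary to a few hundred tokens. The sketch does not measure tokens, and an actual implementation may also encode the edges between nodes rather than a simple ordered list.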