The era of the simple text generator is officially over.

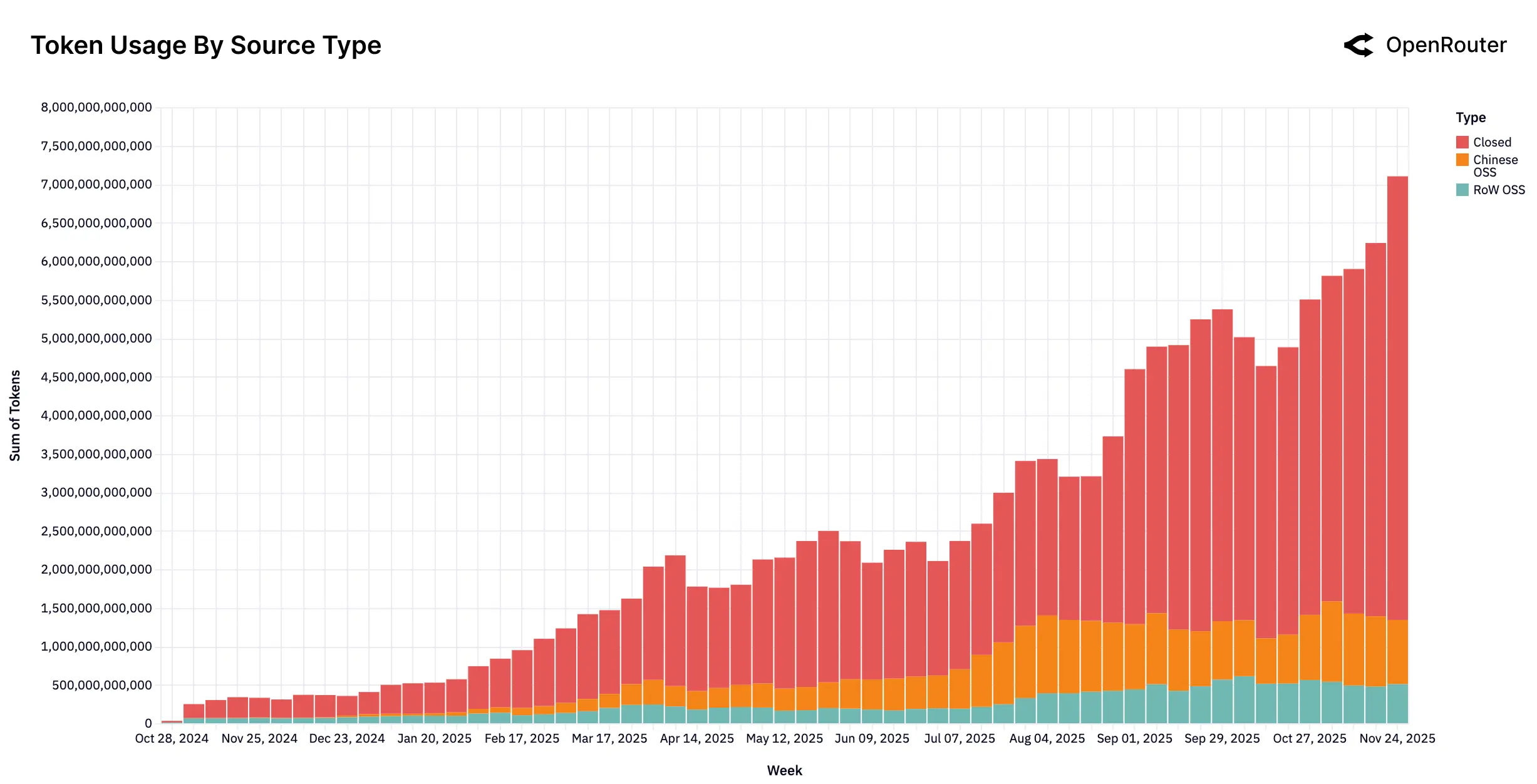

A massive new empirical study, analyzing over 100 trillion tokens of real-world usage data, finds that the large language model market has fundamentally shifted from single-pass text generation to complex, multi-step agentic inference. The report, released by OpenRouter and a16z, paints a picture of a hyper-specialized, multi-polar ecosystem where proprietary models still lead, but open-source competitors, particularly those emerging from China, are rapidly carving out large, sticky market segments.

The pivotal moment, according to the analysis, was the launch of OpenAI’s o1 in late 2024. Since then, models optimized for deep reasoning, like o1 and Gemini Pro, have exploded to account for over 50% of all token usage. This isn't just a minor technical tweak; it reflects a complete change in how users interact with AI.

Users are no longer typing simple questions into a chat box. They are feeding models entire codebases, documentation sets, and complex multi-step instructions. The average prompt length has ballooned fourfold and now exceeds 6,000 tokens. This complexity is driven almost entirely by programming tasks, whose share of token volume has surged from 11% to more than half, making programming the dominant professional category.