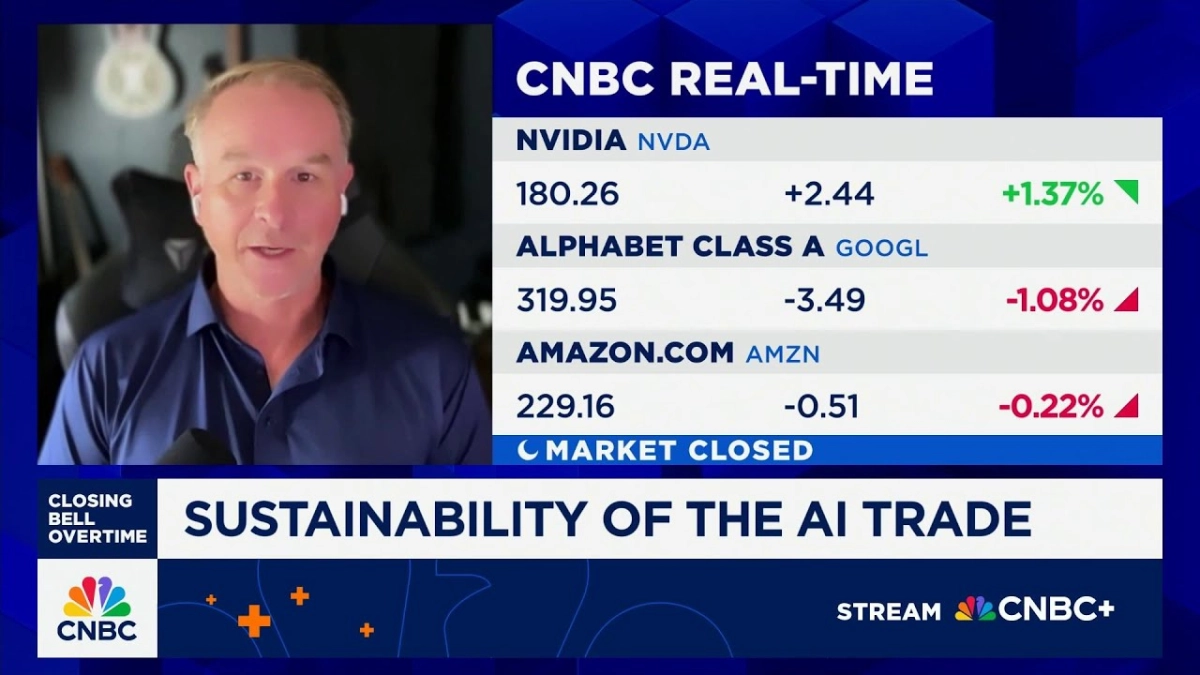

The perceived "AI chip race" between tech giants like Alphabet (Google) and Nvidia is less a head-to-head sprint and more a strategic segmentation of an expanding market. While Google's custom Tensor Processing Units (TPUs) excel at highly specialized tasks for its internal services, Nvidia's Graphics Processing Units (GPUs) maintain a commanding lead for broader, third-party AI workloads due to their architectural flexibility and extensive ecosystem. This fundamental divergence in approach defines the competitive landscape, as articulated by Ben Bajarin, CEO of Creative Strategies, during a recent CNBC "Closing Bell Overtime" interview.

Bajarin spoke with the CNBC host about where Google and Nvidia truly compete and where their strategies diverge. The discussion was prompted by recent speculation that Google might sell its TPUs to Meta for Meta's own AI workloads, which raised questions about Google's broader market ambitions. Bajarin quickly clarified, however, that such a deal would not necessarily pose a direct competitive threat to Nvidia's core business.

Google's TPUs are, by design, highly optimized for the company's vast internal AI infrastructure. "The first customer for TPUs is primarily Google," Bajarin noted, explaining that these chips are "built for their services—everything from YouTube to Search to Gemini as we see today, and it runs very, very well on that." This internal optimization allows Google to tailor hardware precisely to its unique, massive-scale AI demands, driving efficiency and performance for its proprietary applications.