Data annotation, or labeling, is one of the most important parts of building a successful and scalable AI/ML project. Labeled data is the starting point for training: it shows a machine learning model what it needs to recognize and analyze in order to produce accurate outputs. Many companies rely on small internal teams, business process outsourcing, or a new crop of startups to fill the gap in annotating training data.

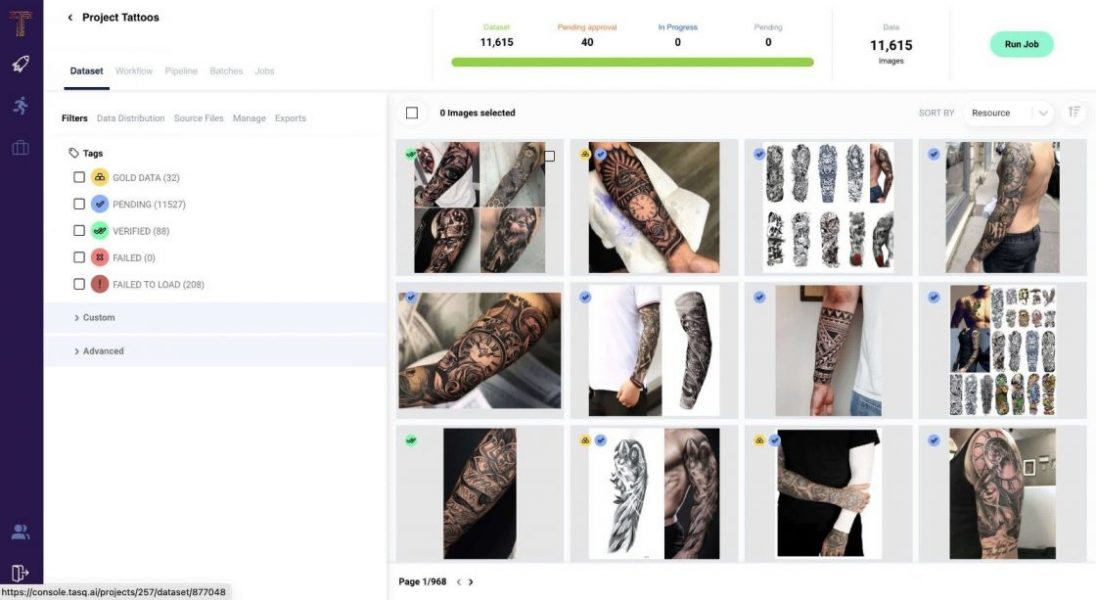

Israeli startup Tasq.ai claims its data labeling for AI is 30 times faster than current methods. It gets there by combining ML models and proprietary technology to “intelligently deconstruct complex image data,” says Erez Moscovich, CEO and co-founder of Tasq.ai. Once the data is broken into simple “micro-tasks,” those tasks are gamified to draw on what the company says is an untapped, unbiased global human workforce of millions, who label and validate the data. The company says it can offer unlimited scale without compromising the quality of the dataset or introducing biases.

Tasqers (annotators) responsible for validating results are shown only the relevant portions of images and are asked whether the portion they are looking at contains a particular object, the company says on its website. Each Tasqer's judgments are then validated, weighted, rated, and aggregated with those of others into a structured schema of actionable insights.
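
Tasq.ai has not published how it weights or aggregates these judgments. To give a rough sense of what combining several yes/no answers can look like, here is a minimal sketch of a generic weighted majority vote. This is a standard crowdsourcing technique, not the company's algorithm, and the names used (`Judgment`, `aggregate`, the per-annotator `weights`, the `0.5` threshold) are all hypothetical.

```python
from collections import defaultdict
from dataclasses import dataclass


@dataclass(frozen=True)
class Judgment:
    """One yes/no answer from one annotator about one image crop (hypothetical schema)."""
    annotator_id: str
    task_id: str
    answer: bool  # True = "yes, the object is present"


def aggregate(judgments, weights, default_weight=1.0, threshold=0.5):
    """Combine yes/no judgments per task with a weighted majority vote.

    `weights` maps annotator_id to a reliability weight (an assumption here;
    in practice it might come from accuracy on known-answer tasks).
    Returns, per task, the weighted share of "yes" votes and the final label.
    """
    yes_weight = defaultdict(float)
    total_weight = defaultdict(float)

    for j in judgments:
        w = weights.get(j.annotator_id, default_weight)
        total_weight[j.task_id] += w
        if j.answer:
            yes_weight[j.task_id] += w

    results = {}
    for task_id, total in total_weight.items():
        share = yes_weight[task_id] / total if total > 0 else 0.0
        results[task_id] = {"yes_share": share, "label": share >= threshold}
    return results


# Illustrative data only: three annotators answer "does this crop contain a car?"
weights = {"a": 0.9, "b": 0.6, "c": 0.8}
judgments = [
    Judgment("a", "crop-1", True),
    Judgment("b", "crop-1", True),
    Judgment("c", "crop-1", False),
    Judgment("a", "crop-2", False),
    Judgment("b", "crop-2", True),
    Judgment("c", "crop-2", False),
]

result = aggregate(judgments, weights)
for task_id, summary in result.items():
    print(task_id, summary)
```

In this toy example, two of the three annotators say "yes" for `crop-1` and their combined weight outweighs the dissenting vote, so the crop is labeled positive. For `crop-2`, the single "yes" comes from the lowest-weighted annotator and is overruled.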