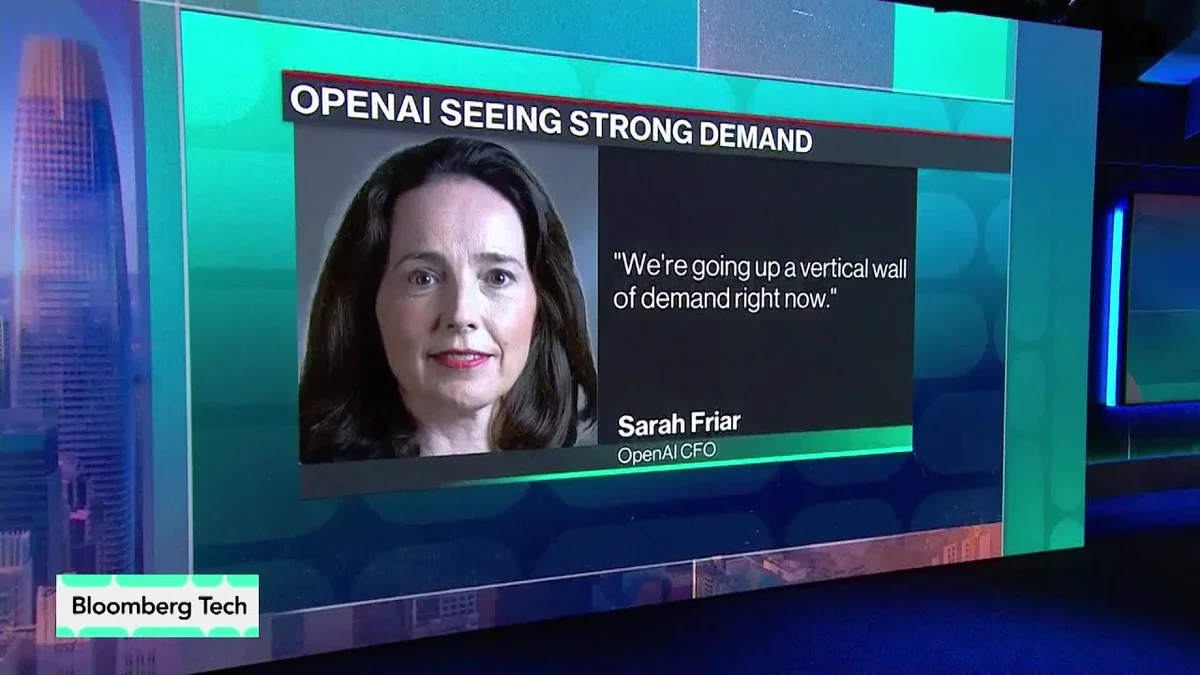

In a recent Bloomberg Tech segment, OpenAI's Chief Financial Officer, Sarah Friar, offered a stark assessment of the company's current operating climate: a 'vertical wall of demand' for artificial intelligence compute. Friar, a seasoned executive who previously served as CFO of Square (now Block, Inc.) and CEO of Nextdoor, brings a deep understanding of scaling high-growth technology ventures. Her comments on OpenAI's trajectory underscore both the pressure and the opportunity facing leading AI developers.

Friar's perspective comes at a pivotal moment for OpenAI, a company that has risen to the forefront of generative AI with products like ChatGPT and DALL-E. The rapid adoption of these tools has driven up demand for the computational resources required to train and operate them. This demand, Friar suggests, is not merely a surge but a continuous, steep incline.

The full discussion can be found on Bloomberg Technology's YouTube channel.