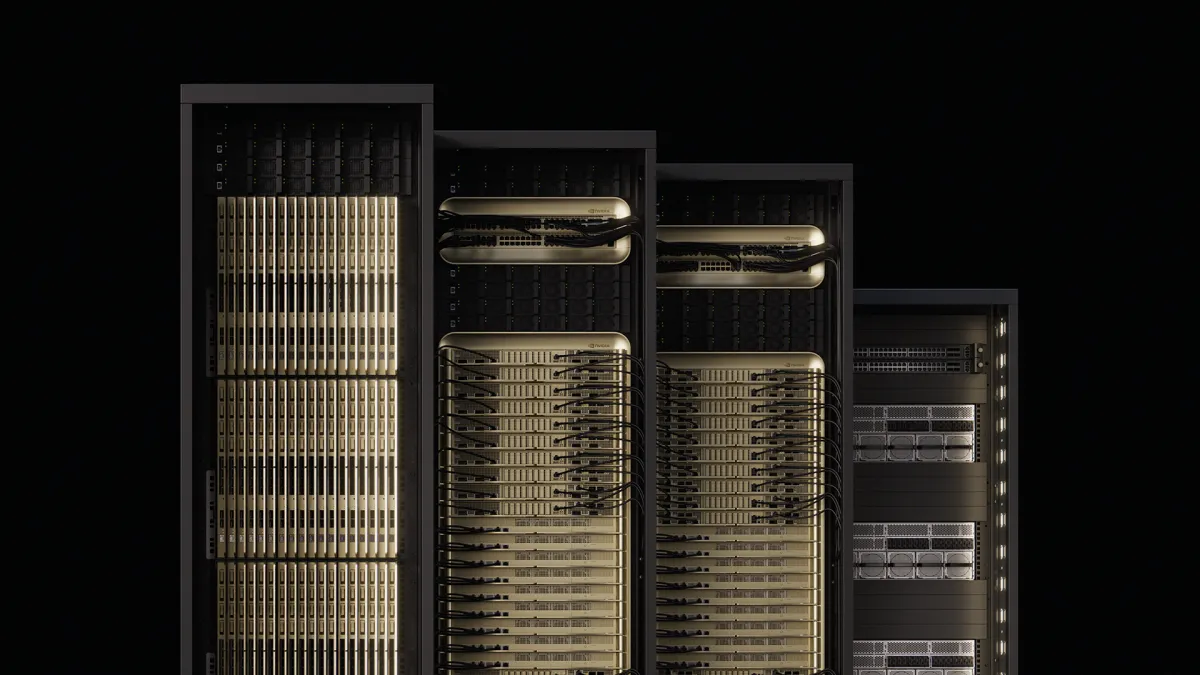

NVIDIA is revealing its plans for the next generation of AI infrastructure, which it calls "gigawatt AI factories," built around the new NVIDIA Vera Rubin platform. At the OCP Global Summit, the company showed off its Vera Rubin NVL144 MGX-generation rack servers and highlighted the broader ecosystem supporting them. This marks a major shift in data center design. It is not just about faster GPUs; it is a fundamental change in how AI compute is powered, cooled, and scaled.

The Vera Rubin NVL144 compute tray is built for efficiency and performance at scale. It uses a modular, 100% liquid-cooled architecture in which a central circuit board replaces traditional cabling, promising faster assembly and easier servicing. The tray also supports NVIDIA ConnectX-9 800GB/s networking and NVIDIA Rubin CPX for large-scale inference. Together with NVIDIA's plan to release these innovations as open standards, this modular design aims to speed up deployment and let partners mix and match components to scale rapidly.