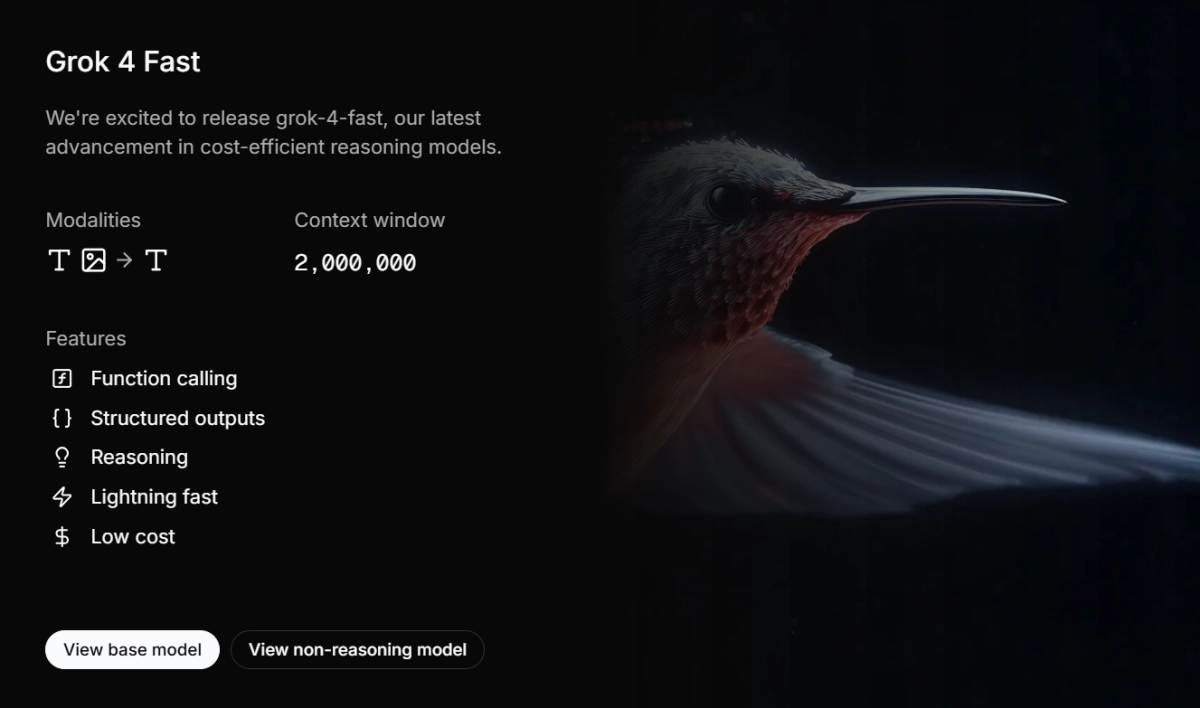

xAI has quietly rolled out a significant upgrade to its Grok 4 Fast models, expanding the context window to 2 million tokens. The change turns what was already a cost-efficient reasoning model into one that can handle far larger inputs. Grok 4 Fast can now ingest entire codebases, long documents, or extended multi-turn conversations in a single prompt, without relying on the retrieval-augmented generation (RAG) pipelines typically used to work around smaller context limits.
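Whether a given codebase actually fits depends on how many tokens it turns into. The sketch below is a rough pre-flight check under stated assumptions. It estimates tokens with a ~4 characters-per-token rule of thumb, which is an assumption (the real ratio depends on the model's tokenizer). It also reserves an arbitrary 50,000 tokens for instructions and the model's answer. The helpers `estimate_tokens`, `estimate_repo_tokens`, and `fits_in_context` are hypothetical illustrations, not part of any xAI tooling.

```python
import os

# Assumption: ~4 characters per token is a rough heuristic for English text and
# source code; the true ratio depends on the model's tokenizer.
CHARS_PER_TOKEN = 4
CONTEXT_WINDOW = 2_000_000  # context size reported for Grok 4 Fast


def estimate_tokens(text: str, chars_per_token: int = CHARS_PER_TOKEN) -> int:
    """Rough token estimate: character count divided by chars_per_token, rounded up."""
    return -(-len(text) // chars_per_token)


def estimate_repo_tokens(root: str, extensions=(".py", ".md", ".ts", ".go", ".rs")) -> int:
    """Hypothetical helper: sum estimated tokens over matching files under root."""
    total = 0
    for dirpath, _dirnames, filenames in os.walk(root):
        for name in filenames:
            if name.endswith(extensions):
                path = os.path.join(dirpath, name)
                with open(path, encoding="utf-8", errors="ignore") as f:
                    total += estimate_tokens(f.read())
    return total


def fits_in_context(tokens: int, window: int = CONTEXT_WINDOW, reserve: int = 50_000) -> bool:
    """True if the input plus a reserve for prompt and answer fits in the window.

    The 50,000-token reserve is an arbitrary illustrative choice.
    """
    return tokens + reserve <= window
```

For example, `fits_in_context(estimate_repo_tokens("path/to/repo"))` gives a quick yes/no. For an exact count, use the provider's own tokenizer rather than a character heuristic.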

Initially launched in September as a leaner alternative to the flagship Grok 4, the Fast variants use a unified architecture trained end to end with reinforcement learning. That training enables seamless tool use for web search, code execution, and multimodal analysis, positioning the models as a versatile option for developers.